GTC Europe 2016: NVIDIA Keynote Live Blog with CEO Jen-Hsun Huang

by Ian Cutress on September 28, 2016 4:17 AM EST- Posted in

- GPUs

- Quadro

- 2011

- Trade Shows

- NVIDIA

- Tegra

- Live Blog

- GTC Europe

04:24AM EDT - I'm here at the first GTC Europe event, ready to go for the Keynote talk hosted by CEO Jen-Hsun Huang.

04:25AM EDT - This is a satellite event to the main GTC in San Francisco. By comparison, the main GTC has 5000 attendees; this one has 1600-ish

04:25AM EDT - This is essentially GTC on the road - they're doing 5 or 6 of these satellite events around the world after the main GTC

04:27AM EDT - We're about to start

04:29AM EDT - Opening video

04:30AM EDT - 'Deep Learning is helping farmers analyze crop data in days, which used to take years'

04:30AM EDT - 'Using AI to deliver relief in harsh conditions' (drones)

04:30AM EDT - 'Using AI to sort trash'

04:30AM EDT - Mentioning AlphaGo

04:31AM EDT - JSH to the stage

04:32AM EDT - 'GPUs can do what normal computing cannot'

04:32AM EDT - 'We're at the beginning of something important, the 4th industrial revolution'

04:33AM EDT - 'Several things at once came together to make the PC era something special'

04:33AM EDT - 'In 2006, the mobile revolution and Amazon AWS happened'

04:33AM EDT - 'We could put high performance compute technology in the hands of 3 billion people'

04:34AM EDT - '10 years later, we have the AI revolution'

04:34AM EDT - 'Now, we have software that writes software. Machines learn. And soon, machines will build machines.'

04:35AM EDT - 'In each era of computing, a new computing platform emerged'

04:35AM EDT - 'Windows, ARM, Android'

04:35AM EDT - 'A brand new type of processor is needed for this revolution - it happened in 2012 with the Titan X'

04:37AM EDT - 'Deep Learning was in the process, and the ability to generalize learning was a great thing, but it had a handicap'

04:37AM EDT - 'It required a large amount of data to write its own software, which is computationally exhausting'

04:38AM EDT - 'The handicap lasted two decades'

04:38AM EDT - 'Deep Neural Nets were then developed on GPUs to solve this'

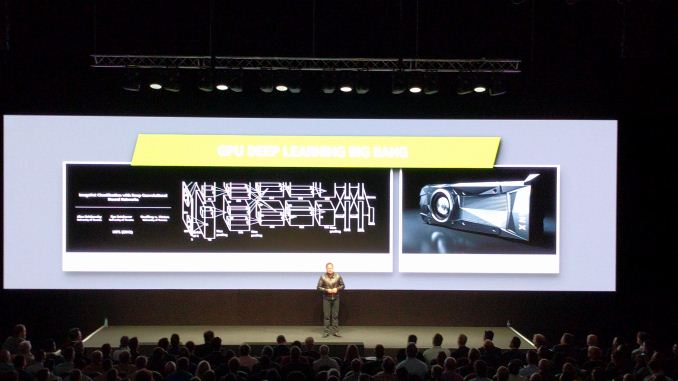

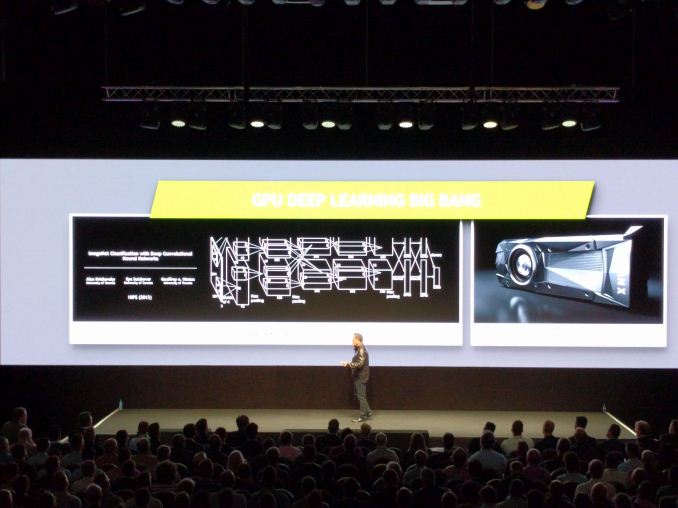

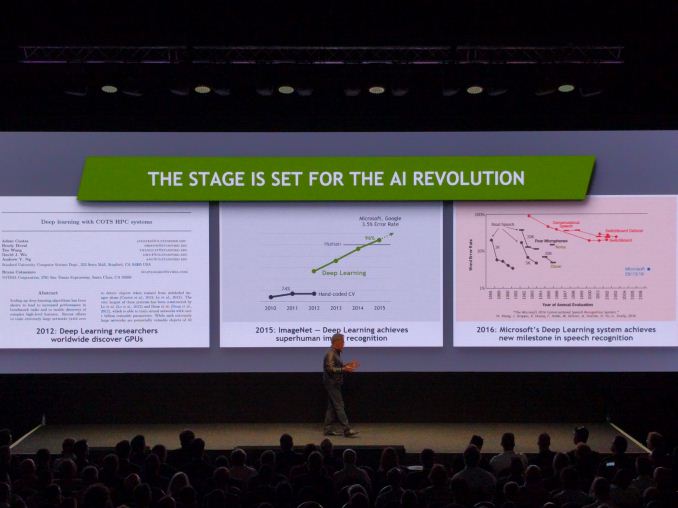

04:39AM EDT - 'ImageNet Classification with Deep Convolutional Neural Networks' by Alex Krizhevsky at the University of Toronto

04:40AM EDT - 'The neural network out of that paper, 'AlexNet' beat seasoned Computer Vision veterans with hand tuned algorithms at ImageNet'

04:40AM EDT - 'One of the most exciting events in computing for the last 25 years'

04:41AM EDT - 'Now, not a week goes by without a new breakthrough or milestone being reached'

04:42AM EDT - 'e.g., 2015 where Deep Learning beat humans at ImageNet, 2016 where speech recognition reaches sub-3% in conversational speech'

04:43AM EDT - 'As we grow, the computational complexity of these networks becomes even greater'

04:44AM EDT - 'Now, Deep Learning can beat humans at image recognition - it has achieved 'Super Human' levels'

04:44AM EDT - 'One of the big challenges is autonomous vehicles'

04:44AM EDT - 'Traditional CV approaches wouldn't ever work for auto'

04:45AM EDT - 'Speech recognition is one of the most researched areas in AI'

04:45AM EDT - 'Speech will not only change how we interact with computers, but what computers can do'

04:47AM EDT - Correction, Microsoft hit 6.3% error rate in speech recognition

04:47AM EDT - 'The English language is fairly difficult for computers to understand, especially in a noisy environment'

04:48AM EDT - 'Reminder, humans don't achieve 0% error rate'

04:48AM EDT - 'These three achievements are great: we now have the ability to simulate human brains: learning, sight and sound'

04:49AM EDT - 'The ability to perceive and the ability to learn are fundamentals of AI - we now have the three pillars to solve large-scale AI problems'

04:49AM EDT - 'NVIDIA invented the GPU, and 10 years ago we invented GPU computing'

04:50AM EDT - 'Almost all new high performance computers are accelerated, and NVIDIA is in 70% of them'

04:50AM EDT - 'Virtual Reality is essentially computing human imagination'

04:51AM EDT - 'Some people have called NVIDIA the AI Computing Company'

04:51AM EDT - JSH: 'We're still the fun computing company, solving problems, and most of the work we do is exciting for the future'

04:52AM EDT - 'Merging simulation, VR, AR, and powered by AI, and scenes like Tony Stark in Iron Man captures what NVIDIA is going after'

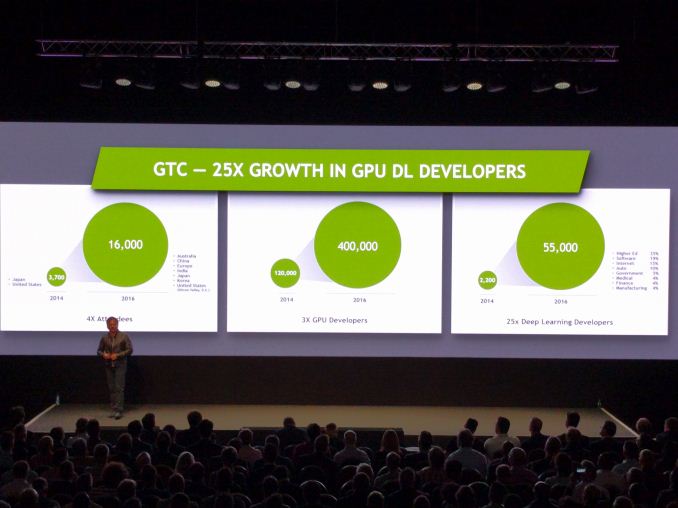

04:53AM EDT - GTC 2014 to 2016: 4x attendees, 3x GPU developers, 25x deep learning devs, moving from 2 events/year to 7 events/year worldwide

04:54AM EDT - The GPU devs and Deep Learning devs numbers are 'industry metrics', not GTC attendees

04:55AM EDT - 'I want the developers to think about the Eiffel Tower - an iconic image in Europe'

04:56AM EDT - 'The brain typically imagines an image of the tower - your brain did the graphics'

04:56AM EDT - 'The brain thinks like a GPU, and the GPU is like a brain'

04:57AM EDT - 'The computer industry has invested trillions of dollars into this'

04:57AM EDT - 'The largest supercomputer has 16-18000 NVIDIA Tesla GPUs, over 25m CUDA cores'

04:58AM EDT - 'GPUs are at the forefront of this'

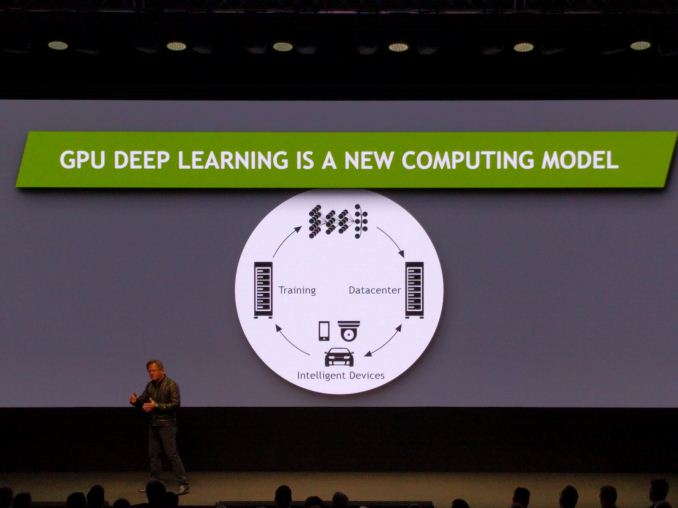

04:58AM EDT - 'GPU Deep Learning is actually a new model'

04:59AM EDT - 'Previously, software engineers write the software, QA engineers test it, and in production the software does what we expect'

04:59AM EDT - 'GPU Deep Learning is a bit different'

04:59AM EDT - 'Learning is important - a deep neural net has to gather data and learn from it'

05:00AM EDT - 'This is the computationally intensive part of Deep Learning'

05:00AM EDT - 'Then the devices infer, using the generated neural net'

05:00AM EDT - 'GPUs have enabled larger and deeper neural networks that are better trained in shorter times'

05:01AM EDT - 'A modern neural network has hundreds of hidden layers and learns a hierarchy of features'

05:01AM EDT - 'Our brain has the ability to do that. Now we can do that in a computer context'

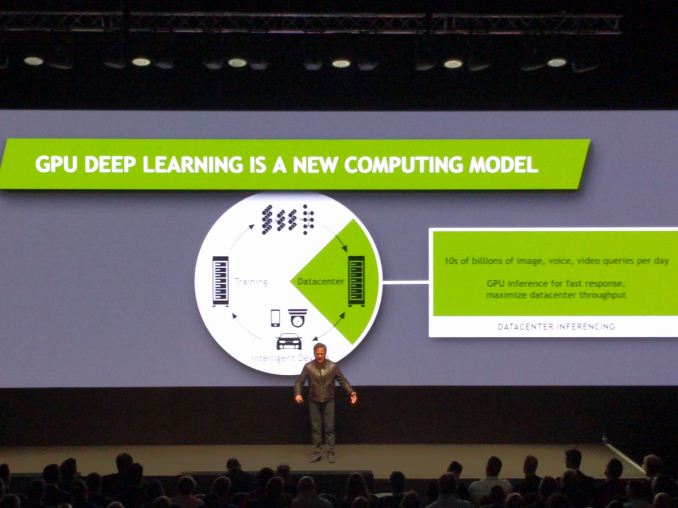

05:02AM EDT - 'The trained network is placed into data centers for cloud inferencing with large libraries to answer questions from its database'

05:02AM EDT - 'This is going to be big in the future. Every question in the future will be routed through an AI network'

05:03AM EDT - 'GPU inferencing makes response times a lot faster'

05:03AM EDT - 'This is the area of the intelligent device'

05:04AM EDT - 'This is the technology for IoT'

05:04AM EDT - 'AI is comparatively small coding with lots and lots of computation'

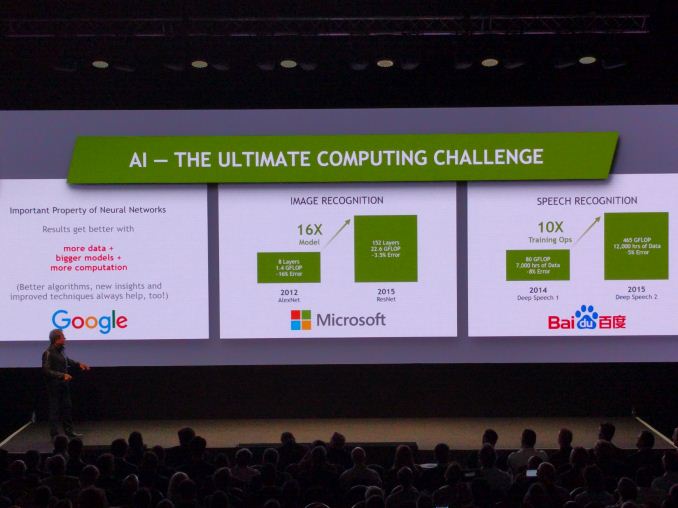

05:05AM EDT - 'The important factor of neural nets is that they work better with larger datasets and more computation'

05:05AM EDT - 'It's about the higher quality network'

05:05AM EDT - 2012 AlexNet was 8-layers, 1.4 GFlops, 16% error

05:06AM EDT - 2015 ResNet is 152 Layers, 22.6 GFlops for 3.5% error

05:06AM EDT - The 2016 winner has improved this with a network 4x deeper
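To put the AlexNet and ResNet slide figures side by side, here is a quick back-of-envelope in Python. It uses only the numbers quoted above; the variable names are mine, and the ratios are rough arithmetic rather than anything NVIDIA showed.

```python
# Figures as quoted on the keynote slide.
alexnet = {"layers": 8, "gflops": 1.4, "error_pct": 16.0}
resnet = {"layers": 152, "gflops": 22.6, "error_pct": 3.5}

# How much deeper and more expensive ResNet is than AlexNet.
depth_ratio = resnet["layers"] / alexnet["layers"]
compute_ratio = resnet["gflops"] / alexnet["gflops"]

# How much the error rate dropped over the same period.
error_ratio = alexnet["error_pct"] / resnet["error_pct"]

print(f"Depth: {depth_ratio:.0f}x, compute: {compute_ratio:.1f}x, error reduced {error_ratio:.1f}x")
```

In other words, roughly 16x the compute per image bought a little over a 4x reduction in error, which is the 'more computation, better network' point being made on stage.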

05:06AM EDT - Baidu in 2015, using 12k hours of data and 465 GFLOPs can do 5% speech recognition error

05:07AM EDT - 'This requires a company to push hardware development at a faster pace than Moore's Law'

05:07AM EDT - 'So NVIDIA thought, why not us'

05:08AM EDT - 'I want to dedicate my next 40 years to this endeavour'

05:08AM EDT - 'The rate of change for deep learning has to grow, not diminish'

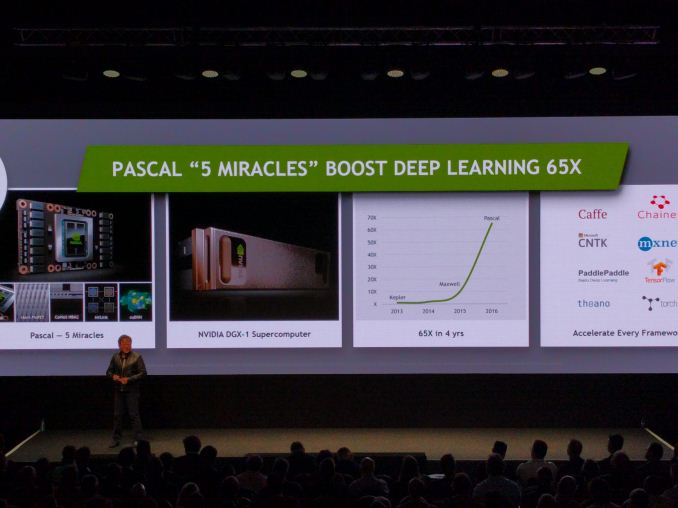

05:09AM EDT - 'The first customer of Pascal is an open lab called OpenAI, and their mission is to democratize the AI field'

05:10AM EDT - 'Our platform is so accessible - from a gaming PC to the cloud, supercomputer, or DGX-1'

05:11AM EDT - 'You can buy it, rent it, anywhere in the world - if you are an AI researcher, this is your platform'

05:11AM EDT - 'We can't slow down against Moore's Law, we have to hypercharge it'

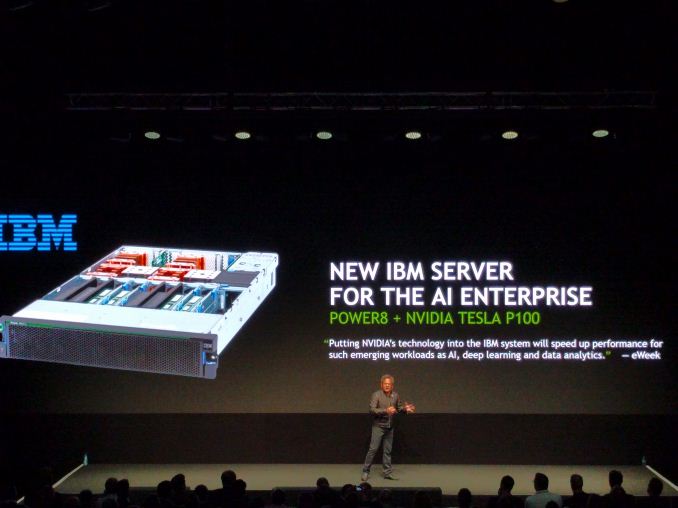

05:12AM EDT - 'We also have partners, such as IBM with cognitive computing services'

05:13AM EDT - 'We worked with IBM to develop NVLink to pair POWER8 with the NVIDIA P100 GPU to get a network of fast processors and fast GPUs, dedicated to solve AI problems'

05:13AM EDT - 'Today we are announcing a new partner'

05:13AM EDT - 'To apply AI for other companies worldwide'

05:14AM EDT - New partner is SAP

05:14AM EDT - 'We want to bring AI to companies around the world as one of the biggest AI hardware/software collaborations'

05:14AM EDT - 'We want SAP to be able to turbocharge their customers with Deep Learning'

05:15AM EDT - 'The research budget of DGX-1 was $2b, with 10k engineer years of work'

05:15AM EDT - 'DGX-1 is now up for sale. It's in the hands of lots of high impact research teams'

05:17AM EDT - 'We're announcing two designated research centers for AI research in Europe - one in Germany, one in Switzerland, with access to DGX-1 hardware'

05:17AM EDT - Now Datacenter Inferencing

05:18AM EDT - 'After the months and months of training of the largest networks, when the network is complete, it requires a hyperscale datacenter'

05:18AM EDT - 'The market goes into 10s of millions of hyperscale servers'

05:18AM EDT - 'With the right inference GPU, we can provide instantaneous solutions when billions of queries are applied at once'

05:19AM EDT - 'NVIDIA can allow datacenters to support a factor million more load without ballooning costs or power a million times'

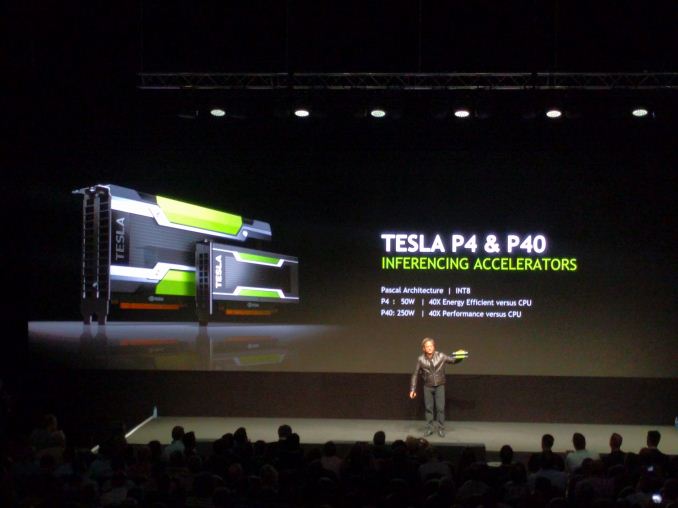

05:20AM EDT - 'NVIDIA recently launched the Tesla P4 and P40 inferencing accelerators'

05:20AM EDT - 'You can replace 3-4 racks with one GPU'

05:21AM EDT - Here's the link to our write-up of the P4/P40 news: http://www.anandtech.com/show/10675/nvidia-announces-tesla-p40-tesla-p4

05:21AM EDT - P4 at 50W, P40 at 250W, using Pascal and using INT8
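The slide only says that the P4 and P40 use INT8. As a rough illustration of what 8-bit inference involves, here is a minimal Python sketch of symmetric linear quantization. This is a generic textbook scheme and an assumption on my part, not NVIDIA's actual method; the function names and sample weights are hypothetical.

```python
def quantize_int8(values):
    """Map floats onto integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the 8-bit integers."""
    return [q * scale for q in quantized]


# Hypothetical weights, purely for illustration.
weights = [0.5, -1.0, 0.25, 0.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Each restored value is within half a quantization step of the original.
for original, approx in zip(weights, restored):
    print(f"{original:+.4f} -> {approx:+.4f}")
```

The trade is a small, bounded rounding error per value in exchange for 8-bit storage and arithmetic, which is where the power savings for inference would come from.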

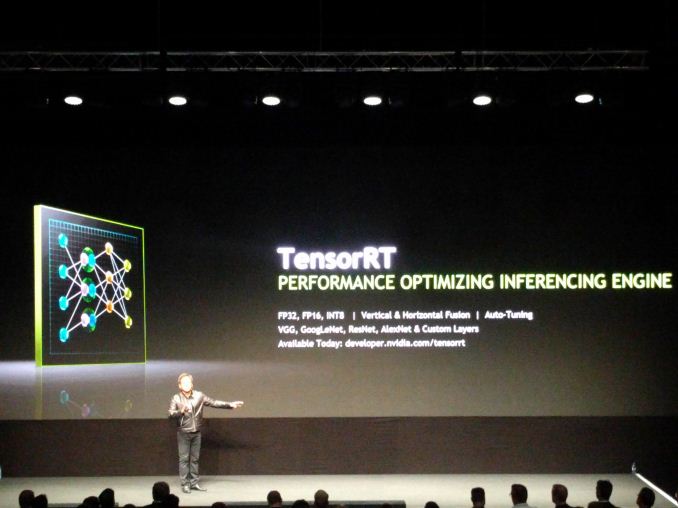

05:22AM EDT - Announcing TensorRT

05:22AM EDT - 'Software package that compiles, fuses operations and autotuning, optimizing the code to the GPU for efficiency'

05:22AM EDT - 'Support a number of networks today, plan to support all major networks in time'

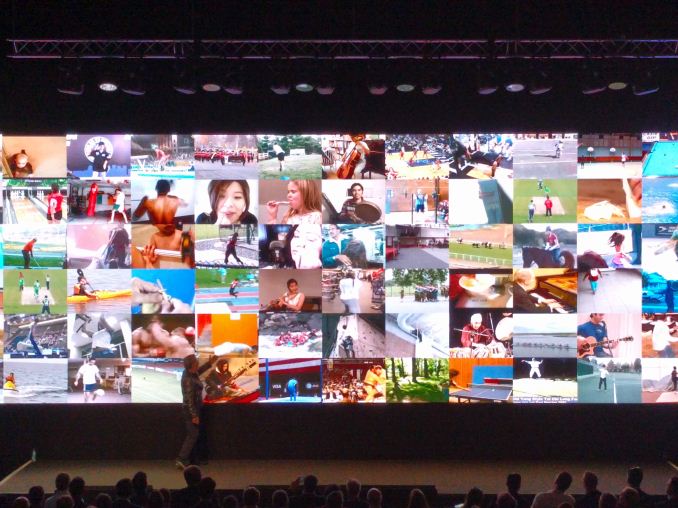

05:23AM EDT - 'Live video is the increasingly shared content of importance'

05:24AM EDT - 'It would be nice if, as you are uploading the live video, it would identify which of your viewers/family would be interested'

05:24AM EDT - 'AI should be able to do this'

05:24AM EDT - Example on stage of 90 streams at 720p30 running at once

05:25AM EDT - A human can do this relatively easily, but a bit slowly

05:25AM EDT - 'A computer can learn from the videos what is happening and assign relevant tags'

05:26AM EDT - 'The future needs the ability to filter based on tags generated from AI live video as it is being generated'
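For a sense of scale on the 90-stream demo, here is a quick calculation of the raw frame and pixel rates involved. It assumes 720p means 1280x720, which was not stated on stage, and it ignores decoding and the network itself.

```python
# 720p30 as quoted on stage; the 1280x720 resolution is an assumption.
width, height = 1280, 720
fps = 30
streams = 90

frames_per_second = streams * fps
pixels_per_second = frames_per_second * width * height

print(f"{frames_per_second} frames/sec, {pixels_per_second / 1e9:.2f} gigapixels/sec")
```

That is 2700 frames every second that would need classifying to keep all 90 streams tagged live.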

05:28AM EDT - 'We trained a network to determine the style of various paintings, and repaint it in a different style'

05:29AM EDT - 'We want to be able to do it live'

05:29AM EDT - redrawing every single frame

05:30AM EDT - 'The neural network generates new art'

05:30AM EDT - 'frame by frame'

05:31AM EDT - 'Applications for deep learning are clearly very broad'

05:32AM EDT - 'Today, 2000 active deep learning implementations are in use for customers'

05:33AM EDT - 'Partners are ready to configure servers with Deep Learning embedded'

05:35AM EDT - 'ODMs and Server Builders can configure a Deep Learning system for any customer'

05:36AM EDT - Deep Learning for advertising is interesting. 'Adding ads to live video based on viewer preferences'

05:37AM EDT - 'AI Startups or startups using deep learning are everywhere, in lots of diverse fields'

05:38AM EDT - 'Benevolent.ai is the first Europe customer of DGX-1'

05:38AM EDT - 'They can take what used to take a year, and now complete it in a week or two'

05:40AM EDT - 'not all faces are straight on to the camera, so AI can tell if things have changed (hair, age, emotion)'

05:40AM EDT - 'Deep learning can be applied for intelligent voice, to detect if someone is lying on an insurance claim or similar'

05:40AM EDT - 'Deep Learning can detect plastic in waste that is suitable for recycling'

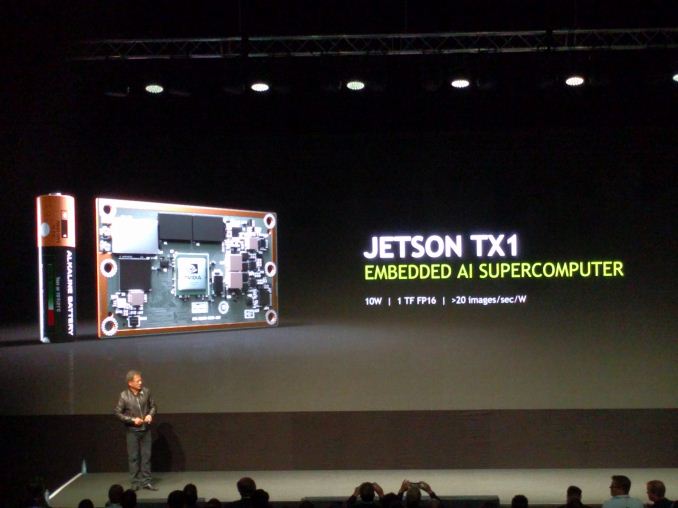

05:41AM EDT - Now inferencing for devices

05:41AM EDT - 'Intelligent Devices, or what people call IoT'

05:42AM EDT - 'Only our imagination limits what the devices are'

05:42AM EDT - >This is the usual thing for companies focusing on AI hardware - leave the 'killer device' problem to others and just provide the hardware during development.

05:43AM EDT - 'Internet connected, intelligent machines, that have the capability of AI, are the future'

05:44AM EDT - Mentioning Jetson TX1, >20 images/sec/W in a 10W platform (adjust as required)

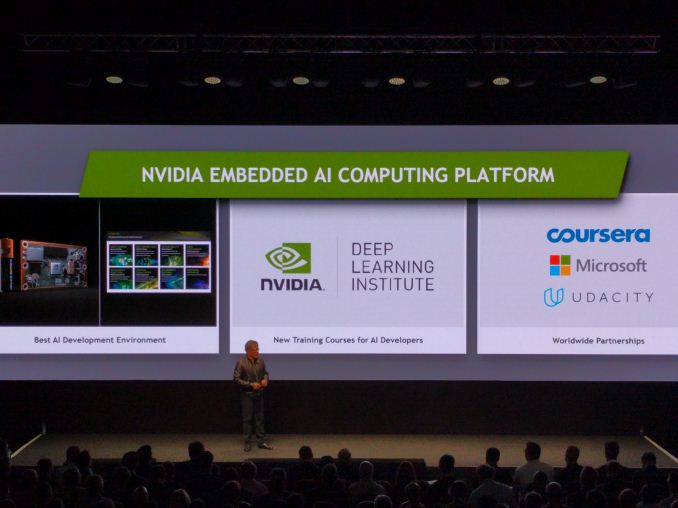

05:45AM EDT - 'We're starting an institute for applying deep learning - the NVIDIA Deep Learning Institute'

05:45AM EDT - 'NVIDIA DLI places always sell out, so we're expanding the reach'

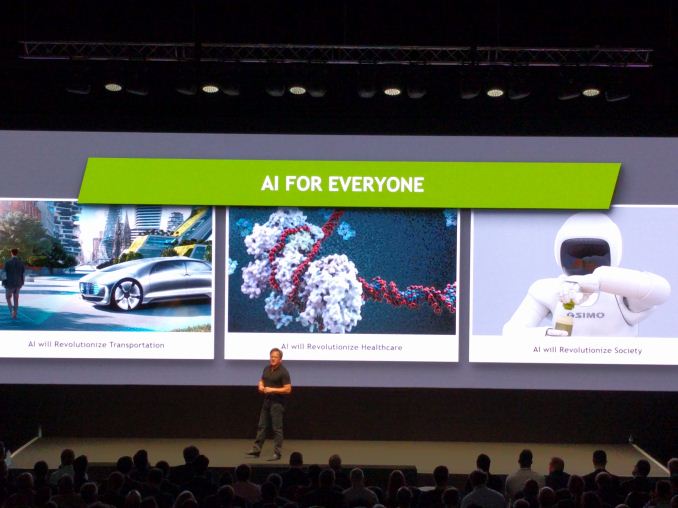

05:46AM EDT - 'AI Transportation is a $10T industry: safety, accessibility and ease of use'

05:46AM EDT - 'Autonomous vehicles is not just about smart sensors - it's a compute problem'

05:48AM EDT - 'AI Auto has to perceive, reason, understand, plan, and apply'

05:49AM EDT - 'NVIDIA has jumped in with both feet to create a scalable platform for the Auto industry'

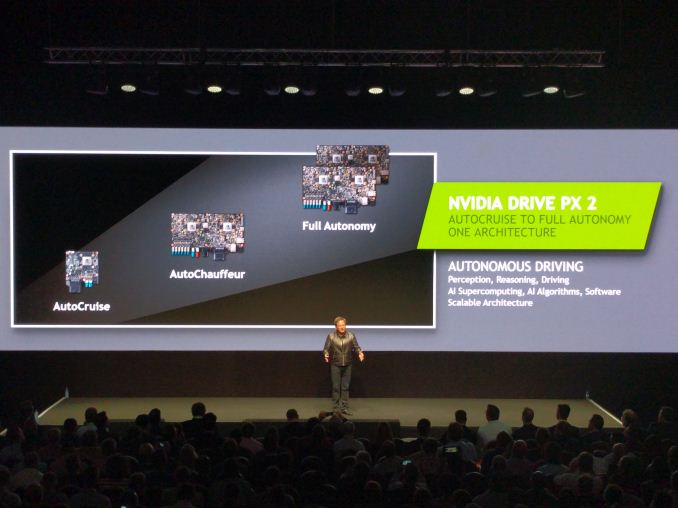

05:49AM EDT - 'Scalability means different segments of the industry have different visions for autonomous vehicles'

05:50AM EDT - Cruise hardware vs. auto chauffeur vs full autonomy

05:51AM EDT - >I just had a thought, it'd be interesting as to what Taxi drivers think about this. If people can just say 'take me home'...

05:51AM EDT - 'Drive PX 2 is perception, reasoning, driving, AI processing, algorithms and software with scalability'

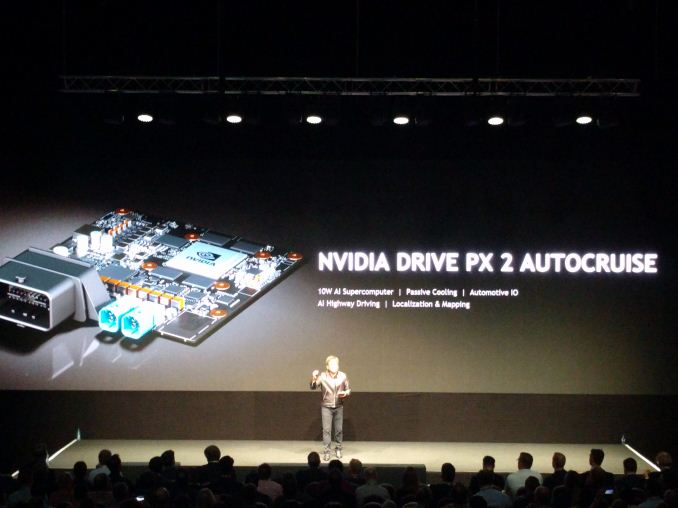

05:52AM EDT - 'When we first showed off PX2, it was the large full-fat version as that's what the big customers wanted'

05:53AM EDT - 'Drive PX 2 Auto Cruise connects to two cameras and identifies a HD map, at 10W'

05:54AM EDT - 'It's a HD map all the way to the cloud and supercomputer'

05:54AM EDT - 'Baidu selected Drive PX 2 for their self-driving cars - DriveWorks is connected to the cloud'

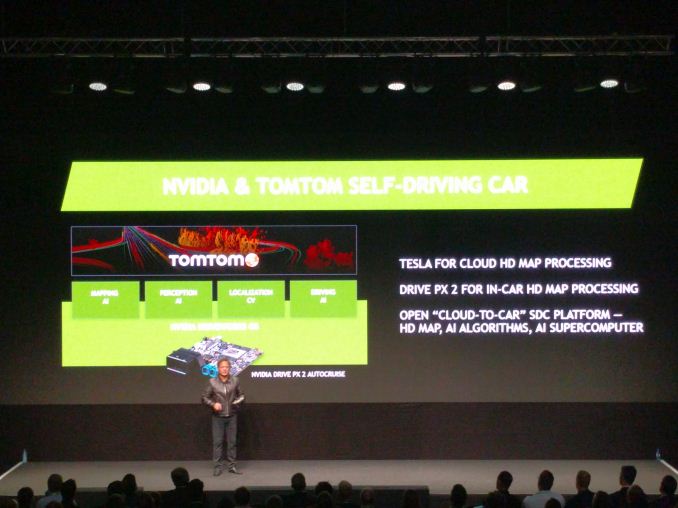

05:55AM EDT - 'NVIDIA is announcing a partnership with TomTom as a mapping partner'

05:56AM EDT - 'Tesla for cloud HD map processing, Drive PX 2 for in-car processing, open cloud-to-car platform using AI'

05:56AM EDT - 'Has to be accurate, coherent, and accurate within a few centimeters'

05:56AM EDT - 'We want to continuously crunch data as it is recorded'

05:57AM EDT - Alain De Taeye from TomTom on stage

05:58AM EDT - 'We've mapped 70% of society, 47 million miles'

05:59AM EDT - 'we have basic information, but we need to make accurate HD maps in an affordable way'

06:00AM EDT - 'TomTom gets 7 billion traces a day which can be processed'

06:00AM EDT - 'We're 120k miles into the 60million mile challenge for HD maps'

06:01AM EDT - 'We need to process video from the cars in super-real time using AI'

06:01AM EDT - 'Using the car to maintain the HD map is the holy grail'

06:01AM EDT - 'Self-driving cars require an accurate HD map'

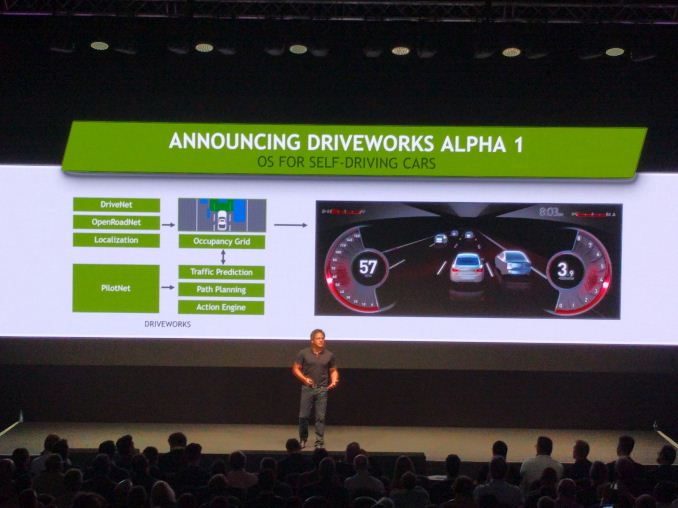

06:02AM EDT - Announcing DriveWorks Alpha 1, an OS for self-driving cars

06:03AM EDT - 'the ultimate real-time supercomputing problem'

06:03AM EDT - ''I tried' is not an acceptable answer for self-driving cars'

06:04AM EDT - 'The OS uses three neural networks for different things'

06:05AM EDT - DriveNet, OpenRoadNet and PilotNet

06:06AM EDT - >Just had a thought. HD maps means identifying individual trees and triangulating position on a road for self-driving based on an internal schematic, and updating it if there's weather etc. That's important

06:06AM EDT - 'Driving is a behaviour, like playing tennis, it's somewhat automatic and by reflex'

06:07AM EDT - 'PilotNet is a behaviour network'

06:08AM EDT - 'The occupancy grid is tested against what PilotNet wants to do, based on everything it has been taught and also future predictions'

06:08AM EDT - 'We need to continually test what we see with what the car sees and how the AI car reacts'

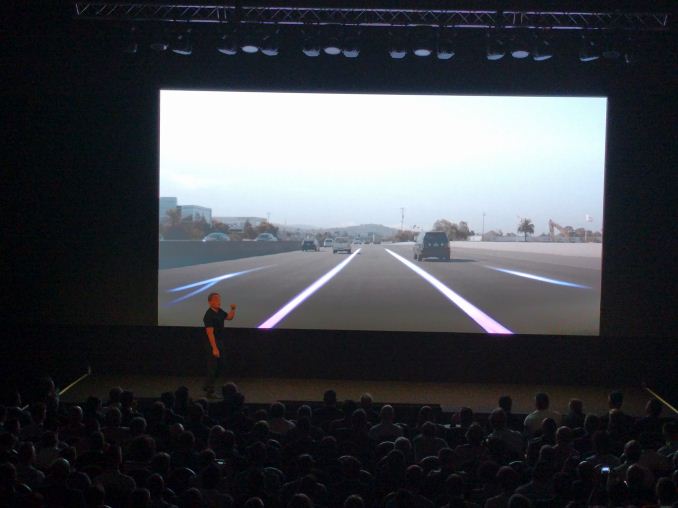

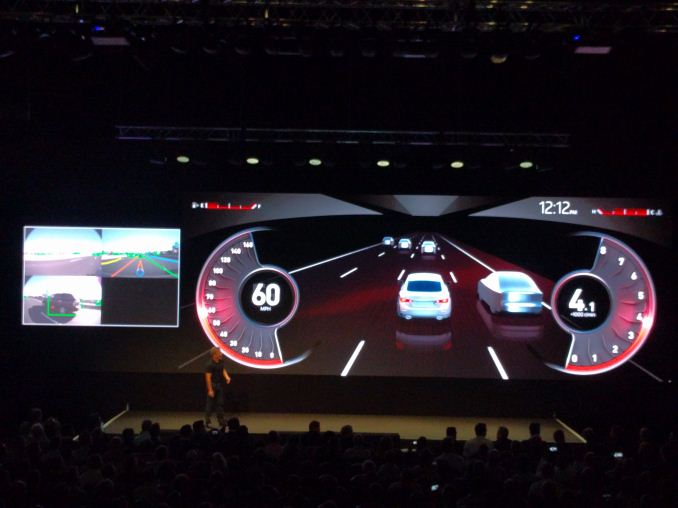

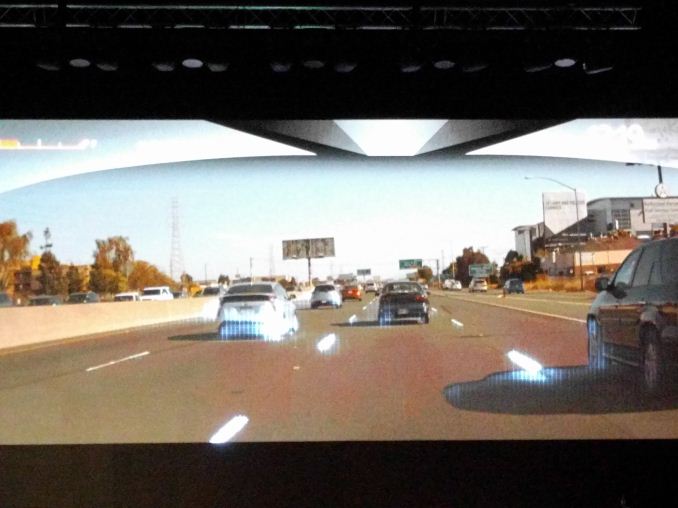

06:09AM EDT - Videos showing a couple of demos

06:09AM EDT - Detecting objects on a front facing vehicle camera

06:10AM EDT - 'Where other cars are, where the lanes are, where it is safe to drive - all done via neural network inferencing'

06:10AM EDT - 'Where is it ok to drive is more important than where you need to drive - it keeps it safe'

06:12AM EDT - 'The occupancy grid combines the sensor data and HD maps to generate its own 3D map of where the vehicle is'
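No implementation details were given for the occupancy grid, so the following is only a toy sketch of the idea as described: merge what the map says with what the sensors see, then check a proposed path against the result. It is 2D rather than 3D for brevity, and every name, coordinate and data structure here is hypothetical rather than anything from DriveWorks.

```python
def build_occupancy_grid(width, height, map_obstacles, sensor_detections):
    """Mark a cell occupied if either the map or the live sensors report something there."""
    grid = [[False] * width for _ in range(height)]
    for x, y in list(map_obstacles) + list(sensor_detections):
        if 0 <= x < width and 0 <= y < height:
            grid[y][x] = True
    return grid


def path_is_clear(grid, path):
    """Return True only if every (x, y) cell on the path is inside the grid and free."""
    return all(
        0 <= y < len(grid) and 0 <= x < len(grid[0]) and not grid[y][x]
        for x, y in path
    )


# Hypothetical scene: two obstacles known from the map, one seen by the sensors.
grid = build_occupancy_grid(
    5, 5, map_obstacles=[(0, 0), (4, 4)], sensor_detections=[(2, 2)]
)

straight_ahead = [(2, 0), (2, 1), (2, 2)]  # runs into the sensed obstacle
swerve = [(2, 0), (3, 1), (3, 2)]          # steers around it

print(path_is_clear(grid, straight_ahead))
print(path_is_clear(grid, swerve))
```

The point of the check is the one made on stage: knowing where it is safe to drive comes first, and whatever the behaviour network proposes is tested against that.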

06:13AM EDT - 'Caffeine is the fuel of deep learning engineers'

06:15AM EDT - 'When we're driving, we don't do calculus, but the AI has to'

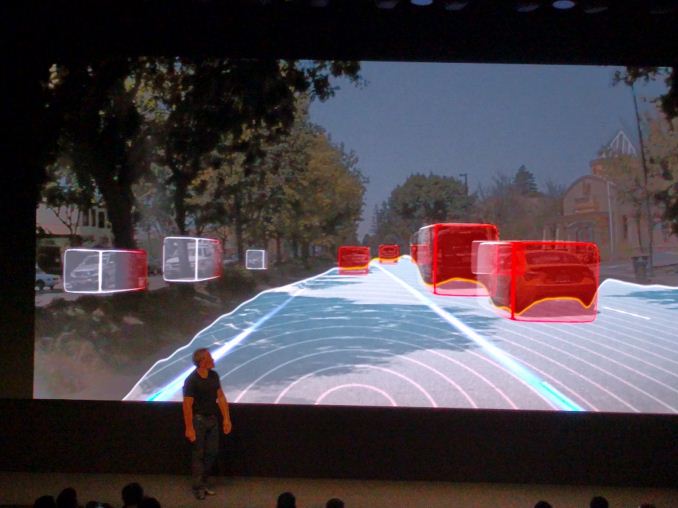

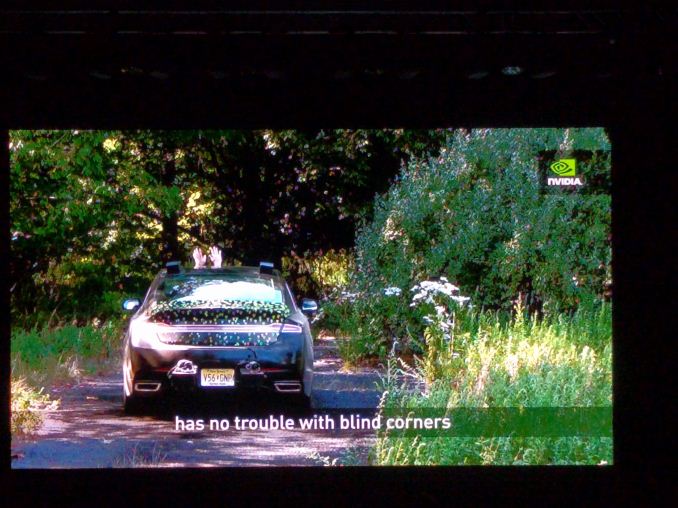

06:16AM EDT - 'NVIDIA BB8 is a car without any prior knowledge, the idea of imitating the driver'

06:17AM EDT - 'We don't have to describe equations, the car will generalise driving behaviour by repeated sampling'

06:18AM EDT - 'Will leave the road to remain safe'

06:19AM EDT - 'BB8 learned how to drive like us'

06:20AM EDT - The sparkles are BB8's neurons firing regarding information that it thinks is important to its behaviour

06:20AM EDT - 'It learned to stay in the middle of the lane, in the middle of the road, a road in the dark etc'

06:20AM EDT - 'It learned that we don't drive over bushes'

06:21AM EDT - 'DriveWorks is an open platform for OEMs and car companies to pick and choose the bits they want/need for their solutions'

06:22AM EDT - 'today's announcement is that Alpha 1 is being launched to top tier partners in October with updates every two months'

06:22AM EDT - 'NVIDIA has many AI self-driving cars in development with different partners'

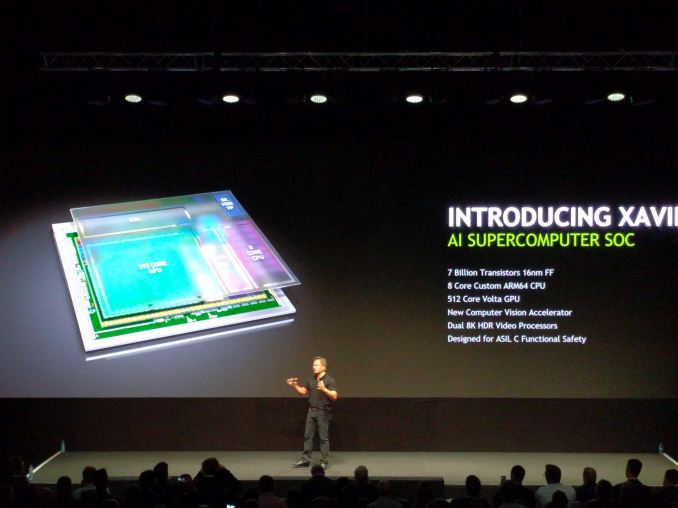

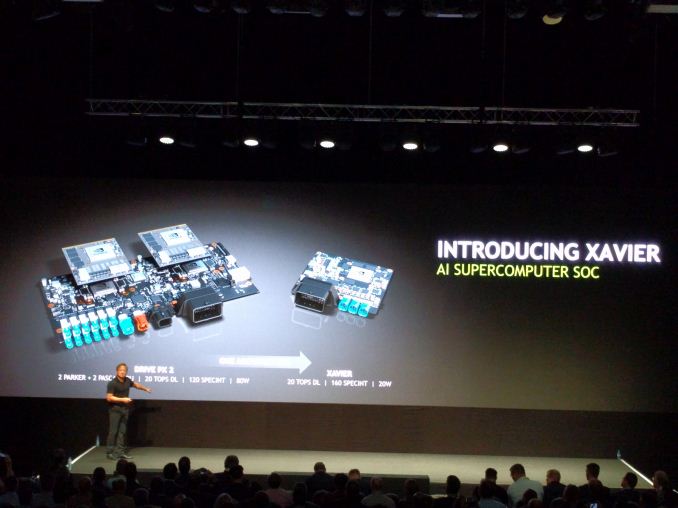

06:24AM EDT - Announcing Xavier

06:25AM EDT - AI Supercomputer SoC

06:25AM EDT - 8-core custom ARM CPU, 512-core Volta, dual 8K video processors, New CV accelerator

06:25AM EDT - 7 billion transistors, 16nm FF+

06:25AM EDT - No perf numbers... ?

06:26AM EDT - Doesn't say Denver cores, so next-gen Denver with Volta?

06:26AM EDT - Sampling Xavier in Q4 2017

06:27AM EDT - Xavier does 20 TOPS DL, 160 SPECINT in 20W

06:28AM EDT - It's so far out, over 12 months mind

06:28AM EDT - So, HotChips 2017 might show off some new uArch details, just like Denver2 this year

06:30AM EDT - Ryan thinks 1 TOPS/W is going to be a tough target
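The 1 TOPS/W figure falls straight out of the quoted Xavier numbers. Here is the arithmetic in Python, alongside the Jetson TX1 claim from earlier in the keynote. Note the two use different metrics (TOPS versus images/sec), so they are not directly comparable; the variable names are mine.

```python
# Xavier figures as quoted on stage.
xavier_tops = 20
xavier_watts = 20
xavier_tops_per_watt = xavier_tops / xavier_watts

# Jetson TX1 figures as quoted earlier: more than 20 images/sec/W in a 10 W platform.
tx1_images_per_sec_per_watt = 20
tx1_watts = 10
tx1_min_images_per_sec = tx1_images_per_sec_per_watt * tx1_watts

print(f"Xavier: {xavier_tops_per_watt:.1f} TOPS/W")
print(f"Jetson TX1: at least {tx1_min_images_per_sec} images/sec")
```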

06:30AM EDT - Looks like that's a wrap for the keynote. I think there's some Q&A with JHH now for the press. Time to ask questions

40 Comments

Qwertilot - Wednesday, September 28, 2016 - link

Taxi drivers? They're simply going to be out a job en masse. Along with lorry drivers etc.

It's an objectively significant net gain for the human race, but it will take out an awful lot of existing jobs.

SunnyNW - Wednesday, September 28, 2016 - link

The taxi business is already facing hard times with Uber, Lyft, and the like entering into the picture. Autonomous vehicles will be the end of the taxi driver but not the taxi business. Uber will gladly continue providing rides just without the human component.

TheinsanegamerN - Wednesday, September 28, 2016 - link

Much like train drivers, I doubt that trucks will ever be totally driver-less, even if remote control is possible. There are just too many variables for people to trust self driving vehicles. The multiple (overblown) tesla S crashes are not helping things.

Plus, all it takes is a single hack causing massive crashes, and all trust of self driving goes out the window for 10 years. And given the car industry's current record for safety in this area, I dont have high hopes.

Meteor2 - Wednesday, September 28, 2016 - link

There are driverless trains in the UK.

tamalero - Wednesday, September 28, 2016 - link

Theres still people controlling them on a remote center, dont they?

Meteor2 - Thursday, September 29, 2016 - link

There are signallers, yes, but the trains themselves are autonomous -- they respond to the signals, with the computer controlling accelerating and braking. It's much safer.

Jeanniev - Wednesday, October 5, 2016 - link

No.

at80eighty - Wednesday, September 28, 2016 - link

this analogy would make sense if the environment conditions they operate in were similar; which are basically incomparable

nathanddrews - Wednesday, September 28, 2016 - link

Autonomous vehicles will most certainly bring a benefit of saving many, many lives - both those that cause crashes and those impacted by them. In dense urban areas, where people have no space for cars (Manhattan, for example), taxi companies will continue to exist, but the drivers themselves will likely become more like chauffeurs, opening doors, helping with luggage, becoming more high end like Lyft or Uber. Personally, I'm hoping for Johnny Cab.

More than that, however, I believe that this will reignite the passion for the open road that we first saw after World War II. A two or three day road trip is now a one day road trip. If your car could drive you anywhere and you could sleep, read, or watch Netflix while it does that - where would you go? Visit family or friends more often that are 5-10 hours away? Leave at bedtime and arrive in the morning! Tour the national parks, see the Grand Canyon. I could see domestic travel and tourism rise significantly. Shorter domestic flights might suffer a bit. A flight from Chicago to Minneapolis is about 5 hours after factoring the commute to and from the airport, security lines, and typical delays. You could drive it in 6 for less money and be significantly more comfortable while doing so.

jwcalla - Wednesday, September 28, 2016 - link

The best part of having a car is driving it.

I like the idea of everyone else having self-driving cars though.