G.Skill Phoenix Blade (480GB) PCIe SSD Review

by Kristian Vättö on December 12, 2014 9:02 AM EST

G.Skill hasn't been a very visible SSD OEM lately. Like many DRAM module companies, G.Skill entered the market early around 2009 when the market was very immature and profits were high, but lately the company has more or less been on a hiatus from the market. Even though G.Skill has had an SF-2281 based Phoenix III in the lineup for quite some time, it never really did anything to push the product and a Google search yields zero reviews for that drive (at least from any major tech review outlet).

However, back at Computex this year G.Skill showcased a prototype of its next generation SSD: the Phoenix Blade. Unlike most SSDs on the market, the Phoenix Blade utilizes a PCIe 2.0 x8 interface, but unfortunately it is not the native PCIe drive many of you have been waiting for. It is driven by four SandForce SF-2282 controllers in a RAID 0 configuration. It makes sense for G.Skill to pursue the ultra high-end niche because the SATA SSD market is currently extremely populated. It ends up being close to impossible for the smaller OEMs to compete against giants like Samsung, SanDisk and Crucial/Micron while being profitable, since ultimately the NAND manufacturers that are vertically integrated will always have a cost advantage.

| G.Skill Phoenix Blade Specifications | |
| --- | --- |
| Capacity | 480GB |
| Form Factor | Half-Height, Half-Length (HHHL) |
| Interface | PCI Express 2.0 x8 |
| RAID Controller | SBC 208-2 |
| NAND Controller | 4x SandForce SF-2282 |
| NAND | Toshiba 64Gbit 19nm MLC |
| Sequential Read | Up to 2000MB/s |
| Sequential Write | Up to 2000MB/s |
| 4KB Random Read | Up to 90K IOPS |
| 4KB Random Write | Up to 245K IOPS |
| Power Consumption | 8W (idle) / 18W (max) |
| Encryption | AES-128 |
| Endurance | 1536TB (~1.4TB per day) |
| Warranty | Three years |

At this moment the Phoenix Blade is only available in 480GB capacity, although G.Skill plans to add a 960GB model later. 480GB is a logical choice because for the target group 240GB would be too small in many cases and doesn't provide the same level of performance, whereas the cost of the 960GB would push most shoppers away. In the end, G.Skill hasn't been actively involved in SSDs recently, so doing a 'soft' launch and observing the market's reaction is a safe strategy.

The interesting spec is the endurance and it's not a typo. G.Skill is indeed rating the Phoenix Blade at 1,536TB, which translates to about 1.4TB of writes per day for three years (1,536TB spread over 1,095 days). I asked G.Skill about the rating method and I was told it's simply raw NAND capacity multiplied by the number of P/E cycles, which is then divided by the average write amplification. G.Skill assumes an average write amplification of 1x due to SandForce's real-time data compression, so 512GB*3,000/1 yields 1,536TB. As G.Skill's SSD venture is solely consumer focused, it has no reason to artificially limit the endurance to boost its enterprise SSD sales like many vendors do, although I am concerned whether the Phoenix Blade has been fully validated for workloads that write over a terabyte of data per day.
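The rating method G.Skill described is easy to reproduce. The following is a minimal sketch of that arithmetic, not G.Skill's own tooling: the function names are made up for illustration, a 365-day year is assumed, and the 2x write amplification in the last line is a hypothetical value rather than a measurement.

```python
def rated_endurance_tb(raw_nand_gb, pe_cycles, write_amplification):
    """Total host writes in TB: raw NAND capacity x P/E cycles / write amplification."""
    return raw_nand_gb * pe_cycles / write_amplification / 1000  # GB -> TB (decimal)


def writes_per_day_tb(endurance_tb, warranty_years, days_per_year=365):
    """Average daily writes (TB) that would use up the rating over the warranty period."""
    return endurance_tb / (warranty_years * days_per_year)


# Figures from the review: 512GB raw NAND, 3,000 P/E cycles, assumed 1x write amplification.
endurance = rated_endurance_tb(512, 3000, 1.0)
per_day = writes_per_day_tb(endurance, 3)

print(f"Rated endurance: {endurance:.0f}TB")
print(f"Writes per day over three years: {per_day:.2f}TB")

# Hypothetical case: data that compresses poorly raises write amplification,
# and the same formula then yields a proportionally lower figure.
print(f"At 2x write amplification: {rated_endurance_tb(512, 3000, 2.0):.0f}TB")
```

The sketch makes the sensitivity of the rating obvious: the result scales inversely with whatever write amplification is assumed, so the 1x assumption carries most of the weight behind the headline number.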

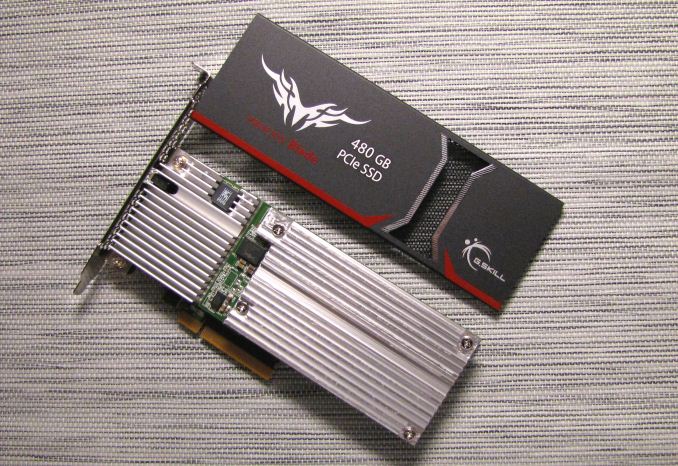

Delving into the Phoenix Blade reveals a massive metal heatsink that covers nearly the whole PCB (or both PCBs, actually). There's plenty to cool since each SF-2282 can draw up to ~4W under load plus at least another couple of watts for the RAID controller, which results in a maximum power rating of 18W according to G.Skill's data sheet.

Taking off the heatsinks reveals the main PCB as well as the daughterboard. Each board is home to two SF-2282 controllers, and each controller is connected to eight dual-die 16GiB NAND packages (i.e. 32*16GiB=512GiB of NAND in total).
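The NAND layout can be tallied from the figures in the spec table and the board description. This is only a rough sketch: the constant names are invented for this example, and the spare-area line treats the 480GB user capacity and the raw NAND total as if they were in the same unit, which is a simplification.

```python
GBIT_PER_DIE = 64            # Toshiba 19nm MLC die density from the spec table
DIES_PER_PACKAGE = 2         # dual-die packages
PACKAGES_PER_CONTROLLER = 8
CONTROLLERS = 4              # two on the main PCB, two on the daughterboard
USER_CAPACITY = 480          # advertised capacity (unit simplification, see above)

# 8 bits per byte: two 64Gbit dies make one 16GiB package.
package_capacity = GBIT_PER_DIE * DIES_PER_PACKAGE // 8
per_controller = package_capacity * PACKAGES_PER_CONTROLLER
total_packages = PACKAGES_PER_CONTROLLER * CONTROLLERS
raw_capacity = package_capacity * total_packages

# Nominal spare area: raw NAND that is not exposed to the user.
spare = raw_capacity - USER_CAPACITY
spare_share = spare / raw_capacity

print(f"Package capacity: {package_capacity}")
print(f"NAND per controller: {per_controller}")
print(f"Total packages: {total_packages}")
print(f"Raw NAND: {raw_capacity}")
print(f"Nominal spare area: {spare} ({spare_share:.2%} of raw)")
```

Seen this way, each of the four controllers manages a quarter of the raw NAND, and the RAID 0 stripe presents the combined user capacity as a single 480GB volume.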

The RAID controller in the Phoenix Blade is a complete mystery. Googling the part number doesn't shed any light on the situation, and due to confidentiality agreements G.Skill is tight-lipped about any details regarding the controller. My best guess is that the controller features firmware from SBC Designs with the actual silicon coming from another vendor.

Update 12/13: It turns out that the controller actually has nothing to do with SBC Designs: it comes from Comay, a brand used by CoreRise, a Chinese SSD manufacturer. In fact, the Phoenix Blade looks a lot like CoreRise's Comay BladeDrive G24, so I wouldn't be surprised if G.Skill was sourcing the drives from CoreRise and rebranding them (nearly all memory vendors do this -- very few do the manufacturing in-house). I'm still inclined to believe that the silicon is from a third party, as CoreRise's product lineup suggests that the company doesn't have the expertise needed for semiconductor design and development, but the firmware is likely unique to CoreRise.

The Phoenix Blade is bootable in any system. Upon boot, the drive loads legacy drivers before the BIOS and hence it can be selected as a boot device just like any other drive. Loading the drivers adds a few seconds to the boot time, but other than that the Phoenix Blade behaves like a normal SATA drive (but with much higher rated peak performance). TRIM and SCSI unmap are also supported.

While not easily visible in the photo due to residue from thermal pads, the Phoenix Blade uses the new B2 stepping of the SF-2282 controller. The fundamental design of the controller has remained unchanged, but the new stepping introduces DevSleep support for improved power efficiency, although in this case DevSleep brings no real added value.

| Test System | |
| --- | --- |
| CPU | Intel Core i5-2500K running at 3.3GHz (Turbo & EIST enabled) |
| Motherboard | ASRock Z68 Pro3 |
| Chipset | Intel Z68 |
| Chipset Drivers | Intel 9.1.1.1015 + Intel RST 10.2 |
| Memory | G.Skill RipjawsX DDR3-1600 4 x 8GB (9-9-9-24) |
| Video Card | Palit GeForce GTX 770 JetStream 2GB GDDR5 (1150MHz core clock; 3505MHz GDDR5 effective) |
| Video Drivers | NVIDIA GeForce 332.21 WHQL |
| Desktop Resolution | 1920 x 1080 |
| OS | Windows 7 x64 |

Thanks to G.Skill for the RipjawsX 32GB DDR3 DRAM kit

62 Comments

Duncan Macdonald - Friday, December 12, 2014 - link

How does this compare to 4 240GB Sandforce SSDs in software RAID 0 using the Intel chipset SATA interfaces?

Kristian Vättö - Friday, December 12, 2014 - link

Intel chipset RAID tends not to scale that well with more than two drives. I have to admit that I haven't tested four drives (or any RAID 0 in a while) to fully determine the performance gains, but it's safe to say that the Phoenix Blade is better than any Intel RAID solution since it's more optimized (specific hardware and custom firmware).

nathanddrews - Friday, December 12, 2014 - link

Sounds like you just set yourself up for a Capsule Review.

Havor - Saturday, December 13, 2014 - link

I don't get the high praise of this drive. Sure, it has value for people that need high sequential speed, or people that use it to host a database on a budget that have tons of requests and can utilize high QD; all others are better off with a SATA SSD that performs much better with a QD of 2 or less.

As desktop users almost never go over QD2 in real world use, they would be much better off with a 8x0 EVO or so, both performance wise and price wise.

I am actually one of the few that could use the drive, if i had space for it (running quad SLI), as i use a RAMdrive, and copy programs and games that are stored on the SSD in a RAR file, through a script, from a R0 set of SSDs to the RAMdisk, so high sequential speed is king for me.

But i count myself in the 0.1% of nerds that does things like that because i like doing stuff like that; any other sane person would just use a SSD to run its programs off.

Integr8d - Sunday, December 14, 2014 - link

The typical self-centered response: "This product doesn't apply to me. So I don't understand why anyone else likes it or why it should be reviewed," followed by, "Not that my system specs have ANYTHING to do with this, but here they are... 16 video cards, raid-0 with 16 ssd's, 64TB ram, blah blah blah..." They literally just look for an excuse to brag...

It's like someone typing a response to a review of Crest toothpaste. "I don't really know anything about that toothpaste. But I saw some, the other day, when I went to the store in my 2014 Dodge Charger quad-Hemi supercharged with Borla exhaust, 20" BBS with racing slicks, HID headlights, custom sound system, swimming pool in the trunk and with wings on the side so I can fly around."

It's comical.

dennphill - Monday, December 15, 2014 - link

Thanks, Integ8d, you put a smile on my face this morning! My feelings exactly.

pandemonium - Tuesday, December 16, 2014 - link

Hah. Nicely done, Integr8d.

alacard - Friday, December 12, 2014 - link

The DMI interface between the chipset and the processor maxes out at about 1800~1850MB/s and this bandwidth has to be split between all the devices connected to the PCH which also incorporates an 8x pci 2.0 link. Simply put, there's not enough bandwidth to go around with more than two drives attached to the chipset in raid, not to mention that the scaling beyond 2 drives is fairly bad in general through the PCH even when nothing else is going on. And to top it all off 4k performance is usually slightly slower in Raid than on a single SSD (ie it doesn't scale at all).

I know Tomshardware had an article or two on this subject if you want to google it.

personne - Friday, December 12, 2014 - link

It takes three SSDs to saturate DMI. And 4k writes are nearly double on long queue depths. So you get more capacity, higher cost, and much of the performance benefit for many operations. Certainly tons more than a single SSD at a linear cost. If you research your statements.

alacard - Friday, December 12, 2014 - link

To your first point about saturating DMI, we're in agreement. Reread what i said.

To your second point about 4k, you are correct but i've personally had three separate sets of RAID 0 on my performance machine (2 vertex 3s, 2 vertex 4s, 2 vectors), and i can tell you that those higher 4k results were not impactful in any way when compared to a single SSD. (Programs didn't load faster for instance.)

http://www.tomshardware.com/reviews/ssd-raid-bench...

That leaves me curious as to what you're doing that allows you to get the benefits of high queue depth RAID0? What's your setup, what programs do you run? I ask because for me it turned out not to be worth the bother, and this is coming from someone who badly wanted it to be. In the end the higher low queue depth 4k of 1 SSD was a better option for me so i switched back.

http://www.hardwaresecrets.com/article/Some-though...