Intel’s 2011 Investor Meeting - Intel’s Architecture Group: 14nm Airmont Atom In 2014

by Ryan Smith on May 17, 2011 6:27 PM EST

Today Intel is holding their annual investors meeting at their Santa Clara headquarters. In true Intel fashion it’s being treated as a big event (ed: it’s so big they ran out of lunch), as this is the company’s primary vehicle for addressing the investors holding their 125 billion dollars in shares; in essence it’s a reprise of their current and future technology plans as a pep-talk for investors. As such it’s not really a technical event, but it’s not uncommon for a few new technical details to shake out during the presentations.

There are a number of presentations throughout the day, including keynotes from Paul Otellini, and presentations from a number of Intel groups including the architecture group, the data center group, and the manufacturing group. If something is going to shake out it’s bound to be the architecture group, so this is where we’ll start.

Atom/SoC

A big part of the architecture group’s discussion focused on Atom/SoC. The 32nm Medfield Atom is due this year, so Intel has been laying out their plans for what they’re going to be doing with Medfield. Unsurprisingly, a big push with Medfield is to break into the SoC space in a way that Moorestown could not. Intel never managed any major design wins for smartphones with Moorestown, which is something they want to correct with Medfield. To that end Intel has been showing off Medfield concept phones to demonstrate to investors that it’s a viable product and to try to drum up support.

Intel's Medfield Concept Phone

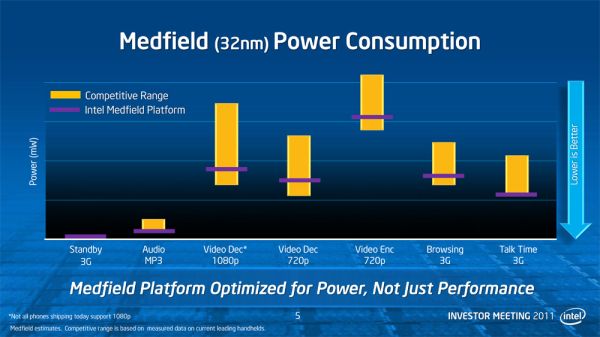

Intel is also spending some effort to dispel the idea that they can’t be competitive on a power consumption basis; in fact for the amount of effort they put into that message and the number of times they repeated it, they seem to be seriously concerned about being perceived as uncompetitive. Intel did some power consumption testing on Medfield and put together a slide showing their findings that Medfield is competitive with some current leading phones, but as always take this with a pinch of salt. Ultimately this is a comparison of 32nm Medfield with 4Xnm ARM SoCs, so it’s only applicable so long as Intel’s still ahead of the ARM producers on manufacturing technology.

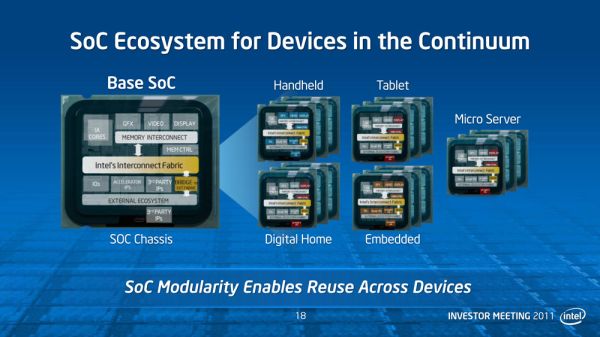

Moving on, one thing Intel has been dealing with as Atom has evolved is how to consolidate all the disparate parts of a system onto a SoC, given the wide variety of uses for a SoC. With discrete components manufacturers could simply build a device out of the parts necessary for the features they need, but with Atom SoCs much of that gets shifted onto Intel. For Intel this means they will be focusing on producing a wider variety of SoCs, based on building up individual SoC designs on a modular basis. Intel isn’t going in-depth on how many specific SoC variants they’re planning on offering, but our impression is that there will be many variants, similar to how Intel offers a number of different desktop/laptop/server CPUs based on a common architecture.

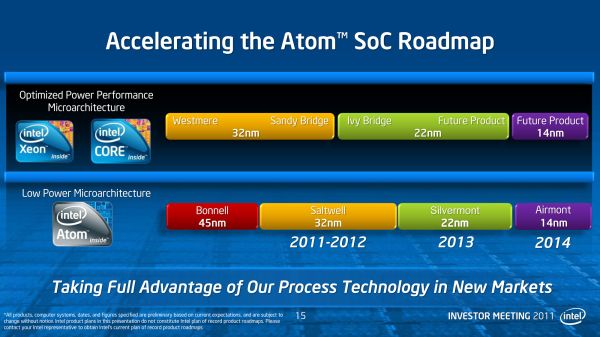

Finally, Intel published a new generalized roadmap for Atom through 2014. Unfortunately they aren’t going into any significant detail on architecture here – while Silvermont is named, nothing is confirmed besides the name and manufacturing process – but it’s a start, and it ends with a shocker. We will see Silvermont in 2013 on Intel’s 22nm process, likely hand-in-hand with Intel’s aforementioned plans for additional SoC variations.

Far more interesting however is that Intel didn’t stop with Silvermont on their Atom roadmap. Intel’s roadmap goes out to 2014 and includes Silvermont’s successor: Airmont. We know even less about Airmont than we do Silvermont, but a good guess would be that it’s the tick in Intel’s tick-tock cadence for Atom. The biggest news here is that with a move to tick-tock for Atom, Intel is finally accelerating the production of Atom parts on their newer fab processes. Currently Atom processors are a year or more behind Core processors for using a new process, and even with Silvermont that’s still going to be the case. But for Airmont that window is shrinking: Airmont will be released on Intel’s forthcoming 14nm process in 2014, the same year as the corresponding Core processors. Intel hasn’t specified when in 2014 this will be, and it’s likely it will still be months after 14nm launches for Core processors, but nevertheless it’s much sooner than it has been before.

Accelerating their production of Atom on new processes should allow Intel to capitalize on their manufacturing advantages over the rest of the fabs. With Intel’s existing Atom schedule, they only have a year or less before other fabs catch up with them, so by putting Atoms on new processes sooner, they increase that lead time. So far Intel’s ARM SoC competitors have really only had to deal with Intel’s threats on an architectural level, so having Intel challenge them at a manufacturing level too will make Intel a much more threatening competitor.

Core

Meanwhile, the rest of the architecture group’s presentation was largely a catch-all for all of Intel’s consumer technologies. Much of the talk focused on where Intel plans to be in the next few years, based on where they expect to be thanks to their newly announced 22nm process. Intel considers their 22nm process to be a significant advantage for their products, so a great deal of their plans in the consumer space involve exploiting it in some manner or another.

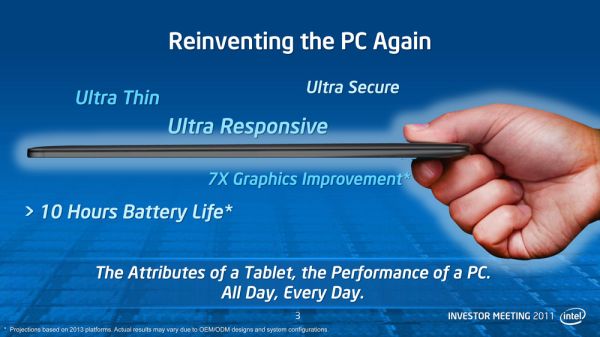

Ivy Bridge, Intel’s first 22nm product, is being shown off in a few sample systems with Intel reiterating that it will be launching at the beginning of next year – we’d guess at CES. Longer term, Intel wants to get laptops closer to netbooks/tablets in terms of size and battery life, so that they can push 10 hours on a laptop (something the C2D-based Macbook Air can already get very close to). The catalyst for this will be Haswell, Intel’s new microarchitecture on their 22nm process scheduled for 2013.

Intel also used the occasion to show off a couple of new technologies that they’re working on for Ivy Bridge generation computers. We’ve heard the name Fast Flash Standby mentioned before, but as far as we know this was the first time it has been demoed. In a nutshell, Fast Flash Standby is hibernating to SSDs, another product Intel has a significant interest in. The basis for Fast Flash Standby is that while going into sleep is fast, it requires leaving the RAM powered up to hold its contents, which is why sleep is only good for a few days of standby versus weeks for hibernation. Hibernating to an SSD, particularly one with a very high sequential read and write throughput, allows hibernation to take place much quicker and to resume much sooner. Intel is doing more here than just writing a hibernation file to an SSD, but the concept is similar.

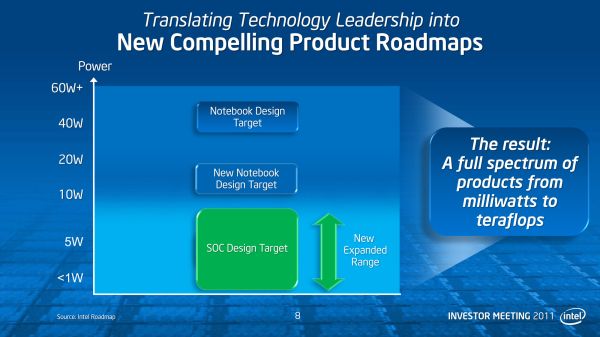

Longer term Intel is looking at what kind of markets they want to go after, and what architectures they need to reach them. Intel is talking – albeit nebulously – about a new 10-20W notebook design target to sit right above their sub-10W target for Atom/SoC. Currently Intel offers CULV Sandy Bridge processors in the 10-20W range, but Intel appears to want to go beyond CULV with this new target. Whether this is a bulked up Atom, or a further trimmed IB/Haswell/Skylake remains to be seen. Intel is throwing around some performance targets however: they’re looking to improve iGPU performance by 12x over today’s performance in that 10-20W envelope.

63 Comments

ibudic1 - Tuesday, May 17, 2011 - link

I am also having doubts going beyond 14nm. The quantum physics will start to show its ugly head. I think that the transistor thickness will be too narrow for the quantum effects of electrons "jumping" over to another gate.

I know that this was said before, but this time, I don't think they will be able to cheat physics.

Also is anyone else nervous about 3 gate transistors?

ghost55 - Tuesday, May 17, 2011 - link

they said the same thing about 92nm and the light diffusion limit, and look where we are today. my guess, is that they will go down to the transistor limit of one atom thick, ten atoms wide (they have made transistors that size) and then stuff like layered circuit boards, optical processors, and graphene will come into play. also, the 3-gate transistor will probably be a major step forward.

softdrinkviking - Tuesday, May 17, 2011 - link

3 gate transistors seem fine to me, but they seem like a stopgap tech on the way to a whole new way of building transistors rather than a step in a new direction.

FunBunny2 - Tuesday, May 17, 2011 - link

-- a whole new way of building transistors rather than a step in a new direction.

Now, that's wishful thinking. If the limit is 1 X 10 atom device, transistor or otherwise, that's the limit. For an electrical device. Which brings up the various notions of bio or photon based device. Someone has to invent the valve/transistor/foo equivalent first, though.

numberoneoppa - Wednesday, May 18, 2011 - link

Memristors!

therealnickdanger - Wednesday, May 18, 2011 - link

Or maybe we'll just see a return of large computers! Can't build the chips smaller? Put more chips in! :)

wumpus - Friday, May 20, 2011 - link

From memory, there have been light issues starting from closer to 1000nm (going submicron). Around 90nm (and really happening the next step down) leakage became an issue. This is even more apropos to the discussion in that the transistors themselves weren't working as they used to. Before you got cheaper, faster, lower power. After 90nm you had cheaper, faster, lower power: pick two (and if you don't work on lowering power heat will go up exponentially as you shrink your process).

As we keep going, the number of miracles needed for each step pile up. I think that between the money and invested equipment, the show can go on, but don't expect it to be CMOS transistors.

kb3edk - Tuesday, May 17, 2011 - link

14nm is not the end of the road but it's pretty darn close. We will probably see a 10nm process node around 2016 and then a "final" 6nm process in 2018 or so before quantum mechanics finally brings an end to the age of Moore's Law.

I'm extrapolating out from this interview with an Intel engineer from a few years ago... http://www.webcitation.org/5hjItXYEI

He seems very confident that Intel will just keep on chugging away with nanotubes and whatnot... I am less convinced however. I think that as this decade comes to a close, the only major improvements in CPUs will be in microarchitecture not lithography. It could even be the end of the road for x86 altogether, it's possible the CPUs of the 2020s are what we call GPUs today.

FunBunny2 - Tuesday, May 17, 2011 - link

-- It could even be the end of the road for x86 altogether.

IIRC, it's been years since Intel burned the instruction set into hardware. They've been using all those millions of transistors to a RISC hardware, with an emulation layer for the X86 on top. Why they did that rather than use the budget to make really fast X86 hardware is instructive. Even IBM, starting with the 360, knew enough to emulate the cheap machines and build the instruction set into hardware for the fast machines. The only plausible answer is to provide an escape hatch from X86.

Strunf - Wednesday, May 18, 2011 - link

Millions of transistors out of billions don't seem to me to be much of a handicap. It's not the decode from x86 to micro-ops that makes x86 worse than anything else; this is just a small step in the whole processing pipeline and probably the least demanding.