GIGABYTE Launches Two 4U NVIDIA Tesla GPU Servers: High Density for Deep Learning

by Joe Shields on May 9, 2018 4:00 PM EST

GIGABYTE has announced a pair of new GPU-focused servers, the 8x SXM2 GPU G481-S80 and the 10x PCIe GPU G481-HA0, offering data centers some of the highest GPU density available in this form factor. Both servers support dual Intel Xeon Scalable processors with up to 28 cores each, including the highest-TDP parts at 205W per socket. With deep learning-based systems becoming more visible in our daily lives (think image recognition, autonomous vehicles, and medical research), data centers need high-density machines to serve their clients' needs as well as save precious real estate.

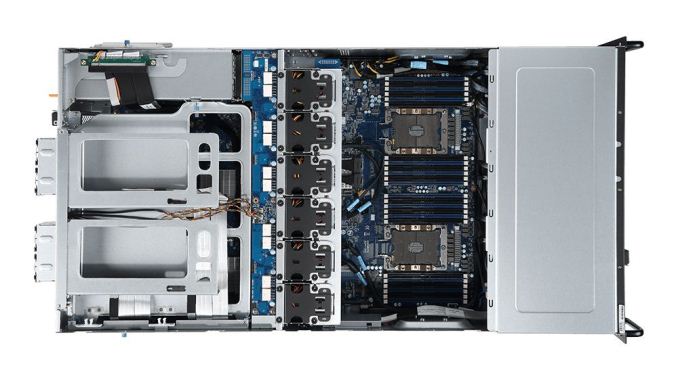

Both servers use a 4U chassis and support the latest Xeon Scalable processors with up to 28 cores per CPU and a TDP of up to 205W, along with Intel’s Omni-Path connection technology to reduce CPU-to-network latency. The G481 series uses a space-optimized layout and hot-swappable dual fan walls to keep the unit cool. The platform uses 6-channel RDIMM/LRDIMM DDR4 at up to 2666 MHz, with up to 1.5TB (CPU dependent) through 12 DIMMs per socket.
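To put the memory configuration in perspective, here is a minimal sketch of the theoretical peak DDR4 bandwidth implied by six channels at 2666 MT/s. The 8 bytes per transfer figure is an assumption based on the standard 64-bit DDR4 channel width and is not stated by GIGABYTE; the function and constant names are purely illustrative, and real-world throughput will be lower than this upper bound.

```python
# Theoretical peak DDR4 bandwidth; an upper bound, not a benchmark result.
CHANNELS_PER_SOCKET = 6     # from the article: 6-channel DDR4
TRANSFER_RATE_MT_S = 2666   # from the article: up to DDR4-2666
BYTES_PER_TRANSFER = 8      # assumption: standard 64-bit data bus per channel
SOCKETS = 2                 # from the article: dual-socket platform


def peak_bandwidth_gb_s(channels, mt_per_s, bytes_per_transfer=BYTES_PER_TRANSFER):
    """Return peak bandwidth in decimal GB/s for one socket."""
    # channels * mega-transfers/s * bytes/transfer gives MB/s; divide by 1000 for GB/s.
    return channels * mt_per_s * bytes_per_transfer / 1000


per_socket = peak_bandwidth_gb_s(CHANNELS_PER_SOCKET, TRANSFER_RATE_MT_S)
system_total = per_socket * SOCKETS

print(f"per socket: {per_socket:.1f} GB/s")
print(f"system:     {system_total:.1f} GB/s")
```

Under these assumptions the figure comes to roughly 128 GB/s per socket with every channel populated at full speed.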

The G481-S80 can accommodate up to eight SXM2 form factor GPUs from the NVIDIA Volta V100 or Pascal P100 lines, with support for NVLink GPU-to-GPU interconnection, which yields higher bandwidth, more links, and improved scalability. A single NVIDIA Tesla V100 GPU supports up to six NVLink links, each offering 25 GB/s in each direction, for a total bidirectional bandwidth of 300 GB/s. Meanwhile, the G481-HA0 supports a total of 10 PCIe GPU cards, with GIGABYTE saying these systems are designed to offer the highest GPU density available in this form factor.
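The 300 GB/s NVLink figure follows directly from the per-link numbers quoted above. A minimal sketch of the arithmetic, with illustrative names that are not part of any NVIDIA API:

```python
# Aggregate NVLink bandwidth for one Tesla V100, using the article's figures.
LINKS_PER_GPU = 6         # from the article: up to six NVLink links per V100
GB_S_PER_DIRECTION = 25   # from the article: 25 GB/s in each direction, per link
DIRECTIONS = 2            # each link carries traffic both ways


def nvlink_aggregate_gb_s(links, per_direction_gb_s, directions=DIRECTIONS):
    """Return the total bandwidth across all links and directions in GB/s."""
    return links * per_direction_gb_s * directions


per_link = GB_S_PER_DIRECTION * DIRECTIONS
total = nvlink_aggregate_gb_s(LINKS_PER_GPU, GB_S_PER_DIRECTION)

print(f"per link: {per_link} GB/s bidirectional")
print(f"per GPU:  {total} GB/s across {LINKS_PER_GPU} links")
```

Note that the 300 GB/s total counts both directions on all six links; traffic in a single direction tops out at half of that.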

GPUs aside, the G481-S80 has four PCIe expansion slots to support advanced network connectivity such as InfiniBand with RDMA (Remote Direct Memory Access), giving each GPU pair high-throughput, low-latency GPU-to-network connectivity. The HA0 can instead take a network card in place of a GPU in its PCIe slots; for example, a combination of eight GPUs and two network cards, such as InfiniBand or 10/25/50/100 Gb Ethernet, for increased flexibility.

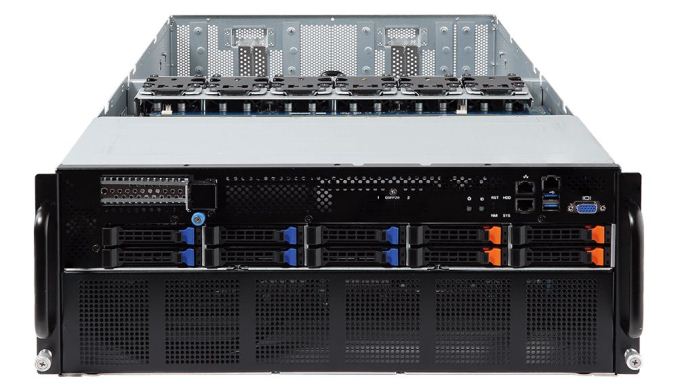

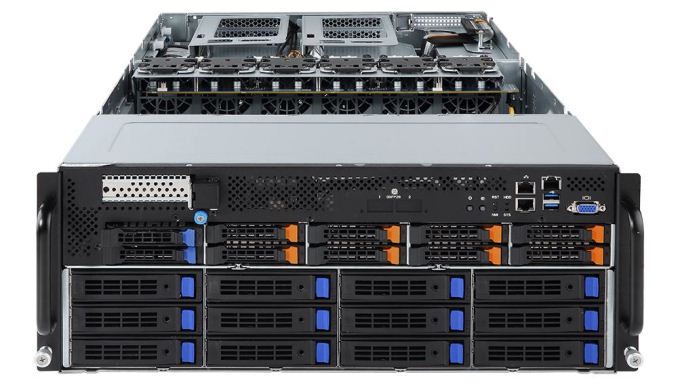

For storage, the G481-S80 has 10 2.5-inch hot-swap drive bays in the front, supporting four NVMe drives through U.2 connections and six drives through SATA/SAS. The HA0 takes that further and offers 10 2.5-inch hot-swap drive bays able to house up to eight NVMe drives and two SATA/SAS drives. Below the HA0's 2.5-inch drive area are an additional 12 3.5-inch SATA/SAS hot-swap drive bays. Both models also have a PCIe expansion slot at the front, above the storage area, for a RAID card, as well as a slot for a battery backup unit.

Powering these units are 80 Plus Platinum 2.2 kW power supply units. The S80 uses four, three for main system power and one backup for N+N redundancy, while the HA0 uses three units in an N+1 configuration.
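As a rough illustration of what these redundancy schemes mean for the power budget, the sketch below computes the nameplate capacity left once the spare units are held in reserve. The helper name and the choice of spare counts are assumptions made for illustration: the article describes the S80 as three main units plus one backup, and the 2+2 case is included only as a hypothetical comparison of what a strict N+N split of four units would look like. Real capacity also depends on input voltage and derating, which this ignores.

```python
# Nameplate power available with spare PSUs held in reserve (illustrative only).
PSU_WATTS = 2200  # from the article: 2.2 kW units


def usable_watts(total_units, spare_units, unit_watts=PSU_WATTS):
    """Return the combined nameplate wattage of the non-spare units."""
    if not 0 <= spare_units < total_units:
        raise ValueError("spare_units must be between 0 and total_units - 1")
    return (total_units - spare_units) * unit_watts


ha0_n_plus_1 = usable_watts(3, 1)   # HA0: three units, one spare (N+1)
s80_as_described = usable_watts(4, 1)   # S80 as described: three main, one backup
s80_two_plus_two = usable_watts(4, 2)   # hypothetical: strict 2+2 split of four units

print(f"HA0 (2+1): {ha0_n_plus_1} W")
print(f"S80 (3+1): {s80_as_described} W")
print(f"S80 (2+2, hypothetical): {s80_two_plus_two} W")
```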

Neither pricing nor availability was mentioned; however, product pages for both servers are up on GIGABYTE's website, so we expect to see these soon.

| GIGABYTE G481 Series | G481-S80 | G481-HA0 |
|---|---|---|
| CPU | Dual Intel Xeon Scalable Processors (up to 205W TDP per socket) | Dual Intel Xeon Scalable Processors (up to 205W TDP per socket) |
| DIMM | 6-channel RDIMM/LRDIMM/NVDIMM DDR4, 24 x DIMMs | 6-channel RDIMM/LRDIMM/NVDIMM DDR4, 24 x DIMMs |
| LAN | 2 x 1 GbE LAN ports (Intel I350-AM2); 2 x 1 Gb M-LAN ports | 2 x 10 GbE LAN ports (Intel X550-AT2); 2 x 1 GbE LAN ports (Intel I350-AM2); 2 x 1 Gb M-LAN ports |
| Storage | 4 x 2.5" NVMe and 6 x 2.5" SATA/SAS hot-swappable HDD/SSD bays | 8 x NVMe and 2 x SATA/SAS 2.5" hot-swappable HDD/SSD bays; 12 x 3.5" SATA/SAS hot-swappable HDD/SSD bays |
| Expansion Slots | 5 x low profile PCIe 3.0 expansion slots; 1 x OCP Gen3 x16 Mezzanine slot | 10 x PCIe x16 slots (Gen3 x16 bus) for GPUs; 2 x low profile PCIe 3.0 expansion slots |
| GPU Supported | 8 x SXM2 GPU modules (NVIDIA V100 or P100) | 10 x PCIe GPU cards |
| Power Supply | 4 x 80 PLUS Platinum 2200W redundant PSUs | 3 x 80 PLUS Platinum 2200W redundant PSUs |

Complete specifications for each server can be found on their product pages (S80, HA0).

Related Reading:

- GIGABYTE's G190-G30: A 1U Server with four Volta and NVLink

- GIGABYTE Server Launches Three New Density-Focused Servers: Skylake-SP, Choice of NIC

- Immersion Server Liquid Cooling: ZTE makes a Splash at MWC

- More EPYC Servers: Dell Launches 1P and 2P PowerEdge for HPC and Virtualization

- Sizing Up Servers: Intel's Skylake-SP Xeon versus AMD's EPYC 7000 - The Server CPU Battle of the Decade?

- Intel to Update Xeon D in Early 2018, with Skylake-SP Cores

- Intel Launches Xeon-W CPUs for Workstations: Skylake-SP & ECC for LGA2066

Source: GIGABYTE

6 Comments


drexnx - Thursday, May 10, 2018

...how many sol/s in equihash? what's the sols/watt?

dolphin2x - Thursday, May 10, 2018

How does this handle PCIe lane switching? The HA0 has 10x x16 slots, but 2x sockets will only get you 96 lanes total. Seems like they'd be overcommitting GPUs to a certain extent.

MajGenRelativity - Thursday, May 10, 2018

They could have a PLX chip to increase the PCIe lane count available

dolphin2x - Thursday, May 10, 2018

It wouldn't increase lane count, only enable multiplexing to the existing 96 lanes right? So there would still be contention if all the GPUs were maxing out their x16's.

MajGenRelativity - Thursday, May 10, 2018

It wouldn't increase lane count to the CPU, no. I do however think it increases bandwidth *between* the GPUs, depending on their locations relative to the switch.

dolphin2x - Thursday, May 10, 2018

Thanks for your response!