GTC 2010 Day 1: NVIDIA Announces Future GPU Families for 2011 And 2013

by Ryan Smith on September 22, 2010 2:46 AM EST

As we mentioned last week, we’re currently down in San Jose, California covering NVIDIA’s annual GPU Technology Conference. If Intel has IDF and Apple has the World Wide Developers Conference, then GTC is NVIDIA’s annual powwow to rally their developers and discuss their forthcoming plans. The comparison to WWDC is particularly apt, as GTC is a professional conference focused on development and business use of the compute capabilities of NVIDIA’s GPUs (e.g. the Tesla market).

NVIDIA has been pushing GPUs as computing devices for a few years now, as they see it as the next avenue of significant growth for the company. GTC is fairly young – the show emerged from NVISION and its first official year was just last year – but it’s clear that NVIDIA’s GPU compute efforts are gaining steam. The number of talks and the number of vendors at GTC are up compared to last year, and according to NVIDIA’s numbers, so is the number of registered developers.

We’ll be here for the next two days meeting with NVIDIA and other companies and checking out the show floor. Much of this trip is to get a better grasp on just where things stand for NVIDIA’s still-fledgling GPU compute efforts, especially on the consumer front. There, GPU compute usage has been much flatter than we were hoping for at this time last year, when NVIDIA and AMD announced and released their next-generation GPUs alongside the ancillary launch of APIs such as DirectCompute and OpenCL, which are intended to let developers write an application against these common APIs rather than targeting CUDA or Brook+/Stream. If nothing else, we’re hoping to see where our own efforts in covering GPU computing need to lie: we want to add more compute tests to our GPU benchmarks, but has the market reached the point where there’s going to be significant GPU compute usage in consumer applications? This is what we’ll be finding out over the next two days.

Jen-Hsun Huang Announces NVIDIA’s Next Two GPUs

While we’re only going to be on the show floor Wednesday and Thursday, GTC unofficially kicked off Monday, and the first official day of the show was Tuesday. Tuesday started off with a 2-hour keynote speech by NVIDIA’s CEO Jen-Hsun Huang, which, in keeping with the theme of GTC, focused on the use of NVIDIA GPUs in business environments.

Not unlike GTC 2009, NVIDIA is also using the show as a chance to announce their next-generation GPUs. GTC 2009 saw the announcement of the Fermi family, with NVIDIA first releasing the details of the GPU’s compute capabilities there, before moving on to focusing on gaming at CES 2010. This year NVIDIA announced the next two GPU families the company is working on, albeit not in as much detail as we got about Fermi in 2009.

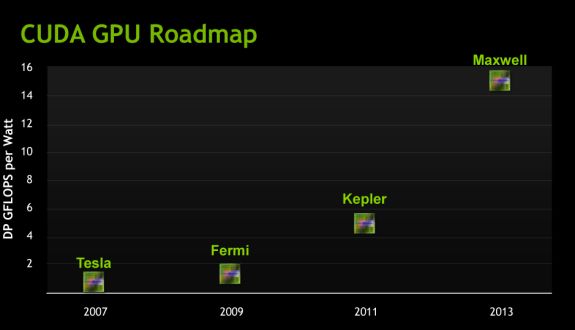

The progression of NVIDIA's GPUs from a Tesla/Compute Standpoint

The first GPU is called Kepler (as in Johannes Kepler the mathematician), which will be released in the 2nd half of 2011. At this point the GPU is still a good year out, which is why NVIDIA is not talking about its details just yet. For now they’re merely talking about performance in an abstract manner: Kepler should offer 3-4 times Fermi’s double precision floating point performance per watt. With GF100 NVIDIA basically hit the wall for power consumption (and this is part of the reason current Tesla parts are running 448 out of 512 CUDA cores), so NVIDIA will have to earn their performance improvements without increasing power consumption. They’re also going to have to earn their keep in sales, as NVIDIA is already talking about Kepler taking 2 billion dollars to develop and it’s not out for another year.

The second GPU is Maxwell (named after James Clerk Maxwell, the physicist/mathematician), which will be released some time in 2013. Compared to Fermi it should offer 10-12 times the DP FP performance per watt, which means it’s roughly another 3x increase over Kepler.
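The "roughly another 3x" figure follows from dividing the two quoted ranges. As a minimal sketch (the constant and function names here are our own, and the only inputs are the 3-4x and 10-12x multipliers NVIDIA quoted relative to Fermi), the arithmetic looks something like this:

```python
# DP performance-per-watt multipliers relative to Fermi, as quoted in the keynote.
# These are NVIDIA's abstract projections, not measured results.
KEPLER_VS_FERMI = (3.0, 4.0)
MAXWELL_VS_FERMI = (10.0, 12.0)


def relative_gain(newer, older):
    """Return (low, mid, high) gain of `newer` over `older`.

    Each argument is a (low, high) range of multipliers over the same baseline.
    The low bound pairs the newer part's worst case with the older part's best
    case, and the high bound does the opposite; mid divides the range midpoints.
    """
    low = newer[0] / older[1]
    high = newer[1] / older[0]
    mid = (sum(newer) / 2) / (sum(older) / 2)
    return low, mid, high


low, mid, high = relative_gain(MAXWELL_VS_FERMI, KEPLER_VS_FERMI)
print(f"Maxwell over Kepler: {low:.1f}x to {high:.1f}x (midpoint ~{mid:.1f}x)")
# prints "Maxwell over Kepler: 2.5x to 4.0x (midpoint ~3.1x)"
```

So depending on where each GPU lands within its quoted range, the step from Kepler to Maxwell could be anywhere from 2.5x to 4x, with about 3x as the middle of the road.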

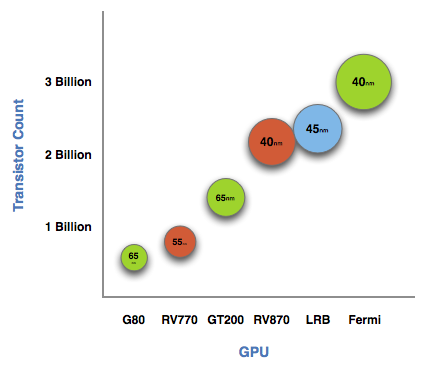

NVIDIA GPUs and manufacturing processes up to Fermi

NVIDIA still has to release the finer details of the GPUs, but we do know that Kepler and Maxwell are tied to the 28nm and 22nm processes respectively. So if nothing else, this gives us strong guidance on when they would be coming out, as production-quality 28nm fabrication suitable for GPUs is still a year out and 22nm is probably a late 2013 release at this rate. What’s clear is that NVIDIA is not going to take a tick-tock approach as stringently as Intel did – Kepler and Maxwell are going to launch against new processes – but this is only about GPUs for NVIDIA’s compute efforts. It’s likely the company will still emulate tick-tock to some degree, producing old architectures on new processes first; similar to how NVIDIA’s first 40nm products were the GT21x GPUs. In this scenario we’re talking about low-end GPUs destined for life in consumer video cards, so the desktop/graphics side of NVIDIA isn’t bound to this schedule like the Fermi/compute side is.

At this point the biggest question is going to be what the architecture is. NVIDIA has invested heavily in their current architecture ever since the G80 days, and even Fermi built upon that. It’s a safe bet that these next two GPUs are going to maintain the same (super)scalar design for the CUDA cores, but beyond that anything is possible. This also doesn’t say anything about what the GPUs’ performance is going to be like under single precision floating point or gaming. If NVIDIA focuses almost exclusively on DP, we could see GPUs that are significantly faster at that while not being much better at anything else. Conversely they could build more of everything and these GPUs would be 3-4 times faster at more than just DP.

Gaming of course is a whole other can of worms. NVIDIA certainly hasn’t forgotten about gaming, but GTC is not the place for it. Whatever the gaming capabilities of these GPUs are, we won’t know for quite a while. After all, NVIDIA still hasn’t launched GF108 for the low-end.

Wrapping things up, don’t be surprised if Kepler details continue to trickle out over the next year. NVIDIA took some criticism for introducing Fermi months before it shipped, but it seems to have worked out well for the company anyhow. So a repeat performance wouldn’t be all that uncharacteristic for them.

And on that note, we’re out of here. We’ll have more tomorrow from the GTC show floor.

82 Comments


silverblue - Wednesday, September 22, 2010 - link

Quite so; apparently it's not difficult to convert x87 to SSE, yet nVidia deliberately didn't in order to make a better case for hardware physics processing. If they're going to be giving CUDA a helping hand, then they should put some effort into releasing version 3.0 of the PhysX SDK too.

iwodo - Thursday, September 23, 2010 - link

While personally hate it, This is exactly what Intel has done so on their Compiler against AMD.

Ryan Smith - Wednesday, September 22, 2010 - link

CUDA-x86 is a commercial product by the Portland Group. It's not even the first x86 runtime for CUDA; Ocelot offered this last year (although I suspect Portland's implementation will be much better). In any case since it's a commercial product, don't expect to see it used in consumer products; it really doesn't sound like it's intended for that.

iwodo - Thursday, September 23, 2010 - link

Arh, thanks for the clarification. I thought it came with the SDK as well.

AnandThenMan - Wednesday, September 22, 2010 - link

--- NVIDIA took some criticism for introducing Fermi months before it shipped, but it seems to have worked out well for the company anyhow. ---

It did? Fermi is a disaster for Nvidia, at least up until now. AMD is about to move on to a new(er) architecture, Nvidia has not even put Fermi into all market segments. Your statement is highly suspect.

Ryan Smith - Wednesday, September 22, 2010 - link

Keep in mind we're at GTC, so we're talking about the compute side of things. The guys here weren't any happier than anyone else about GF100's delay, but the fact of the matter is that there are halls full of developers happily clobbering Tesla products. For them, having Fermi announced ahead of its actual launch was a big deal. And that's why the early announcement worked out well for the company - it was for the developers, not the consumers.

muhahaaha - Wednesday, September 22, 2010 - link

After your recent failure with Fermi (hot, loud, and power hungry), you're gonna need to lie and hype your next gen stuff.

If anything like last time happens, you'll be on your ass, you media whores, re-branding liars, and FUD pushers.

That CEO gotta go. What a foo.

aguilpa1 - Wednesday, September 22, 2010 - link

Dude you need to grow up. When the next generation of ATI the 2900XT came it was "hot, loud and power hungry...., and under performing to the at that time brand new 8800 series. It has taken this long for the architecture to mature. It has matured, you haven't. As far as re branding amd media whores, both sides do it man..., whatever.

Dark_Archonis - Wednesday, September 22, 2010 - link

The only thing suspect here is the motives of some posters.

Fermi is loud, hot, and power hungry? Really? I guess you've been living under a rock, and never heard of the GTX 460 or the GTS 450. Neither of them are loud, hot, or that power-hungry.

You want to know what's loud, hot, and power-hungry? An ATI 5970 card.