AMD's Ryzen 9 6900HS Rembrandt Benchmarked: Zen3+ Power and Performance Scaling

by Dr. Ian Cutress on March 1, 2022 9:30 AM EST

Office and Science

In this version of our test suite, all the science focused tests that aren’t ‘simulation’ work are now in our science section. This includes Brownian Motion, calculating digits of Pi, molecular dynamics, and for the first time, we’re trialing an artificial intelligence benchmark, both inference and training, that works under Windows using python and TensorFlow. Where possible these benchmarks have been optimized with the latest in vector instructions, except for the AI test – we were told that while it uses Intel’s Math Kernel Libraries, they’re optimized more for Linux than for Windows, and so it gives an interesting result when unoptimized software is used.

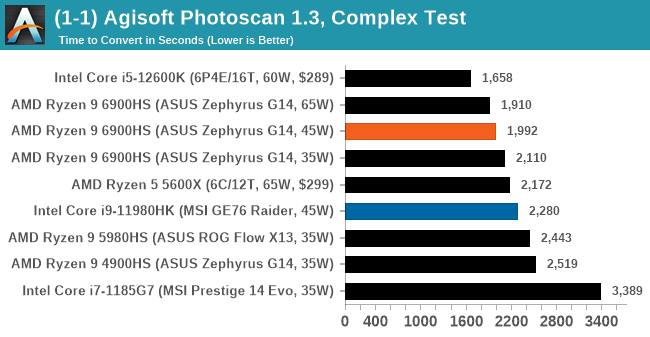

Agisoft Photoscan 1.3.3: link

The concept of Photoscan is about translating many 2D images into a 3D model - so the more detailed the images, and the more you have, the better the final 3D model in both spatial accuracy and texturing accuracy. The algorithm has four stages, with some parts of the stages being single-threaded and others multi-threaded, along with some cache/memory dependency in there as well. For some of the more variable threaded workload, features such as Speed Shift and XFR will be able to take advantage of CPU stalls or downtime, giving sizeable speedups on newer microarchitectures.

For the update to version 1.3.3, the Agisoft software now supports command line operation. Agisoft provided us with a set of new images for this version of the test, and a python script to run it. We’ve modified the script slightly by changing some quality settings for the sake of the benchmark suite length, as well as adjusting how the final timing data is recorded. The python script dumps the results file in the format of our choosing. For our test we obtain the time for each stage of the benchmark, as well as the overall time.
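As a rough illustration of the per-stage timing described above, here is a minimal Python sketch. It does not use the Agisoft API or the script Agisoft provided; the stage names and the `placeholder_stage` function are hypothetical stand-ins, and the only point is to show how each stage's time and the overall time can be recorded and dumped in a format of our choosing (JSON here, as an assumption).

```python
import json
import time
from typing import Callable, Dict, List, Tuple


def time_stages(stages: List[Tuple[str, Callable[[], None]]]) -> Dict[str, float]:
    """Run each named stage in order; return per-stage and total wall time in seconds."""
    results: Dict[str, float] = {}
    total = 0.0
    for name, stage in stages:
        start = time.perf_counter()
        stage()
        elapsed = time.perf_counter() - start
        results[name] = elapsed
        total += elapsed
    results["total"] = total
    return results


def placeholder_stage() -> None:
    """Hypothetical stand-in for one stage of real work (not an Agisoft call)."""
    sum(i * i for i in range(10_000))


if __name__ == "__main__":
    # Four stages, matching the four-stage algorithm described in the text.
    # The names are illustrative only.
    stages = [(f"stage_{n}", placeholder_stage) for n in range(1, 5)]
    print(json.dumps(time_stages(stages), indent=2))
```

Timing each stage separately, rather than only the whole run, makes it possible to see whether a CPU gains in the single-threaded or the multi-threaded parts of the workload.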

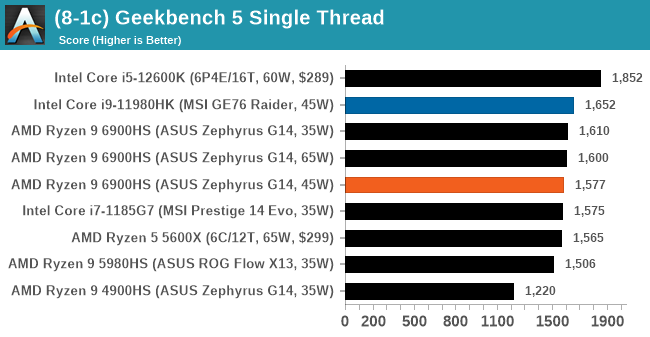

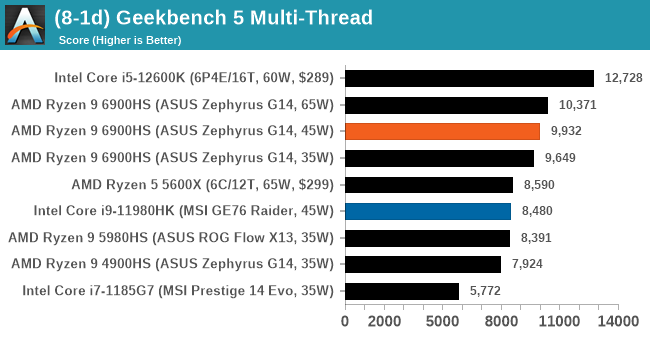

GeekBench 5: Link

As a common tool for cross-platform testing between mobile, PC, and Mac, GeekBench is an ultimate exercise in synthetic testing across a range of algorithms looking for peak throughput. Tests include encryption, compression, fast Fourier transform, memory operations, n-body physics, matrix operations, histogram manipulation, and HTML parsing.

I’m including this test due to popular demand, although the results do come across as overly synthetic, and a lot of users often put a lot of weight behind the test due to the fact that it is compiled across different platforms (although with different compilers).

We have both GB5 and GB4 results in our benchmark database. GB5 was introduced to our test suite after already having tested ~25 CPUs, and so the results are a little sporadic by comparison. These spots will be filled in when we retest any of the CPUs.

We saw a few instances where the 35W and 45W results were almost identical, with the 35W setting coming out marginally ahead in single-threaded tasks. This may be because 35W was a fixed setting in the software options, whereas 45W was the power management framework in action.

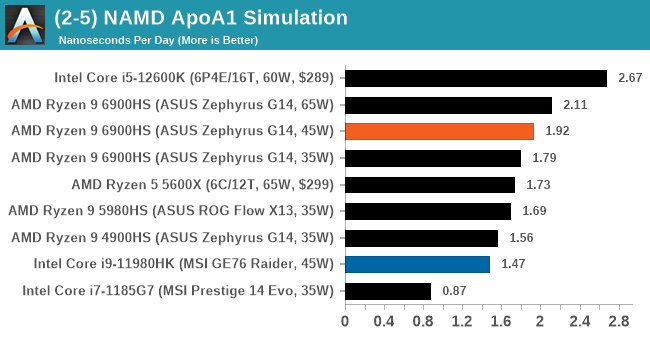

NAMD 2.13 (ApoA1): Molecular Dynamics

One of the popular science fields is modeling the dynamics of proteins. By looking at how the energy of active sites within a large protein structure changes over time, scientists behind the research can calculate required activation energies for potential interactions. This becomes very important in drug discovery. Molecular dynamics also plays a large role in protein folding, and in understanding what happens when proteins misfold, and what can be done to prevent it. Two of the most popular molecular dynamics packages in use today are NAMD and GROMACS.

NAMD, or Nanoscale Molecular Dynamics, has already been used in extensive Coronavirus research on the Frontera supercomputer. Typical simulations using the package are measured in how many nanoseconds per day can be calculated with the given hardware, and the ApoA1 protein (92,224 atoms) has been the standard model for molecular dynamics simulation.

Luckily the compute can home in on a typical ‘nanoseconds-per-day’ rate after only 60 seconds of simulation. However, we stretch that out to 10 minutes to take a more sustained value, as by that time most turbo limits should be surpassed. The simulation itself works with 2 femtosecond timesteps. We use version 2.13 as this was the recommended version at the time of integrating this benchmark into our suite. The latest nightly builds we’re aware of have started to enable support for AVX-512, but for consistency in our benchmark suite we are staying with 2.13. Other software that we test with has AVX-512 acceleration.

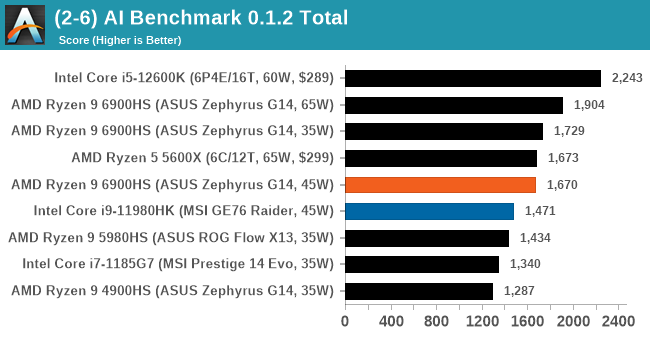

AI Benchmark 0.1.2 using TensorFlow: Link

Finding an appropriate artificial intelligence benchmark for Windows has been a holy grail of mine for quite a while. The problem is that AI is such a fast-moving, fast-paced world that whatever I compute this quarter will no longer be relevant in the next, and one of the key metrics in this benchmarking suite is being able to keep data over a long period of time. We’ve had AI benchmarks on smartphones for a while, given that smartphones are a better target for AI workloads, but it also makes some sense that everything on PC is geared towards Linux as well.

Thankfully however, the good folks over at ETH Zurich in Switzerland have converted their smartphone AI benchmark into something that’s useable in Windows. It uses TensorFlow, and for our benchmark purposes we’ve locked our testing down to TensorFlow 2.10, AI Benchmark 0.1.2, while using Python 3.7.6.

The benchmark runs through 19 different networks including MobileNet-V2, ResNet-V2, VGG-19 Super-Res, NVIDIA-SPADE, PSPNet, DeepLab, Pixel-RNN, and GNMT-Translation. All the tests probe both the inference and the training at various input sizes and batch sizes, except the translation that only does inference. It measures the time taken to do a given amount of work, and spits out a value at the end.

There is one big caveat for all of this, however. Speaking with the folks over at ETH, they use Intel’s Math Kernel Libraries (MKL) for Windows, and they’re seeing some incredible drawbacks. I was told that MKL for Windows doesn’t play well with multiple threads, and as a result any Windows results are going to perform a lot worse than Linux results. On top of that, after a given number of threads (~16), MKL kind of gives up and performance drops off quite substantially.

So why test it at all? Firstly, because we need an AI benchmark, and a bad one is still better than not having one at all. Secondly, if MKL on Windows is the problem, then by publicizing the test, it might just put a boot somewhere for MKL to get fixed. To that end, we’ll stay with the benchmark as long as it remains feasible.

We saw a few instances where the 35W and 45W results were almost identical, with the 35W setting coming out marginally ahead in single-threaded tasks. This may be because 35W was a fixed setting in the software options, whereas 45W was the power management framework in action.

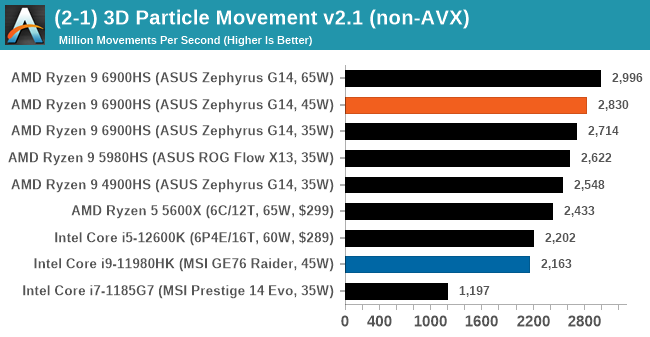

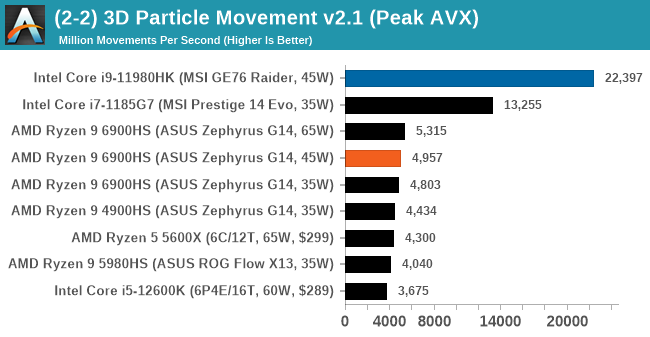

3D Particle Movement v2.1: Non-AVX and AVX2/AVX512

This is the latest version of this benchmark designed to simulate semi-optimized scientific algorithms taken directly from my doctoral thesis. This involves randomly moving particles in a 3D space using a set of algorithms that define random movement. Version 2.1 improves over 2.0 by passing the main particle structs by reference rather than by value, and decreasing the amount of double->float->double recasts the compiler was adding in.

The initial version of v2.1 is a custom C++ binary of my own code, and flags are in place to allow for multiple loops of the code with a custom benchmark length. By default this version runs six times and outputs the average score to the console, which we capture with a redirection operator that writes to file.

For v2.1, we also have a fully optimized AVX2/AVX512 version, which uses intrinsics to get the best performance out of the software. This was done by a former Intel AVX-512 engineer who now works elsewhere. According to Jim Keller, there are only a couple dozen or so people who understand how to extract the best performance out of a CPU, and this guy is one of them. To keep things honest, AMD also has a copy of the code, but has not proposed any changes.

The 3DPM test is set to output millions of movements per second, rather than time to complete a fixed number of movements.
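For readers who want a feel for this kind of workload, here is a minimal Python sketch of random particle movement scored in millions of movements per second. It is not the 3DPM code and does not reproduce the algorithms from the thesis: the single movement rule (a unit step in a uniformly random direction), the function names, and the default particle and step counts are all assumptions chosen for illustration. Only the six-loop average and the throughput-style score mirror the description above.

```python
import math
import random
import time
from typing import List, Tuple


def random_unit_step(rng: random.Random) -> Tuple[float, float, float]:
    """Pick a uniformly random direction on the unit sphere (z and azimuth sampled uniformly)."""
    z = rng.uniform(-1.0, 1.0)
    phi = rng.uniform(0.0, 2.0 * math.pi)
    r = math.sqrt(1.0 - z * z)
    return r * math.cos(phi), r * math.sin(phi), z


def move_particles(particles: List[List[float]], steps: int, rng: random.Random) -> int:
    """Move every particle 'steps' times, updating it in place; return the movement count."""
    for p in particles:
        for _ in range(steps):
            dx, dy, dz = random_unit_step(rng)
            p[0] += dx
            p[1] += dy
            p[2] += dz
    return len(particles) * steps


def average_score(num_particles: int = 100, steps: int = 100,
                  loops: int = 6, seed: int = 1) -> float:
    """Average throughput over 'loops' runs, in millions of movements per second."""
    rng = random.Random(seed)
    scores = []
    for _ in range(loops):
        particles = [[0.0, 0.0, 0.0] for _ in range(num_particles)]
        start = time.perf_counter()
        moves = move_particles(particles, steps, rng)
        elapsed = time.perf_counter() - start
        scores.append(moves / elapsed / 1e6)
    return sum(scores) / len(scores)


if __name__ == "__main__":
    print(f"{average_score():.3f} million movements per second")
```

Reporting a rate instead of a completion time means a higher score is better, and the number stays comparable if the benchmark length is changed with a flag.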

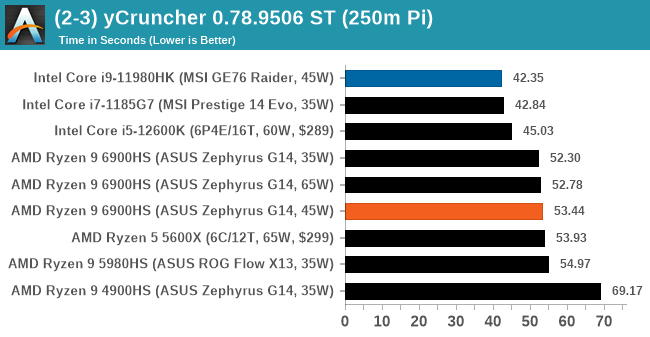

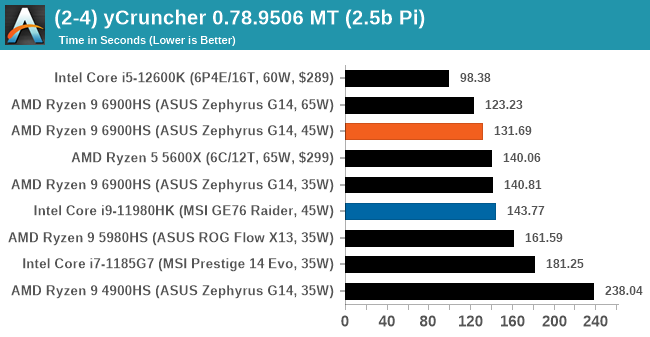

y-Cruncher 0.78.9506: www.numberworld.org/y-cruncher

If you ask anyone what sort of computer holds the world record for calculating the most digits of pi, I can guarantee that a good portion of those answers might point to some colossus supercomputer built into a mountain by a super-villain. Fortunately nothing could be further from the truth – the computer with the record is a quad socket Ivy Bridge server with 300 TB of storage. The software that was run to get that was y-cruncher.

Built by Alex Yee over the last part of a decade and some more, y-cruncher is the software of choice for calculating billions and trillions of digits of the most popular mathematical constants. The software has held the world record for Pi since August 2010, and has broken the record a total of 7 times since. It also holds records for e, the Golden Ratio, and others. According to Alex, the program runs around 500,000 lines of code, and he has multiple binaries each optimized for different families of processors, such as Zen, Ice Lake, Skylake, all the way back to Nehalem, using the latest SSE/AVX2/AVX512 instructions where they fit in, and then further optimized for how each core is built.

For our purposes, we’re calculating Pi, as it is more compute bound than memory bound. In single thread mode we calculate 250 million digits, while in multithreaded mode we go for 2.5 billion digits. That 2.5 billion digit value requires ~12 GB of DRAM, and so is limited to systems with at least 16 GB.
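To show what ‘calculating digits of Pi’ means at a toy scale, here is a short Python sketch that uses Machin's formula with big-integer arithmetic. This is a swapped-in textbook method and not y-cruncher's algorithm or code; the function names and the choice of 10 guard digits are our own assumptions, and the approach is only practical for a few thousand digits, nowhere near the 250 million or 2.5 billion used in the test.

```python
def arctan_inverse(x: int, unity: int) -> int:
    """Return arctan(1/x) scaled by 'unity', summing the Taylor series with integers only."""
    term = unity // x
    total = term
    x_squared = x * x
    divisor = 1
    sign = -1
    while term:
        term //= x_squared          # next odd power of 1/x, still scaled by unity
        divisor += 2
        total += sign * (term // divisor)
        sign = -sign
    return total


def pi_digits(digits: int) -> str:
    """Return pi truncated to 'digits' decimal places, via Machin's formula:
    pi = 16*arctan(1/5) - 4*arctan(1/239)."""
    guard = 10  # extra digits to absorb integer truncation error
    unity = 10 ** (digits + guard)
    pi_scaled = 4 * (4 * arctan_inverse(5, unity) - arctan_inverse(239, unity))
    pi_scaled //= 10 ** guard
    text = str(pi_scaled)
    return text[0] + "." + text[1:]


if __name__ == "__main__":
    print(pi_digits(50))
```

Even in this small form the work is dominated by large-number multiplication and division, which is the kind of arithmetic that lets heavily optimized software benefit from wide vector instructions.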

92 Comments

Kangal - Sunday, March 6, 2022 - link

Zeno, Guards of Zeno, Grand Priest, Whis/Angels, Awakened Gass, Ultra Granolah, Ultra Instinct Goku, Beerus/GoDs, Fused Zamasu, Prime Moro, Raged Broly, Ultra Ego Vegeta, Full-power Jiren, LSS Kefla, Destruction Toppo, Max Hit, Anilaza, SSJ Rose Black, Golden Frieza, Dyspo, LSS Kale, Spirit Future Trunks, Super Ribrianne, Trained #17, Ultimate Gohan, Buu, Seven-Three.

GeoffreyA - Monday, March 7, 2022 - link

Astonishing, and thanks for that! Remembering only Buu, Ultimate Gohan, and a bit of Beerus, I am really out of touch with DB canon!

Kangal - Tuesday, March 8, 2022 - link

You can find the new series Dragon Ball Super online or even on YouTube. There is also the official manga which you can read for free* here: https://www.viz.com/shonenjump/chapters/dragon-bal...

*only the latest three issues available, new issues always free.

GeoffreyA - Tuesday, March 8, 2022 - link

Thanks, I only saw the first 10-15 episodes of Super in 2018, where Beerus came to the cruiseship, and still hope to watch the rest of it, eventually. It was nostalgic, I remember, seeing these dear characters after so long.

mode_13h - Wednesday, March 9, 2022 - link

Eh, I only watched the original series. The few things after it that I had seen lacked the same charm. And among that series, the first season was one of the best. The censors had clearly cracked down on it, after that.

What I thought worked so well about the original DB was Goku's innocence, ignorance, indomitable spirit, and purity of heart. That made it so much more entertaining and gratifying to see him overcome all the obstacles and enemies he encountered. And it's almost as if not knowing his own limitations made him unrestrained by them.

GeoffreyA - Thursday, March 10, 2022 - link

That's an exact description of Goku's character and is likely the secret of his greatness and why he often prevailed over his enemies. I would add that forgiveness was another trait of his, and something they could never understand. It's almost paradoxical, at least in DBZ, how he was the comic clumsy figure, and yet when it came to saving the world, only he could do it. Without knowing it, people are emulating, I feel, more of Vegeta's cynical character today. What the world needs is more of Goku.

mode_13h - Friday, March 11, 2022 - link

I haven't watched much since the mid 2000's, but I thought Luffy, in One Piece, had a similar personality. However, he seemed to have a mercurial wisdom and canniness, just beneath the surface. In that regard, he seemed to have echos of Irresponsible Captain Taylor.

I don't remember too much of Naruto, though I had started watching it from the beginning. Like Goku, he also had an innocence and indomitably, but there was obviously a darkness about him and inside of him.

I wouldn't have patience for any of that, now. Even at the time, it seemed rather excessively drawn out.

BTW, did you see the live action DB movie? I think it was made around 2008? More of a Hollywood movie; not Japanese. I'm apparently among the small minority who actually liked it. It helps to remember that Dragon Ball itself is loosely based on the ancient tale of Saiyuki and just don't expect a direct translation from the TV series or manga. It's very much a reinterpretation, but I enjoyed it.

GeoffreyA - Monday, March 14, 2022 - link

Luffy is very like Goku, regarding his innocence, simplicity, and goodness, but yes, he had another element which is hard to pin down. Perhaps a certain stoic quality that clicked on at times, and made him something fearful to all those who practised evil. (It's even evident when they left the Merry; Goku would never have operated like that, and for my part, I agree with Usopp.) I really loved One Piece. It could often be tedious and silly, but once the story knocked into gear, it was usually astonishing, and gave you the feeling of being on an adventure with noble companions. Namaka. My favourite arcs were Arlong/Nami, Arabasta, and Water 7. In 2018, I got stuck at Thriller Bark and never went on.

What caused me to stop was seeing Evangelion for the first time that year. It left me a sadder, more sober person for ever; and I'll add a word of warning to others, Eva is terribly depressing and no joke. That and Steins;Gate are my favourite anime. I've heard great things about Naruto but to this day have not seen a single episode. Rurouni Kenshin was nice.

My brother really enjoyed the live-action Dragon Ball, but though I've seen only bits and pieces, I always debate with him that it's not the real DB! He contends that it's pretty good. Well, perhaps I need to sit down and actually watch it and give it a proper appraisal.

mode_13h - Tuesday, March 15, 2022 - link

Wow, all those One Piece names are definitely a flashback. I hung out with some Kenshin fans, so I saw the entire original series.

Evangelion was pretty mind-blowing for me, when the original series first aired. A bit confusing, especially with the movies, the revisionist ending, and whatnot.

More recently, I went to a marathon showing of the original series, back when they started releasing the new version. I watched the first 2-3 installments of the new version and just quit. It got too absurd for me. I never liked the direction Gainax took with FLCL, but I guess it was inevitable the new Eva would go there (and lose me). I did really like Kare Kano and Chobits (lol, they seemed to have HDDs inside!).

I got a lot from Evangelion, but I'm pretty much over it. Not unlike how I parted ways with the Star Wars franchise, more than a decade ago. I just don't need it. I don't seem to have trouble finding enough to watch. For instance, a movie I recently enjoyed was Arrival.

Even the news is like a high-tension drama, for at least the past half decade. I can really feel like I'm living through history. It gives me a new perspective on much of the past century and what it was probably like, at the time.

Some other old anime that's fun to re-watch are the original Patlabor OAV series and movies, Akira, and the Ghost in the Shell series and movies. And every time I hear about space junk in the news, my mind goes back to Planetes.

GeoffreyA - Tuesday, March 15, 2022 - link

Evangelion is indeed out of this world. It's really the sadness of the characters that touched me, though of course the relentless, minimal action was impressive when it came. Can anyone ever forget Eva-01 breaking out of the shadow space in episode 16, or the time, nearing the end, when it reactivates though its power is gone? The new movies' weakness is that they tried to spin everything out to excessive detail, whereas the series' strength was minimalism. The characterisation, too, was subtly altered. Anyhow, last year I watched the final film, Thrice upon a Time, and can honestly say they did a good job and ended Eva on a surprisingly cheerful note, with a good message. I secretly hope for a return someday.

I haven't seen most of the anime you mentioned, except for Akira and Ghost in the Shell. Suffice to say, Akira leaves the viewer speechless.

Same here. Lost my interest in Star Wars and don't care to see it again. Arrival was great, with a good performance by Amy Adams. And talking of Villeneuve movies, the new Dune was a big disappointment to me.