The Intel Lakefield Deep Dive: Everything To Know About the First x86 Hybrid CPU

by Dr. Ian Cutress on July 2, 2020 9:00 AM EST

A Stacked CPU: Intel’s Foveros

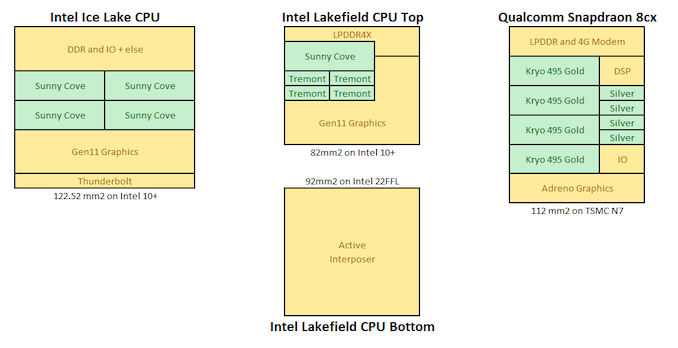

The previous designs of Intel, AMD and Qualcomm are what we call monolithic designs – everything on the processor happens on a single piece of physical silicon. When everything is on a single piece of silicon, it makes data management inside the processor a lot easier and simpler, it makes designing the processor a lot simpler, and manufacturing and assembly can be streamlined when only dealing with one physical element to the processor.

However, there have been moves in the industry to deviate from these single monolithic designs, as trying something different is starting to offer useful points of differentiation within a product portfolio. It can lead to optimizations on different parts of the processor, it can be advantageous for cost reasons, and it can also expand silicon products beyond traditional manufacturing limits.

Monolithic vs Chiplets

You may be aware that recent AMD desktop processors are built on a ‘chiplet’ design. This is where multiple pieces of silicon are connected through wires in the green PCB in order to create a single ‘processor’. By using separate chiplets, each individual chiplet can either be focused on a single task (and be manufactured in the most efficient way for that task) or it can be one of several repeated units designed to scale out the compute performance.

For example, a processor core that contains logic circuits might aim for performance, and thus might require a very speed optimized layout. This has different manufacturing requirements compared to something like a USB controller, which is built to a series of specifications as per the USB standard.

Under a traditional monolithic regime, the single piece of silicon will use a singular manufacturing process that has to be able to cater for both situations: the processor core logic and the USB controller. By separating different parts of the overall design into different pieces of silicon, each one can be optimized for the best manufacturing scenario. This only works as long as the connectivity between the chips works, and it potentially enables a better mix of performance where you need it, and better efficiency (or cheaper cost) where you need it as well.

Of course, there are trade-offs: additional connectivity is required, and each chiplet needs to be able to connect to other chiplets – the total physical design area of the combined chiplets is often greater than what a single piece of silicon would offer because of these connectivity additions, and it could become costly to assemble depending on how many parts are involved (and if those parts are manufactured in different locations). Ultimately, if some chiplets are on an expensive manufacturing process, and some are on a cheaper manufacturing process, then we get the benefits of the expensive process (power, performance) without having to spend the money to build everything on that process, overall saving money.

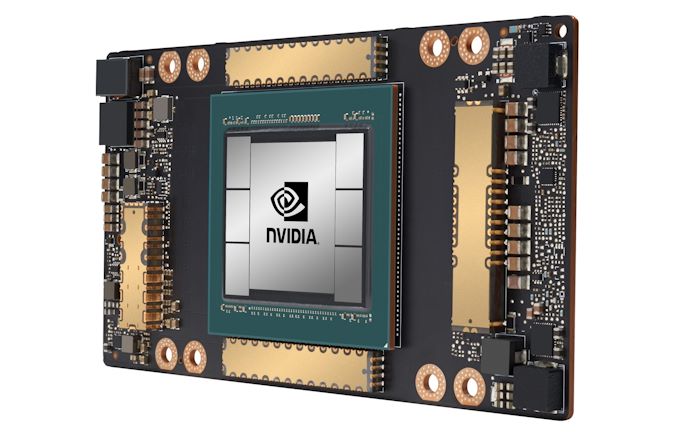

One other benefit that a chiplet process can bring is total silicon size of the product. Standard monolithic silicon designs, due to the manufacturing process technologies we use today, have an upper bound of how big a single piece of silicon can be. By implementing chiplets, suddenly that upper limit isn’t much of a concern unless each chiplet reaches that limit - using multiple chiplets can give a total silicon area bigger than a single monolithic chip design. An example of this is AMD’s Rome CPUs, which total an area of over 1000 square millimetres, while the single largest monolithic silicon die is NVIDIA’s A100 GPU, coming in at 826 square millimetres.

NVIDIA's A100 GPU, with one big monolithic die and six high bandwidth memory dies.
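To make the area argument concrete, here is a minimal sketch that sums the silicon in a chiplet package and compares it with the largest die a single lithography exposure can print. The per-die areas for Rome (roughly 74 mm² per compute die and 416 mm² for the IO die) and the 26 mm x 33 mm reticle field are commonly reported approximations and are assumptions here, not figures taken from this article; only the 826 mm² A100 number comes from the text above.

```python
# Assumed single-exposure reticle field: 26 mm x 33 mm (about 858 mm^2).
RETICLE_LIMIT_MM2 = 26.0 * 33.0

# Monolithic A100 die size as quoted in the article.
A100_DIE_MM2 = 826.0

# Assumed, approximate Rome die sizes as (count, area in mm^2).
ROME_DIES = [
    (8, 74.0),   # compute chiplets
    (1, 416.0),  # IO die
]


def total_area_mm2(dies):
    """Sum the silicon area over a list of (count, area_mm2) entries."""
    return sum(count * area for count, area in dies)


def largest_die_mm2(dies):
    """Return the area of the biggest single die in the package."""
    return max(area for _count, area in dies)


if __name__ == "__main__":
    total = total_area_mm2(ROME_DIES)
    print(f"Rome total silicon: {total:.0f} mm^2")
    print(f"Largest single Rome die: {largest_die_mm2(ROME_DIES):.0f} mm^2")
    print(f"Reticle limit (assumed): {RETICLE_LIMIT_MM2:.0f} mm^2")
    print(f"Total exceeds reticle limit: {total > RETICLE_LIMIT_MM2}")
```

Under these assumptions the package total lands above what one exposure could print, while every individual die stays comfortably below it, which is the point the paragraph above makes.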

Already in Market: AMD Chiplets

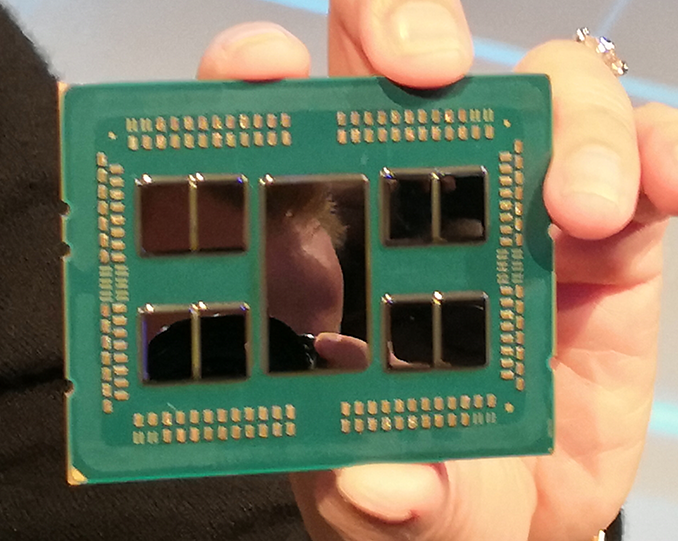

To put this into context of a modern design, AMD’s Ryzen processors use one or more ‘compute chiplets’ combined with a single ‘peripheral’ chiplet (often called an IO die). The compute chiplets are built on TSMC’s high-performance 7nm manufacturing node which extracts peak performance and power from the design. The ‘peripheral’ chiplet, which is not so peak performance focused but more tuned to standards like SATA, PCIe and USB, can be built on a manufacturing node where efficiency is more important, and also the cost can be lower, such as GlobalFoundries’ cheaper 14nm manufacturing node. Put together, these chiplets form a singular product.

AMD's Rome with 1 big IO die and eight compute dies

AMD had to overcome a lot of hurdles to get here, such as developing a chip-to-chip connectivity standard (known as Infinity Fabric) and managing the power of that connectivity. There were also physical manufacturing challenges, such as ensuring all the individual chiplets match the same height so that the heatspreader and cooler that go on top are effective.

One of the benefits of AMD going this route, according to the company, is that it allows them to scale the parts of their design that are easiest to scale for performance (the compute cores), and also manage where they think the future of compute is going. The other big benefit is that total die size of one of AMD’s server CPUs is larger than what can be manufactured in a single piece of silicon.

This sort of chiplet-based approach also leads people to believe that AMD could swap out a compute-based chiplet for a graphics-based chiplet, or an AI-focused chiplet, and thus AMD could in the future offer different variants of its products depending on customer requirements for the different workloads that an organization might have.

Coming to Market: Intel Chiplets

For Lakefield, Intel also goes down the chiplet route. But instead of placing the chiplets physically alongside each other like AMD, the chiplets are stacked on top of one another. This creates a physically smaller processor package in the x-y dimensions, which is a critical component for laptop and small form factor mobile designs that Lakefield is aiming towards.

This stacked design replaces the tradeoff of physical space for one of cooling. By placing two high-powered bits of silicon on top of each other, managing thermals becomes more of an issue. Nonetheless, the physically smaller floorplan in the x-y directions (along with a design focused on embedding more control into the processor) helps build thinner and lighter systems.

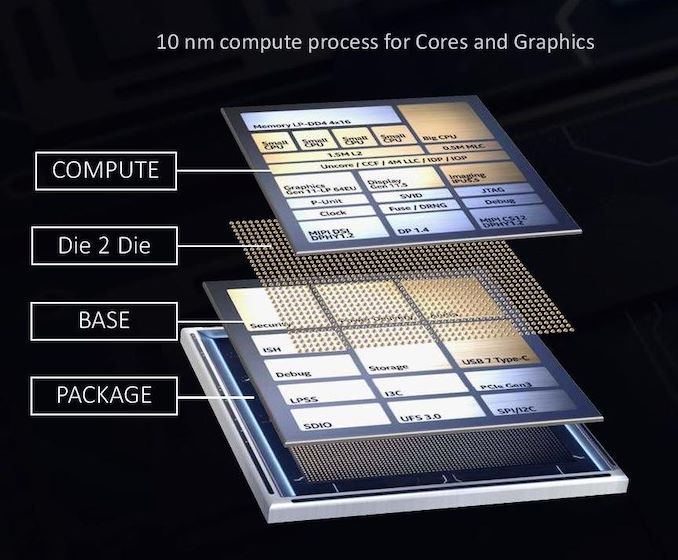

For the two stacked chiplets in the middle, the top chiplet is built on Intel’s high-performance 10nm+ manufacturing node and contains the 1+4 compute core configuration, as well as the graphics and the memory controller. The bottom chiplet contains the ‘peripheral’ components that are not as performance related, such as security controller, USB ports, and PCIe lanes. This is built on Intel’s cheaper 22nm manufacturing node.

Because this chiplet is on the bottom and has connections for power to pass through, Intel technically calls the lower chiplet an ‘active interposer’. An interposer is a term commonly used when chiplets are connected through a base piece of silicon, rather than through a green package PCB, because it allows communication between chiplets to be faster and more efficient, but it is a more expensive implementation.

What makes it an active interposer, rather than the passive interposers we have seen on some GPUs in recent years, is that it contains functional logic, such as the USB ports, the security, the chipset functions, and others. The passive interposers are just connection passthroughs, taking advantage of faster signaling. Active interposers include functional logic and have an associated power consumption that goes along with that.

The reason I bring this up is because there is some debate as to whether an active interposer is true 3D stacking as traditionally interpreted, or more akin to 2.5D stacking, which is what we commonly call a passive interposer. For those users who read more about Lakefield beyond AnandTech, you are likely to see both used.

Getting Stacked: DRAM and NAND vs Lakefield

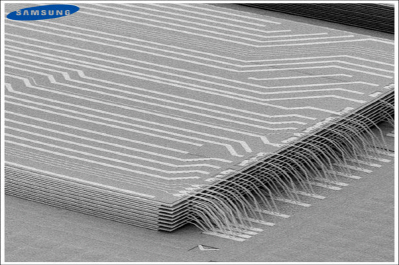

The use of stacking is not necessarily new to the world of semiconductors. Both computer random access memory, such as DRAM, and storage components, such as NAND Flash, have implemented multiple layer technology for many years. What makes these elements different is the way they are stacked, plus also the power of the components involved.

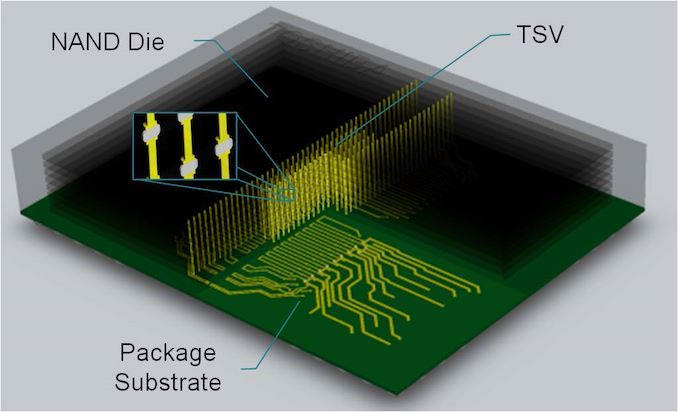

The two main ways of stacking silicon together are through simple wire bonding, where the layers are not directly connected, or with Through Silicon Vias (TSVs), which are akin to stacks running through the layers.

This is an image of Samsung’s NAND wire bonding technique, where multiple layers have separate connections to a base die. There is no direct connection between layers other than the act of physically coming together.

This is ‘Through Silicon Via’ (TSV) stacking, whereby each layer has a vertical channel that connects to the die above and below it. It allows for direct connection through the stack for fast access, which is useful when NAND has 64 or more layers. It can be quite difficult to do as well, but NAND manufacturers are experts in this methodology.

However, DRAM and NAND Flash are not the high-powered elements of a computer. Even the densest memory configurations look to contribute single-digit milliwatts of power per layer when in use. Applying these techniques to high-powered computer chips is a bit more complex.

Stacking with Lakefield

What Intel is doing with Lakefield, with its stacking, is putting together multiple layers of high-performance compute into a single product. Also, while most DRAM and NAND Flash implementations stack silicon on top of each other and then use external wire bonding or TSVs to provide connectivity, for Intel’s Lakefield the connectivity goes through the silicon, as with a traditional interposer (as mentioned above), and uses die-to-die bonding to provide the communications.

Intel calls its stacking technology ‘Foveros’. It uses a novel design of die-to-die connectivity.

At the bottom is the base packaging material that connects all the signals going out into the system (power, USB, display). On top of this is the base silicon peripheral die, the active interposer, containing things like the USB control, storage control, security, and such.

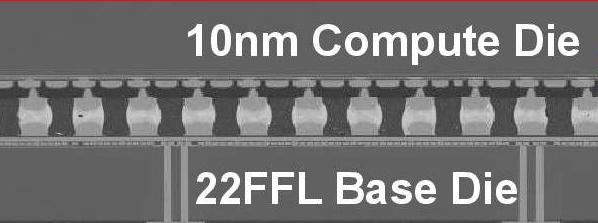

Between the base silicon peripheral die and the top logic compute die is a method of connecting the two: in this case, a solder ball array with a 50 micron pitch. This is essentially a ‘balls-on-balls’ technique, but with two silicon dies of different process node manufacturing techniques.

These connections will come in three flavors: structural, data, and power. Creating these bumps and ensuring they deliver what is intended is a hard problem – electrical issues, such as capacitance, and computational issues, such as maintaining a clock frequency, have to be managed, along with achieving targets in data rate bandwidth as well as power.
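To get a feel for what a 50 micron pitch means, the sketch below converts a bump pitch into a bump density. It assumes the bumps sit on a regular square grid, which is an assumption for illustration rather than something Intel has stated, and the resulting count covers all three flavors (structural, data and power) together.

```python
def bumps_per_mm2(pitch_um: float) -> float:
    """Bump density for a square grid of bumps at the given pitch (microns)."""
    if pitch_um <= 0:
        raise ValueError("pitch must be positive")
    bumps_per_mm = 1000.0 / pitch_um  # bumps along one millimetre
    return bumps_per_mm ** 2          # square grid: same count in x and y


def bumps_in_area(pitch_um: float, area_mm2: float) -> float:
    """Upper-bound bump count for a bonded region of the given area."""
    return bumps_per_mm2(pitch_um) * area_mm2


if __name__ == "__main__":
    density = bumps_per_mm2(50.0)
    print(f"50 um pitch: {density:.0f} bumps per mm^2")
```

On that assumption, a 50 micron pitch works out to 400 bumps in every square millimetre of overlap between the two dies, which helps explain why keeping each one electrically well behaved is a hard problem.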

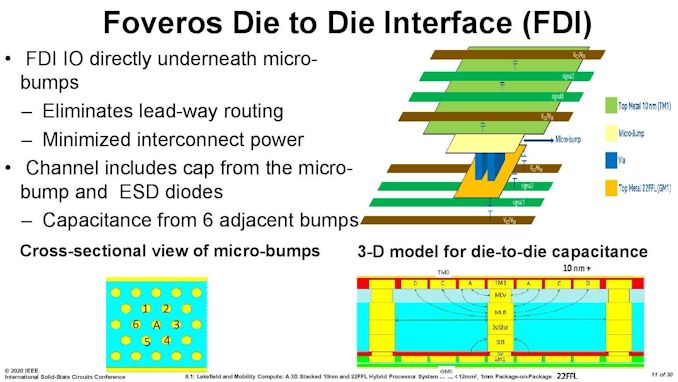

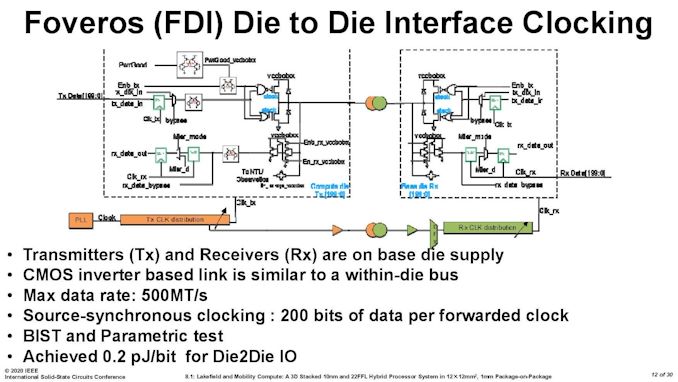

Here is the main introduction slide that Intel presented at the ISSCC conference regarding the die-to-die interface. Unfortunately these were the quality of the pictures as presented (the unreadable aspect ratios are also native to the presentation).

As mentioned, maintaining the clock coherency at speed and low power is a concern, and here’s what Intel did, with each connection operating at 500 mega-transfers per second. The key point on this slide is the power: 0.2 picojoules of energy consumed per bit transferred. If we extrapolate this out to a memory bandwidth of 34 GBps (maximum memory bandwidth of Lakefield), this equates to 54 milliwatts of power for the data transfer.
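The extrapolation above is a simple unit conversion, sketched here for anyone who wants to check it. The function names are hypothetical, gigabytes are taken as decimal (10^9 bytes), and the connection count assumes each connection carries one bit per transfer, which is an assumption for illustration and not something stated on Intel's slide.

```python
import math


def link_power_mw(bandwidth_gbytes_per_s: float, energy_pj_per_bit: float) -> float:
    """Power in milliwatts needed to move data at the given rate."""
    bits_per_s = bandwidth_gbytes_per_s * 1e9 * 8   # GB/s -> bit/s
    watts = bits_per_s * energy_pj_per_bit * 1e-12  # pJ/bit -> J/bit
    return watts * 1e3                              # W -> mW


def connections_needed(
    bandwidth_gbytes_per_s: float,
    transfers_per_s: float = 500e6,  # 500 MT/s per connection, from the article
    bits_per_transfer: int = 1,      # assumption: one bit per transfer
) -> int:
    """Minimum number of data connections to sustain the bandwidth."""
    bits_per_s = bandwidth_gbytes_per_s * 1e9 * 8
    return math.ceil(bits_per_s / (transfers_per_s * bits_per_transfer))


if __name__ == "__main__":
    print(f"Power at 34 GB/s, 0.2 pJ/bit: {link_power_mw(34, 0.2):.1f} mW")
    print(f"Data connections at 500 MT/s: {connections_needed(34)}")
```

The first figure reproduces the roughly 54 mW quoted above. The second suggests that, under the one-bit-per-transfer assumption, a few hundred data connections would be needed to carry the full memory bandwidth across the interface.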

0.2 pJ/bit is one of the benefits of keeping the transmission of the data ‘inside’ the silicon, but moving between the two layers. This is an order of magnitude better than the numbers quoted by AMD for its first generation EPYC server processors, which used data transfer links within the CPU package – AMD quoted 2 pJ/bit transfer by comparison.

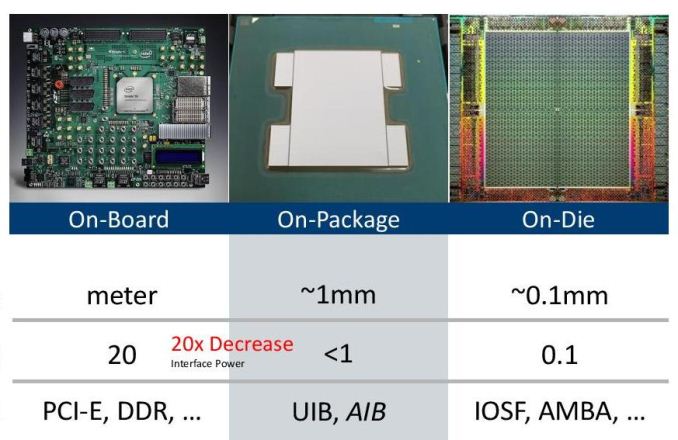

Here’s a slide from Intel’s 2018 Hot Chips talk about new data transfer and connectivity suggestions. On the left is the ‘on-board’ power transfer through a PCB, which runs at 20 pJ/bit. In the middle is on-package data transfer, akin to what AMD did with 1st Gen EPYC’s numbers, around 1-5 pJ/bit (depends on the technique), and then we get on-silicon data movement, which is more 0.1 pJ/bit. Intel’s Foveros die-to-die interconnect is most like the latter.
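As a rough way to compare these classes of interconnect, the sketch below applies each energy-per-bit figure quoted above to the same 34 GB/s data rate. Applying one bandwidth to every class is purely illustrative, since real links of each type run at different speeds, and the dictionary keys and function names are hypothetical labels.

```python
# Energy per bit in pJ, as quoted in the text, stored as (low, high) ranges.
ENERGY_PJ_PER_BIT = {
    "on-board (PCB)": (20.0, 20.0),
    "on-package": (1.0, 5.0),
    "on-silicon": (0.1, 0.1),
    "Foveros die-to-die": (0.2, 0.2),
}

# Lakefield's maximum memory bandwidth, per the article (decimal gigabytes).
BANDWIDTH_GBYTES_PER_S = 34.0


def power_mw(energy_pj_per_bit: float,
             gbytes_per_s: float = BANDWIDTH_GBYTES_PER_S) -> float:
    """Milliwatts needed to move data at gbytes_per_s for a given pJ/bit."""
    bits_per_s = gbytes_per_s * 8e9
    return bits_per_s * energy_pj_per_bit * 1e-12 * 1e3


def power_table() -> dict:
    """Map each interconnect class to its (low, high) power in milliwatts."""
    return {
        name: (power_mw(low), power_mw(high))
        for name, (low, high) in ENERGY_PJ_PER_BIT.items()
    }


if __name__ == "__main__":
    for name, (low, high) in power_table().items():
        if low == high:
            print(f"{name:>20}: {low:8.1f} mW")
        else:
            print(f"{name:>20}: {low:8.1f} to {high:.1f} mW")
```

Seen this way, moving the same data across a PCB would cost watts rather than tens of milliwatts, which is why keeping the traffic between stacked dies matters so much in a power budget as small as Lakefield's.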

221 Comments

ichaya - Sunday, July 19, 2020 - link

SPEC is useful for some IPC comparisons, but it's questionable to use it for much else. PG bench in the phoronix link has a 50%+ speedup with SMT which is basically in line for perf/W/$ with Graviton 2 instance. The worst case is Cassandra, but everything else is within ~5% for similar perf/$ if not comparable perf/W too since comparing TDP is workload dependent as well and not measured by most tests.

XZ and Blender are ~45% faster with SMT in your openbenchmark link, but that's a 3900X (12-core/24-thread), so any comparisons to server chips (64-core Graviton 2) are unfair given power consumption and core differences. 4 times the L3 is also wrong, it's 50% more L2+L3 with half the cores and SMT if you're being fair between m6g.16xlarge or c6g.16xlarge and c5a.16xlarge.

Quantumz0d - Friday, July 3, 2020 - link

Intel has lost its edge. And this whole portable nonsense is reaching peaks of stupidity. Those Lakefield processor equipped machines will be close to $1000 for their thin and ultra light 1 USB C / 1 3.5mm audio jack, what a fucking disaster.

I had owned one ultrabook which is Acer Aspire S3 and I used to even play DotA2 on that, and after 1-2 years the whole machine heated like crazy, I repasted, no dice, cleaned fans, nothing. And then battery also stopped holding a charge. Now what? That stupid POS is dead, not even worth, meanwhile a Haswell machine with rPGA socket, and an MXM slot from 2013 and guess what? the GPU got an upgrade to Pascal 1070 MXM from Kepler 860M.

All these BGA trash machines will no longer hold charge nor have their serviceability, older ultrabooks at least had a 2.5" drive, newer ones have NVMe SSDs, these 2 in 1 trash like most of the Surface lineup is almost impossible to even repair or service. And because of this thin and light market Windows 10 has been ruined as well to cater to this bs phenomenon and desktop class OS is hit with that ugly Mobile UX which lacks powerful software options, navigation and all. Plus you don't even get to repair it yourself due to non available servicing parts.

With Apple HW same thing, full BGA not even NVMe SSDs, and now they also started to make their Mac OS look and feel like iOS trash. This whole mobile and ultra portable garbage is ruining everything, from gaming to the HW.

PandaBear - Monday, July 6, 2020 - link

They don't want to cannibalize their highly profitable x86 business, so they have to give you crap for what you want if you want to pay less. The problem right now is other companies don't have to deal with this political monopoly BS and they are eating Intel for lunch.

Most monopolies die this way: when their monopoly business is obsoleted and they hang on to it to milk the cow till it dies.

yeeeeman - Friday, July 3, 2020 - link

Tigerlake should also be in the pipeline soon, right?

Deicidium369 - Saturday, July 4, 2020 - link

Benchmarks showing it destroying AMD Renoir at single core, and within 17% on MT - despite half the cores... https://wccftech.com/intel-10nm-core-i7-1165g7-cpu...

watzupken - Sunday, July 5, 2020 - link

"Benchmarks showing it destroying AMD Renoir at single core, and within 17% on MT - despite half the cores... https://wccftech.com/intel-10nm-core-i7-1165g7-cpu..."

Till we see the actual performance, you need to take these leaks with a lot of salt. The test bed are not revealed in leaks and it is not possible to ascertain if it is a realistic number. This we don't have to speculate for long since it should be out pretty soon.

pugster - Friday, July 3, 2020 - link

Lakefield's 2.5w standby sounds kind of high. ARM cpu is probably much lower than that.

Ian Cutress - Monday, July 20, 2020 - link

2.5 mW

ProDigit - Friday, July 3, 2020 - link

Qualcomm has proven that a single fast core isn't enough. Intel needs to at least do 2 fast cores. Then add at least 6 atom cores.

But if Intel wants to compete with AMD, it'll need to create a quad core big setup, with at least 10 to 12 atom cores.

Any less will be too little. These are too little as is, competing against the 3000 series of AMD.

It would be awesome, if Intel could make a 25W quad core cpu, paired with an additional 40 watts on atom cores. That's about 20 additional cores, or a 24 core cpu.

abufrejoval - Friday, July 3, 2020 - link

A great article overall, very informative, deeply technical while still readable to a layman, very little judgement or marketing, allowing readers to form their own opinion: Anandtech at its very best!

Not mentioned in the article and not covered by the comments so far is that the main driver behind Intel’s low power SoCs has been Apple: This is what Intel thought Apple would want and be happy with!

And if you contrast it to what Apple will now do on their own, that makes me want to sell all my Intel shares: Good thing I never had any.

This is another Intel 80432 or i860, tons of great ideas engineered into parts, but great parts don’t automatically make a convincing whole.

And I simply don’t see them iterate that into many more designs over the next years at competitive prices: With that hot-spot governed layout between the two all the flexibility and cost savings a chiplet design is supposed to deliver goes away and you now have two chips in a very tight symbiosis with no scale-up design benefits.

It’s a Foveros tech demo, but a super expensive one with very little chance of currying favors even at ‘negative revenues’ in the current market.

X86 is not competitive in terms of Watts or transistors required for a given amount of compute. It didn’t matter that much in PCs, the competing servers were much worse for a long time, but in the mobile space, phones to ultrabooks, it seems impossible to match ARM, even if you could rewind the clock by ten years and started to take BIG-little seriously. Lakefield is essentially a case study for Core being too big and thus power hungry and Atom failing on performance.

ISA legacy is still holding x86 from dying completely, but that matters less and less at both the top of the performance range with servers and at the bottom in mobile, where the Linux kernel rules supreme and many userlands and ISAs compile just fine.

Gaming is a hold-out, but perhaps the last generation consoles on x86, gamer PCs alone too much of a niche to determine the future.

The desktop will switch to who offers the bigger, longer lasting bang for the buck and there is a very good chance that will be ARM next.

Microsoft may be allowed to blunder along with lackluster ARM64 support for a couple more days, but Apple’s switch puts them under long deserved pressure. A nice Linux/Android/Chromium hybrid ultrabook running whatever Office could get things moving quicker… at least I hope that, because I’d never want to be forced into the bitten Apple…. by these corporate decision makers I see already twitching.

No chance I’d ever let a new Apple into my home: The ][ was the last good one they made.