The Intel Lakefield Deep Dive: Everything To Know About the First x86 Hybrid CPU

by Dr. Ian Cutress on July 2, 2020 9:00 AM ESTLakefield in Terms of Laptop Size

In a traditional AMD or Intel processor designed for laptops, we experience two to eight processing cores, along with some graphics performance, and it is up to the company to build the chip with the aim of hitting the right efficiency point (15 W, or 35/45 W) to enable the best performance for a given power window. These processors also contain a lot of extra connectivity and functionality, such as a dual channel memory controller, extra PCIe lanes to support external graphics, support for USB port connectivity or an external connectivity hub, or in the case of Intel’s latest designs, support for Thunderbolt built right into the silicon without the need for an external controller. These processors typically have physical dimensions of 150 square millimeters or more, and in a notebook, when paired with the additional power delivery and controllers needed such as Wi-Fi and modems, can tend towards the board inside the system (the motherboard) totaling 15 square inches total.

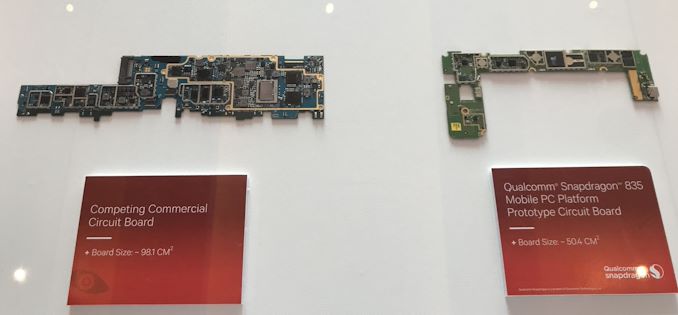

One of Qualcomm’s examples from 2018

For a Qualcomm processor designed for laptops, the silicon is a paired down to the essentials commonly associated with a smartphone. This means that modem connectivity is built into the processor, and the hardware associated with power delivery and USB are all on the scale of a smartphone. This means a motherboard designed around a Qualcomm processor will be around half the size, enabling different form factors, or more battery capacity in the same size laptop chassis.

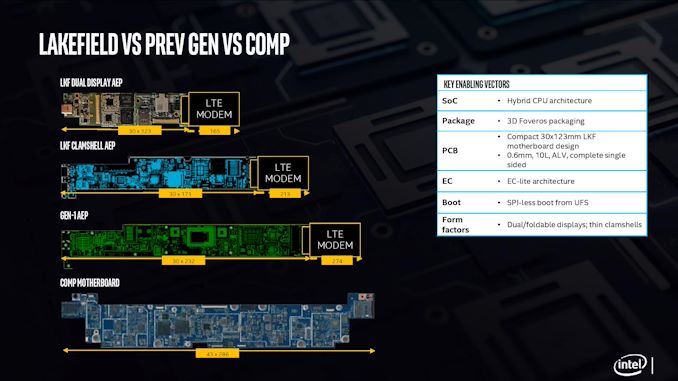

With Intel’s new Lakefield processor design, the chip is a lot smaller than previous Intel implementations. The company designed the processor from the ground up, with as much included on the CPU as to not need additional chips on the motherboard, and to fit the dimensions similar to one of Qualcomm’s processors. Above is a slide showing how Intel believes that with an LTE modem included, a Lakefield motherboard can move down to 7.7 square inches, similar to a Qualcomm design. This leaves more room for battery inside a device.

When Intel compares it against its own previous low power CPU implementations, the company quotes a 60% decrease in overall board area compared to its first generation 4.5 W processors.

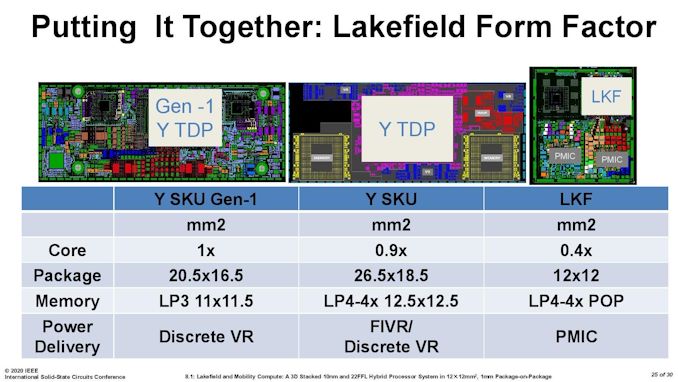

It is worth noting that for power delivery, Intel placed MIMCAPs inside the Lakefield silicon, much like a smartphone processor, and as a result it can get by on the power delivery implementation with a pair of PMICs (power management ICs). The reason why there is two is because of the two silicon dies inside – they are controlled differently for power for a number of technical reasons. If each layer within an active stacked implementation requires its own PMIC, that would presumably put an upper limit on future stacked designs – I fully expect Intel to be working on some sort of solution for this for it not to be an issue, however that wasn’t implemented in time for Lakefield.

For those that are interested, Lakefield’s PMICs are under the codenames Warren Cove and Castro Cover, and were developed in 2017-2018.

221 Comments

View All Comments

ichaya - Sunday, July 12, 2020 - link

You've claimed ARM64 has a code density advantage without any evidence for a few posts now. Being byte-aligned has advantages too, which are clear in the paper with the real world program! You're welcome to provide more real world evidence!We're changing the goal posts now with new numbers, you can't estimate IPC based on one specific INTrate2006 test, and assume it's similar across other workloads as well. If we just stick to INTrate2006, IPC seems within 5% where Graviton 2 has twice the cache of AMD Epyc 7742.

Comparing a top-line power number like you were doing is irrelevant when features like AVX can easily blow past any power envelope you might have, and one chip lacks the feature.

Wilco1 - Sunday, July 12, 2020 - link

No, I am stating that AArch64 has better code density as a fact. Maybe 5 years ago you could argue about it as AArch64 was still relatively new, but today that's not even disputable. So check it out if you'd like to see it for yourself.I used the overall intrate result to get an accurate IPC comparison. If you do the math correctly you'll see that Graviton 2 has 12% higher IPC than EPYC 7742.

At the end of the day what matters is performance, perf/W and cost. Whether you have AVX or not is not relevant in this comparison - EPYC 7742 uses the same amount of power whether it executes AVX code or not.

ichaya - Tuesday, July 14, 2020 - link

This is not the first time I've seen someone look at single thread performance and disregard everything else. All Graviton 2 and A13 single thread gains can be attributed to large (100~200% more) shared L2/L3 caches, and when compared with x86, 5% or even 75% IPC gains turn out to be ~10% less real world performance or ~10% more with marginal power use difference on 7nm. AMD has everything from a 15W to 280W chip.For multi-threaded, the Graviton 2 looks better, but the 64 vcpu EPYC 2 c5a.16xlarge (144MB L2+L3) AWS instance costs the same as the 64 core Graviton 2 m6g.16xlarge (96MB L2+L3) instance and delvers equivalent performance on real world tasks while having 1/2 the real cores, 1/2 the system RAM and 50% more L2+L3.

perf/W/$ is important, and since ARM has always been on the lower end of W and $, it can be hard to see past it. If you can compare cache sizes, power and real world performance, the only thing revolutionary is the fact that Amazon, Apple and the ARM ecosystem have come this far in a few years. The overall features (AVX2+SMT among others) and openness still leaves a lot to be desired.

Wilco1 - Wednesday, July 15, 2020 - link

Single threaded performance is important in showing that x86 does no longer have the big advantage it once used to have. Overall throughput is well correlated with single thread performance, you can see that clearly in the results we discussed. Do you believe 64 Graviton 1 cores would do equally well against 7742 if they had the same huge caches?I haven't seen serious benchmarks on c5a, do you have a link? With 32 cores at 3.3GHz it should burn well over 200W, not an improvement...

It's not that revolutionary if you followed the rapid increase of single thread performance over the last 5 years. Smartphones paid for the progress in microarchitecture and process technology that enabled competitive Arm servers (it helped AMD surpass Intel as well). I don't believe SMT or AVX are useful - 128 cores in Altra Max will beat 64 cores with SMT+AVX on performance and area at similar power.

As for AVX, this article discusses how Intel's latest CPU disables AVX... Linus had some interesting comments recently about the fragmentation of the many AVX variants. Then there are all the unresolved clocking and power issues. It's a mess.

ichaya - Thursday, July 16, 2020 - link

If there was a significant power difference between m6g.16xlarge and c5a.16xlarge, they would be priced differently. 128GB of RAM can't be more than ~15W.Single thread performance can help multi-thread performance up to a point, but SMT, non-boost clocks, and biasing towards TLP more than ILP (like an in-order GPU) can hurt single thread performance at the expense of more multi-threaded throughput.

AVX-512 is a mess, but AVX2 is worth having in most contexts now. Maybe some AVX512 instructions worth having will make it into a AVX2.1 which can completely supersede AVX2. For the price of Lakefield, there are certainly more attractive options, though compatibility, packaging and performance can trump battery life.

Wilco1 - Thursday, July 16, 2020 - link

Well there is a much better comparison, c6g.16xlarge has 128GB and is 12% cheaper than c5a.16xlarge. More than enough to pay for the electricity cost of the 280W TDP of c5a.Yes you can optimize for multithreaded throughput but SMT remains questionable, especially for large core counts. Why add SMT when you could just add some more cores?

Indeed AVX512 is worse, and could be removed without anyone missing it. Lakefield battery life comparisons are in, the Atom curse has struck yet again...

ichaya - Thursday, July 16, 2020 - link

12% is probably more the amount of subsidies these instances are getting. Amazon has a very very long history of putting any profit margins back into growth. Either that, or 128GB of RAM is 100W+!SMT is perhaps the lowest level at which TLP can be extracted, recent multi-core Atoms don't have it, but for server/workstation tasks like compilation, DB engine or even general multi-tasking, it's well worth it.

Wilco1 - Friday, July 17, 2020 - link

Graviton 2 is less than a third of the silicon area of EPYC so cheaper to make. 128GB server DRAM costs over $1000, which is why the 256GB/512GB versions are more expensive. The power cost of extra DRAM is a tiny fraction of that.There are tasks where SMT helps but equally there also tasks where it is slower. So it looks great on marketing slides where you just show the best cases, but overall it is a small gain.

ichaya - Saturday, July 18, 2020 - link

I wouldn't call a 64 vcpu (180W) system beating or equaling a 64 core (110W) system in web serving/DB and code compilation a small gain. The tasks where SMT hurts is basically single threaded JS, which is just such a shame. Shame! I don't think POWER, SPARC and others have been wrong in having added SMT years ago.For code compilation and DB the differences are 50%-100%+ making perf/W/$ very competitive.

https://www.phoronix.com/scan.php?page=article&...

This article also seems to mention SMT might make an appearance in the next Neoverse N* chips: https://www.nextplatform.com/2019/02/28/arm-sharpe...

Wilco1 - Sunday, July 19, 2020 - link

The Phoronix link has various benchmarks that aren't even running identical code between different ISAs (eg. Linux kernel compile). So it's not anywhere near a fair CPU comparison like SPEC. And this: https://openbenchmarking.org/result/1907314-AS-RYZ... shows SMT gives almost no gain on multithreaded benchmarks once you stop cherry picking the good results and ignore the bad ones...Even if we just consider the benchmarks with the largest SMT speedup, Coremark and 7-zip have good SMT gains of 41% and 32%, but m6g *still* outperforms c5a by 5% and 24%.

So the best SMT gain combined with a 32% frequency advantage and 4 times the L3 cache is still not enough to provide equal per-thread performance!