Launching the #CPUOverload Project: Testing Every x86 Desktop Processor since 2010

by Dr. Ian Cutress on July 20, 2020 1:30 PM EST

We want to have every desktop CPU since 2010 tested on our new benchmarks.

Updating our testing suite is all well and good, but in order for users to find the data relevant, it has to span as many processors as possible. Using tools such as Intel's ARK, Wikipedia, CPU-World and others, I have compiled a list of more than 800 x86 processors (actually 900+ when this article goes live) which qualify. At the highest level, I am splitting these into four categories:

- Intel Consumer (Core i-series, HEDT)

- Intel Enterprise (Xeon, Xeon-W, 1P, 2P)

- AMD Consumer (Ryzen, FX, A-Series)

- AMD Enterprise (EPYC, Opteron)

Within both AMD and Intel, the consumer and enterprise arms are discrete business units with their own product teams, and there is rarely any cross-over. The separation is easy to follow: ‘Consumer’ basically stands for mainstream processors aimed at machines a user or an OEM could build with off-the-shelf parts, which typically do not support ECC memory. ‘Enterprise’ refers to processors that might end up in workstations, servers or data centers and that have professional-grade features; most of these parts do support ECC memory.

Three boxes of CPUs. Less than half

The next level of separation for the processors, for our purposes, is going to be under the heading ‘family’. Family is a term that typically groups the processors by the microarchitecture, but could also have separation based on socket or features. For CPU Overload, choosing one high-level category breaks down like this:

- AMD Consumer (360+ processors), inc Pro

  - Ryzen 3000 (Threadripper, Ryzen 9/7/5/3; 7nm Zen2)

  - Ryzen 2000 (Threadripper, Ryzen 7/5/3; 12nm Zen+)

  - Ryzen 1000 (Threadripper, Ryzen 7/5/3; 14nm Zen)

  - Bristol Ridge (A12 to Athlon X4, 28nm AM4-based Excavator v2 APUs)

  - Carrizo (Athlon X4, 28nm FM2+ Excavator)

  - Kaveri Refresh (A10 to Athlon X2, FM2+)

  - Kaveri (A10 to Sempron X2, FM2+)

  - Richland (A10 to Sempron X2, FM2+ Piledriver)

  - Trinity (A10 to Sempron X2, FM2/2+ Piledriver)

  - Llano (A8 to Sempron X2, 32nm FM1 K10)

  - Kabini (AM1, 28nm Jaguar)

  - Vishera (FX-9590 to FX-4300, 32nm AM3+ Piledriver)

  - Zambezi (FX-8100 to FX-4100, 32nm AM3+ Bulldozer)

  - AM3 Phenom II X6 to X4 (K10, Thuban/Zosma/Deneb)

  - (Optional) Other AM3 (K10, Zosma, Deneb, Propus, Heka, etc)

  - (Optional) Other AM2 (Agena, Toliman, Kuma)
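As a rough illustration of the category and family split described above, the hierarchy could be modeled along these lines. This is a minimal sketch only: the class names, fields, and the `find_family` helper are hypothetical and are not taken from the actual Bench database, and only a few families from the list are filled in.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Family:
    """One 'family' entry from the list, e.g. Ryzen 3000 or Vishera (hypothetical model)."""
    name: str
    notes: str = ""  # free-form details such as process node or microarchitecture
    processors: list[str] = field(default_factory=list)  # model names catalogued so far


@dataclass
class Category:
    """One of the four top-level categories, e.g. 'AMD Consumer' (hypothetical model)."""
    name: str
    families: list[Family] = field(default_factory=list)

    def family_names(self) -> list[str]:
        return [family.name for family in self.families]


def find_family(category: Category, model: str) -> Optional[Family]:
    """Return the family that lists the given model name, or None if it is not catalogued."""
    for family in category.families:
        if model in family.processors:
            return family
    return None


# A small excerpt of the AMD Consumer breakdown; the processor lists are deliberately incomplete.
amd_consumer = Category(
    name="AMD Consumer",
    families=[
        Family("Ryzen 3000", "Threadripper, Ryzen 9/7/5/3; 7nm Zen2"),
        Family("Ryzen 1000", "Threadripper, Ryzen 7/5/3; 14nm Zen"),
        Family("Vishera", "32nm Piledriver", processors=["FX-9590", "FX-4300"]),
    ],
)

print(amd_consumer.family_names())
print(find_family(amd_consumer, "FX-9590"))
```

Keeping the processor list per family, rather than one flat list, mirrors how the article groups parts by microarchitecture, socket or features.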

Neither AMD nor Intel provides complete lists of the processors it launched within a certain family. Intel does it best, with its ark.intel.com (known as ARK) platform, but sometimes there are obscure CPUs that do not make the official list due to partnerships or being geo-specific. The only way we end up knowing these obscure CPUs exist is because someone has ended up with the processor in their system and run diagnostic tests. Intel calls these processors ‘off-roadmap’ CPUs, and it only provides information on them through ARK if you already know the exact processor number. Scouring the various resources available online to draw up that picture proved one thing: no list is complete. I doubt the one I have is complete either.

For example, most users believe that the last FX processor made by AMD was the massive 220W FX-9590 for the AM3+ platform. This is not the case.

AMD released two FX CPUs on FM2+, and these were only sold in HP pre-built systems. The two CPUs are the FX-770K and the FX-670K. Typically FX processors are known for being only on the AM3+ platform on the desktop, however AMD and HP struck a deal to give the premium FX name to these other CPUs and they were never launched at retail – in order to get these we found a seller on eBay that had pulled them out of old systems.

In some lists we found online, it was very easy to get mixed up because some companies have not kept their naming consistent. Take the strange case of the Athlon X4 750. Despite its name not having a suffix, it is classified as being in a newer family than the Athlon X4 750K: the X4 750 is Piledriver based, while the X4 750K is Steamroller based.

Then there are region-specific CPUs, like the FX-8330, which was only released in China.

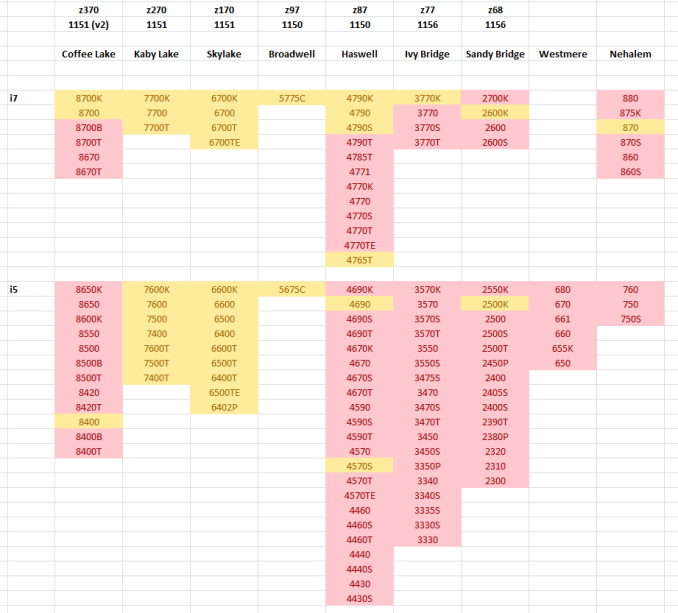

This is the current standing of the 'Intel Consumer' Core i7 and Core i5 processors up to Coffee Lake. Ones marked in yellow are ones that I have immediately available, ready for testing, and ones marked in red are still to be obtained. The common thread is that Intel has supplied all the Core i7-K processors for most generations, and the i5-K processors for some of them. The big blocks of yellow for Kaby Lake and Skylake were also sourced from Intel. The other scattered individual processors are either ones that I have purchased personally for my own stash, or ones that we have obtained via partners, such as the S/T processors when we covered some low-power hardware a few years ago. Needless to say, there are plenty of gaps, especially on the latest (and unannounced) processors, but also going further back, before I was in charge of the CPU reviews.

Some of the feedback we have had on this project is that the database does not technically need every CPU that ever existed to be relevant, and that for some CPUs, reducing the frequency multiplier would make them perform the same as a processor we do not have. For some CPUs that is true, but only as long as the testing does not fall foul of the power states, the turbo states, the points at which turbo frequencies are enabled, and the frequency/voltage curve binning, and only if the cache sizes line up. That has to be judged on a case-by-case basis. For the scope of this project, and for the data to be authentic, one of the rules I am imposing is that the data has to come from testing with the CPU on hand - no synthetic numbers from 'simulated' processors.

Rule: No Synthetic Numbers from Simulated Processors

I mentioned that sourcing is one of the most difficult parts of this project, and the obvious answer to get hardware is to go direct to the manufacturer and request it: both manufacturers end up being big parts of this project regardless of their active participation, but the best scenario is that they should be the hardware source.

As the four areas above (AMD/Intel, Consumer/Enterprise) are for lack of a better description different companies, the press contact points for the consumer and enterprise sides of each company are different. As a result, we have different relationships with each of the four, and one of the interesting barriers to sampling is rebuilding relations when long-term contacts leave. Sometimes this happens for the better (sampling improves), or for the worse (a severe reluctance to offer anything).

Unfortunately, sometimes there is a wall - business unit policies for sampling often mean only one CPU here or there can be offered due to what’s available for media distribution. And if the company, the press contact, or the product manager does not see any value to the business in sampling a component (such as an Intel Pentium or an AMD A9), then it is unlikely we would get that sample from them. Part of the relationship with these companies is demonstrating the value of this data.

Another aspect is not actually having any samples - these are PR teams, not infinitely packed stock rooms. So if the team we are in contact with does not have access to certain parts that we request, such as consumer-grade parts that were built specifically for certain OEMs and are not under the ‘consumer’ PR team’s remit, or even some of the low priority parts in a stack, they can't loan them to us. It sounds somewhat odd that a big company like Intel or AMD wouldn't have access to a part that I'm looking for, but take those HP-only FX CPUs I mentioned earlier – despite them being consumer-grade CPUs, because supplying these parts was a B2B transaction, they would never have passed through the hands of the PR team, and any deal with the OEM may have put reviews of the hardware solely at the discretion of the OEM. As for the region-only parts, only the PR team in that region will have access to them. (I eventually picked up those parts on my own dime on eBay, but this isn’t always possible.)

Nonetheless, we have approached as many people as possible internally at both companies, as well as some OEMs and resellers, with our CPU Overload project idea. Both of the consumer arms of Intel and AMD have already provided a good first-round bounty of the latest hardware, and in most cases complete stacks of the newest generations. The enterprise hardware is a little tricky to get hold of. But many thanks to our Intel and AMD contacts that are already on-board with CPU Overload, as we try to work closer with the other units.

One thing to mention is that the newer the processor, the easier it is to get direct from the manufacturer. Typically these parts are already within their sampling quotas. However, if I go and ask for a Sandy Bridge Core i3-2125 from 2011, a sample to share is unlikely to be at their fingertips - there might be one in the drawer in a lab somewhere, but that is never a guarantee. This is where the project will have to look to private sales, forums, and online auction sites to play a role as we move further into the past. Depending on how the project goes, we may reach out to our readers (either in a project update, or on my twitter @IanCutress) for certain parts to complete the stacks. This has already worked for at least three hard-to-find CPUs, such as the HP FX CPUs (the FX-770K and FX-670K), and the Athlon X4 750 (not 750K), which we picked up from eBay and China respectively.

For the initial few months of the project, we have around 200 CPUs to begin. This breaks down into the following:

| CPU Overload Project Status | CPUs on Hand | Key Notes |
|---|---|---|
| Intel Consumer | 138 / 406 | 29 / 46 : HEDT; 72 / 241 : Core; 37 / 119 : Pentium/Celeron |
| AMD Consumer (Includes Pro) | 137 / 366 | 42 / 105 : AM4 and TR; 43 / 108 : FM2+/FM1/AM1; 52 / 153 : AM3/3+ |
| Intel Enterprise (Xeon E and Xeon W) | 27 / 155 | 75% of E3-1200 v5; 75% of E3-1200 v4; 36% of E3-1200 v3 |
| AMD EPYC | 11 / 40 | Known Socketed EPYC; Lots of unknown in Cloud |
| Others | Opteron 6000: 2 / 121; Xeon-SP 1P; AMD AM2; LGA 1366 | |
| Total (Phase 1) | 313 / 967 | |
| Total | 1088+ | |

For the first phase, we are almost at a good level, having 32.4% of the processors needed (313 of 967). However, the models we do have are fairly localized in the Skylake/Kaby Lake-S sets, Intel's HEDT range, and some of AMD's stack. There is still a good number of interesting segments missing in what we have to hand.
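The Phase 1 coverage can be rechecked directly from the status table. The short sketch below copies the four Phase 1 rows (on hand, total) from the table and recomputes the overall and per-category shares; the dictionary layout and the `coverage` helper are illustrative assumptions, not part of any AnandTech tooling.

```python
# (on hand, total) for each Phase 1 row, copied from the project status table
phase1 = {
    "Intel Consumer": (138, 406),
    "AMD Consumer": (137, 366),
    "Intel Enterprise": (27, 155),
    "AMD EPYC": (11, 40),
}


def coverage(rows):
    """Return (CPUs on hand, CPUs needed, percentage on hand) summed over all rows."""
    on_hand = sum(have for have, _ in rows.values())
    total = sum(need for _, need in rows.values())
    return on_hand, total, 100 * on_hand / total


on_hand, total, pct = coverage(phase1)
print(f"Phase 1: {on_hand} / {total} = {pct:.1f}%")

# Per-category share, to show where the gaps are largest
for name, (have, need) in phase1.items():
    print(f"{name}: {have} / {need} = {100 * have / need:.1f}%")
```

Splitting the figure by row makes it easier to see that the enterprise segments lag well behind the consumer ones.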

The K10, LGA1366 Xeons and the older Opterons are part of the secondary scope of this project. Some of them are easy to obtain with bottomless pockets and a trip to eBay, and others require more research. There is a potential 'Phase 1.5', if we were to go after all of the Xeon E5-1600 and E5-2600 processors as well. Then it becomes an issue of tackling single socket vs dual socket systems, as well as suitable NUMA software.

So out of the two main issues with a project of this size, sourcing and benchmark longevity, we're trying to tackle both in one go - retest everything with a new benchmark suite on the latest stable OS. The only factor left is time - retesting all these CPUs doesn't happen overnight. The first numbers to be tested, however, will be the headline Intel processors back to Sandy Bridge, and the AMD parts back to FX.

110 Comments


ruthan - Monday, July 27, 2020 - link

Well lots of bla, bla, bla.. I checked graphs in archizlr they are classic just few entries.. there is link to your benchmark database, but here i see preselected some Crysis benchmark, which is not part of article.. and dont lead to some ultimate lots of cpus graphs. So it need much more streamlining.

i usually using old Geekbench for cpus tests and there i can compare usually what i want.. well not with real applications and games, but its quick too. Otherwise usually have enough knowledge to know if is some cpu good enough for some games or not.. so i dont need some very old and very need comparisions. Something can be found at Phoronix.

These benchmarks will always lots relevancy with new updates, unless all cpus would in own machines and update and running and reresting constantly - which could be quite waste of power and money.

Maybe some golden path is some simple multithreaded testing utility with 2 benchmarks one for integers and one for floats.

Ian Cutress - Wednesday, August 5, 2020 - link

When you're in Bench, Check the drop down menu on your left for the individual tests

hnlog - Wednesday, July 29, 2020 - link

> For our testing on the 2020 suite, we have secured three RTX 2080 Ti GPUs direct from NVIDIA.

Congrats!

Koenig168 - Saturday, August 1, 2020 - link

It would be more efficient to focus on the more popular CPUs. Some of the less popular SKUs which differ only by clock speed can have their performance extrapolated. Testing 900 CPUs sound nice but quickly hit diminishing returns in terms of usefulness after the first few hundred.

You might also wish to set some minimum performance standards using just a few tests. Any CPU which failed to meet those standards should be marked as "obsolete, upgrade already dude!" and be done with them rather than spend the full 30 to 40 hours testing each of them.

Finally, you need to ask yourself "How often do I wish to redo this project and how much resources will I be able to devote to it?" Bearing in mind that with new drivers, games etc, the database needs to be updated oeriodically to stay relevant. This will provide a realistic estimate of how many CPUs to include in the database.

Meteor2 - Monday, August 3, 2020 - link

I think it's a labour of love...

TrevorX - Thursday, September 3, 2020 - link

My suggestion would be to bench the highest performing Xeons that supported DDR3 RAM. Why? Because the cost of DDR3 RDIMMs is so amazingly cheap (as in, less than 10%) compared with DDR4. I personally have a Xeon E5-1660v2 @4.1GHz with 128GB DDR3 1866MHz RDIMMs that's the most rock stable PC I've ever had. Moving up to a DDR4 system with similar memory capacity would be eye-wateringly expensive. I currently have 466 tabs open in Chrome, Outlook, Photoshop, Word, several Excel spreadsheets, and I'm only using 31.3% of physical RAM. I don't game, so I would be genuinely interested in what actual benefit would be derived from an upgrade to Ryzen / Threadripper.

Also very keen to see server/hypervisor testing of something like Xeon E5-2667v2 vs Xeon W-1270P or Xeon Silver 4215R for evaluation of on-prem virtualisation hosts. A lot of server workloads are being shifted to the cloud for very good reasons, but for smaller businesses it might be difficult to justify the monthly expense of cloud hosting (and Azure licensing) when they still have a perfectly serviceable 5yo server with plenty of legs left on it. It would be great to be able to see what performance and efficiency improvements can be had jumping between generations.

Tilmitt - Thursday, October 8, 2020 - link

When is this going to be done?

Mil0 - Friday, October 16, 2020 - link

Well they launched with 12 results if I count correctly, and currently there are 38 listed, that's close to 10/month. With the goal of 900, that would mean over 7 years (in which ofc more CPUs would be released)

Mil0 - Friday, October 16, 2020 - link

Well they launched with 12 results if I count correctly, and currently there are 44 listed, that's about a dozen a month. With the goal of 900, that would mean 6 years (in which ofc more CPUs would be released)

Mil0 - Friday, October 16, 2020 - link

Caching hid my previous comment from me, so instead of a follow up there are now 2 pretty similar ones. However, in the mean time I found Ian is actually updating on twitter, which you can find here: https://twitter.com/IanCutress/status/131350328982...

He actually did 36 CPU's in 2.5 months, so it should only take 5 years! :D