The Intel Xeon E7-8800 v3 Review: The POWER8 Killer?

by Johan De Gelas on May 8, 2015 8:00 AM EST- Posted in

- CPUs

- IT Computing

- Intel

- Xeon

- Haswell

- Enterprise

- server

- Enterprise CPUs

- POWER

- POWER8

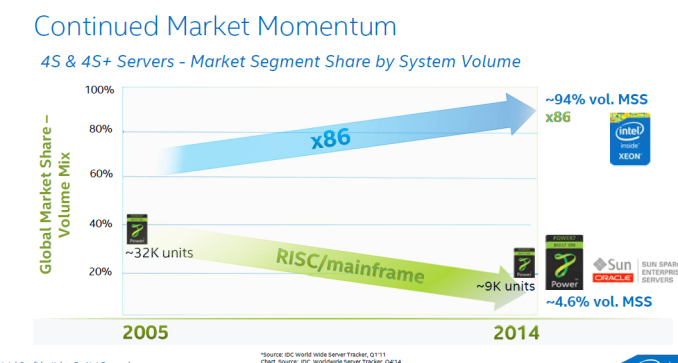

The story behind the high-end Xeon E7 has been an uninterrupted triumphal march for the past 5 years: Intel's most expensive Xeon beats Oracle servers - which cost an order of magnitude more - silly, and offers much better performance per watt/dollar than the massive IBM POWER servers. Each time a new generation of quad/octal socket Xeons is born, Intel increases the core count, RAS features, and performance per core while charging more for the top SKUs. Each time, that price increase is justified, as the total cost of a similar RISC server is a multiple of that of a Xeon E7 server. From the Intel side, this new generation based upon the Haswell core is no different: more cores (18 vs 15), better RAS, slightly more performance per core and ... higher prices.

However, before you close this browser tab, know that even this high-end market is getting (more) exciting. Yes, Intel is correct that the market momentum is still very much in its favor, and thus in favor of x86.

No less than 98% of the server shipments have been "Intel inside". No less than 92-94% of the four socket and higher servers contain Intel Xeons. From the revenue side, the RISC based systems are still good for slightly less than 20% of the $49 Billion (per year) server market*. Oracle still commands about 4% (+/- $2 Billion), but has been in a steady decline. IBM's POWER based servers are good for about 12-15% (including mainframes) or $6-7 Billion depending on who you ask (*).

It is however not game over (yet?) for IBM. The big news of the past months is that IBM has sold its x86 server division to Lenovo. As a result, Big Blue is finally throwing its enormous weight behind the homegrown POWER chips. Instead of a confusing and half-hearted "we will sell you x86 and Itanium too" message, we now get the "time to switch over to OpenPOWER" message. IBM spent $1 billion to encourage ISVs to port x86-linux applications to the Power Linux platform. IBM also opened up its hardware: since late 2013, the OpenPOWER Foundation has been growing quickly, with Wistron (ODM), Tyan and Google building hardware on top of the POWER chips. The OpenPOWER Foundation now has 113 members, and lots of OpenPOWER servers are being designed and built. Timothy Green of the Motley Fool believes OpenPOWER will threaten Intel's server hegemony in the largest server market, China.

But enough of that. This is Anandtech, and here we quantify claims instead of just rambling about changing markets. What has Intel cooked up and how does it stack up to the competition? Let's find out.

(*) Source: IDC Worldwide Quarterly Server Tracker, 2014Q1, May 2014, Vendor Revenue Share

146 Comments

kgardas - Thursday, May 21, 2015 - link

@Kevin G: thanks for the correction about load/store units doing simple integer operations. I agree with your testing that single-threaded POWER8 is not up to the speed of Haswell. In fact my testing shows it's on the same level as POWER7.

So with POWER8 doing 6 integer ops per cycle, it's more powerful than SPARC64 X, which does 4, or the SPARC S3 core, which does just 2. It also explains well the SPEC rate difference between the M10-4 and the POWER8 machine. Good! Things are starting to become clearer now...

patrickjp93 - Saturday, May 16, 2015 - link

No, just no. Intel solved the cluster latency problem long ago with Infiniband revisions. 4 nanoseconds to have a 10-removed node tell the head node something or vice versa, and no one builds a hypercube or star topology that's any worse than 10-removed.

Brutalizer - Sunday, May 17, 2015 - link

@patrickjp93, if Intel solved the cluster latency problem, then why are SGI UV2000 and ScaleMP clusters not used to run monolithic business software? Question: Why are there no SGI UV2000 top records in SAP?

Answer: Because they cannot run monolithic software that branches too much. That is why there are no good x86 benchmarks.

misiu_mp - Tuesday, June 2, 2015 - link

Just to point out, 10ns at the speed of light in vacuum is 3m, and signalling is slower than that because the fibre medium (glass) limits the speed of light to about 60% of c, and on top of that come electronic latencies. So maybe you can get 10ns latency over 1-1.5m maximum. That's not a large cluster.

Kevin G - Monday, May 11, 2015 - link

I am not uninformed. I would say that you're being willfully ignorant. In fact, you ignored my previous links about this very topic when I could actually find examples for you. ( For the curious outsider: http://www.anandtech.com/comments/7757/quad-ivy-br... )

So again I will cite the US Post Office using SGI machines to run Oracle Times Ten databases:

http://www.intelfreepress.com/news/usps-supercompu...

As for the UV 2000 not being a scale up server, did you not watch the videos I posted? You can see Linux tools in the videos clearly that indicate that it was produced on a 64 socket system. If that is not a scale up server, why are the Linux tools reporting it as such? If the UV 2000 series isn't good for SAP, then why is HANA being tuned to run on it by both SGI and SAP?

HP's Superdome X shares a strong relationship with the Itanium based Superdome 2 machine: they use the same chipset to scale past 8 sockets. This is because the recent Itaniums and Xeons both use QPI as an interconnect bus. So if the Superdome X is a cluster, then so are HP's Itanium 2 based offerings using that same chipset. Speaking of, that chipset does go up to 64 sockets and there is the potential to go that far (source: http://www.enterprisetech.com/2014/12/02/hps-itani... ). It won't be a decade if HP already has a working chipset that they've shipped in other machines. :)

Speaking of the Superdome X, it is fast and can outrun the SPARC M10-4S at the 16 socket level by a factor of 2.38. Even with perfect scaling, the SPARC system would need more than 32 sockets to compete. Oh wait, if we go by your claim above that "you double the number of sockets, you will likely gain 20% or so" then the SPARC system would need to scale to 512 sockets to be competitive with the Superdome X. (Source: http://h20195.www2.hp.com/V2/getpdf.aspx/4AA5-6149... )
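(Editor's sketch, not part of the original comment.) The socket arithmetic in the paragraph above can be reproduced in a few lines of Python. The only inputs taken from the comment are the 16-socket baseline, the 2.38x performance gap, and the quoted "20% per doubling" claim; the function and variable names are hypothetical, and the model (throughput multiplies by a fixed factor each time the socket count doubles) is an assumption made purely for illustration.

```python
def doublings_needed(target_ratio, gain_per_doubling):
    """Count how many socket doublings are needed before the cumulative
    speedup reaches target_ratio, assuming each doubling multiplies
    throughput by (1 + gain_per_doubling)."""
    doublings = 0
    speedup = 1.0
    while speedup < target_ratio:
        speedup *= 1.0 + gain_per_doubling
        doublings += 1
    return doublings


BASE_SOCKETS = 16   # socket count of both systems in the cited comparison
GAP = 2.38          # Superdome X advantage quoted in the comment

# Perfect (linear) scaling: sockets needed grow in proportion to the gap.
linear_sockets = BASE_SOCKETS * GAP

# "Double the sockets, gain 20% or so": count doublings, then scale up.
n = doublings_needed(GAP, 0.20)
diminishing_sockets = BASE_SOCKETS * 2 ** n

print(f"linear scaling: about {linear_sockets:.1f} sockets")
print(f"20% per doubling: {n} doublings, {diminishing_sockets} sockets")
```

Under these assumptions, linear scaling lands a little above 32 sockets, while the 20%-per-doubling model needs five doublings from 16 sockets, which is where the 512 figure comes from.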

And if you're dead set on an >8 socket SAP benchmark using x86 processors, here is one, though a bit dated:

https://www.vmware.com/files/pdf/partners/ibm/ibm-...

68k - Tuesday, May 12, 2015 - link

You know, those >8 socket systems are flying off the shelves faster than anyone can produce them. That is why Intel only got, according to the article, 92-94% of the >4-socket market... It seems pretty safe to state that >8 socket is an extreme niche market, which is probably why it is hard to find any benchmarks on such systems.

The price point of really big scaled-up servers is also an extreme incentive to think very hard about how one can design software to not require a single system. Some problems absolutely need scale-up; as you pointed out, there are such x86 systems available and have been for quite some time.

Does anyone know what the ratio between 2-socket servers and >4-socket servers looks like in terms of market share (number of deployed systems)?

68k - Tuesday, May 12, 2015 - link

No edit... Pretend that '>' means "greater or equal" in the post above.

Arkive - Tuesday, May 12, 2015 - link

You guys are obviously not idiots, just enormously stubborn. Why don't you take the wealth of time you spend fighting on the internet and do something productive instead?

kgardas - Wednesday, May 13, 2015 - link

Kevin, I'll not argue with you about SGI UV. It's in fact a very nice machine and it looks like it is on par with the Sun/Oracle Bixby interconnect in latency. Anyway, what I would like to note concerns your Superdome X comparison to SPARC M10-4S. Unfortunately, IMHO this is purely a CPU benchmark. It's multi-JVM, so if you use one JVM per processor, pin that JVM to this processor and limit its memory to the (max) size of memory available to the processor, then you basically have a kind of scale-out cluster inside one machine. This is IMHO what they are benchmarking. It just shows that the current SPARC64 is really not up to the performance level of the latest Xeon. Pity, but it is a fact. Anyway, my point is that for a memory scalability benchmark you should use something different than a multi-JVM bench. I would vote for STREAM here; although it's still bandwidth oriented, it provides at least some picture: https://www.cs.virginia.edu/stream/top20/Bandwidth... -- no Superdome there, and HP submitted some Superdome results in the past. Perhaps it's not the memory scalability hero these days?

ats - Tuesday, May 12, 2015 - link

So that must be why SAP did this for SGI: https://www.sgi.com/company_info/newsroom/awards/s...

You don't normally recognize partners for innovation for your products unless they are filling a need. AKA SGI actually sells their UV300H appliance.

And SAP HANA, like ALL DBs, can be used either SSI or clustered.

And the SAP SD 2-Tier benchmark is not at all monolithic. The whole 2-tier thing should have been a hint. And SAP SD 3-Tier is also not monolithic.

Scaling for x86 is neither easier nor harder than for any other architecture for large scale coherent systems. If you think it is, it's because you don't know jack. I've designed CPUs/Systems that can scale up to 256 CPUs in a coherent image, fyi. Also it should probably be noted that the large scale systems are pretty much never used as monolithic systems, and almost always used via partitioning or VMs.