AMD Lays Out Future of Mantle: Changing Direction In Face of DX12 and glNext

by Ryan Smith on March 2, 2015 10:45 PM EST

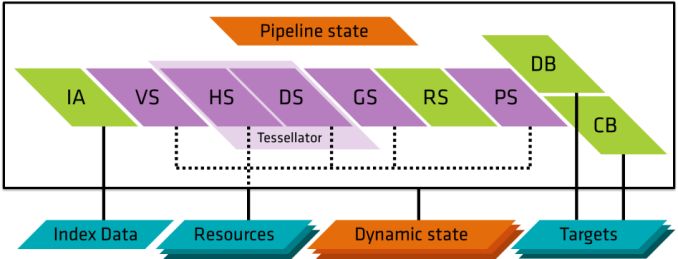

Much has been made over the advent of low-level graphics APIs over the last year, with APIs based on this concept having sprouted up on a number of platforms in a very short period of time. For game developers this has changed the API landscape dramatically in the last couple of years, and it’s no surprise that as a result API news has been centered on the annual Game Developers Conference. With the 2015 conference taking place this week, we’re going to hear a lot more about it in the run-up to the release of DirectX 12 and other APIs.

Kicking things off this week is AMD, who is going first with an update on Mantle, their in-house low-level API. The first announced of the low-level APIs and so far limited to AMD’s GCN architecture, there has been quite a bit of pondering over the future of the API in light of the more recent developments of DirectX 12 and glNext. AMD in turn is seeking to answer these questions first, before Microsoft and Khronos take the stage later this week for their own announcements.

In a news post on AMD’s gaming website, AMD has announced that due to the progress on DX12 and glNext, the company is changing direction on the API. The API will be sticking around, but AMD’s earlier plans have partially changed. As originally planned, AMD is transitioning Mantle application development from a closed beta to a (quasi) released product – via the release of a programming guide and API reference this month – however AMD’s broader plans to also release a Mantle SDK to allow full access, particularly allowing it to be implemented on other hardware, have been shelved. In place of that AMD is refocusing Mantle on being a “graphics innovation platform” to develop new technologies.

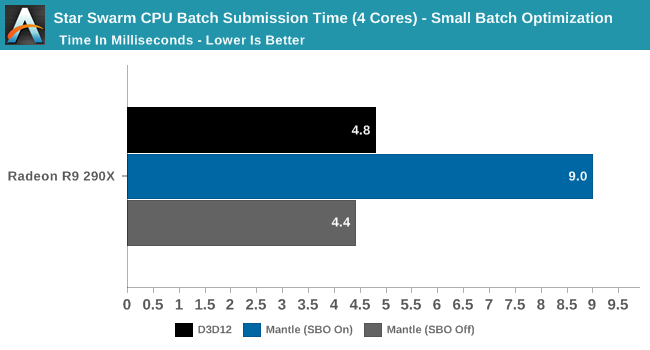

As far as “Mantle 1.0” is concerned, AMD is acknowledging at this point that Mantle’s greatest benefit – reduced CPU usage due to low-level command buffer submission – is something that DX12 and glNext can do just as well, negating the need for Mantle in this context. For AMD this is still something of a win because it has led to Microsoft and Khronos implementing the core ideas of Mantle in the first place, but it also means that Mantle would be relegated to a third wheel. As a result AMD is shifting focus, and advising developers looking to tap Mantle for its draw call benefits (and other features also found in DX12/glNext) to just use those forthcoming APIs instead.

Mantle’s new focus in turn is going to be a testbed for future graphics API development. Along with releasing the specifications for “Mantle 1.0”, AMD will essentially keep the closed beta program open for the continued development of Mantle, building it in conjunction with a limited number of partners in a fashion similar to how Mantle has been developed so far.

The biggest change here is that any plans to make Mantle open have been put on hold for the moment with the cancelation of the Mantle SDK. With Mantle going back into development and made redundant by DX12/glNext, AMD has canned what was from the start the hardest to develop/least likely to occur API feature, keeping it proprietary (at least for now) for future development. Which is not to say that AMD has given up on their “open” ideals entirely though, as the company is promising to deliver more information on their long-term plans for the API on the 5th, including their future plans for openness.

As for what happens from here, we will have to see what AMD announces later this week. AMD’s announcement is essentially in two parts: today’s disclosure on the status of Mantle, and a further announcement on the 5th. It’s quite likely that AMD already has their future Mantle features in mind, and will want to discuss those after the DX12 and glNext disclosures.

Finally, from a consumer perspective Mantle won’t be going anywhere. Mantle remains in AMD’s drivers and Mantle applications continue to work, and for that matter there are still more Mantle enabled games to come (pretty much anything Frostbite, for a start). How many more games beyond 2015 though – basically anything post-DX12 – remains to be seen, as developers capable of targeting Mantle will almost certainly want to target DX12 as well as soon as it’s ready.

Update 03/03: To add some further context to AMD's announcement, we have the announcement of Vulkan (aka glNext). In short Mantle is being used as a building block for Vulkan, making Vulkan a derivative of Mantle. So although Mantle proper goes back under wraps at AMD, "Mantle 1.0" continues on in an evolved form as Vulkan.

Source: AMD

94 Comments


Reflex - Tuesday, March 3, 2015 - link

Between 1999 and 2001 I worked as a low level kernel engineer for Microsoft. I worked on RDRAM based systems from the time they were prototypes through commercial availability. If there was one thing RDRAM was not, it was "awesome". It had severe limitations and drawbacks. A quick list:

- High heat that was not evenly dissipated due to the serial nature of the chips

- Latency that increased the more memory you had resulting in incredibly inconsistent performance (boot times for Windows, a basic process that should be repeatable consistently clean boot to clean boot would vary by as much as 300% depending on what was loaded where in memory)

- Extremely poor scaling making it completely unsuitable for large memory applications

- Very poor random access performance

And on and on and on. RDRAM works very well in applications where you can plan for it and put your data in specific locations both to balance heat issues and to control for latency problems (ie: actively manage your memory to put less used data in higher latency addresses). It can be fantastic for streaming applications, such as a lot of multimedia production work, where random access is not a common task vs raw bandwidth (one place where RDRAM had an advantage). But for general purpose PC's or servers, RDRAM was a terrible choice that did not make sense from day one.

I dislike the historical revisionism I see in some quarters around RDRAM. The decisions Intel made around the technology were a complete fiasco, and the Intel engineers I worked with knew and lamented it.

Samus - Tuesday, March 3, 2015 - link

FIT, RDRAM was a solution looking for a problem. It worked better in low-memory embedded systems because it was able to flush and rewrite fast. Inevitably I agree, the high cost killed RAMBUS's business, but even if it was price-competitive with DDR it would have never become mainstream in anything but where you find it now; embedded applications like game consoles and network routers. Even the latest RAMBUS (XDR2) runs too hot for use in even large notebooks and graphics cards.

Long story short, RAMBUS was fitting to the P4; both used very inefficient technology, and Netburst was in fact designed around the quad datarate of RDRAM (which is why it performed so poorly with SDR and even DDR until the memory controller was optimized.) A match made in hell.

CPUGPUGURU - Tuesday, March 3, 2015 - link

RDRAM was expensive and had high latency, I shorted RAMBUSTED from triple digits to the price a sub sandwich, that set me up financially for years. Ripped Intel a new ahole for getting in bed with RAMBUSTED, but I still didn't buy anything AMD. AMD won a few benchmarks and lost a few, it was never a slam dunk for AMD and I still trusted Intel more. I bought every CPU Intel made from 286 up, my timing upgrade cycle never pointed to a AMD alternative, it would of taken a all out performance win for me to switch camps and that didn't happen even with Intel riding expensive high latency RAMBUS.

cwolf78 - Wednesday, March 4, 2015 - link

LOL get a load of this guy. It must be nice being delusional.

dooki - Thursday, April 30, 2015 - link

in his words he is a intel pumping fool tool.

dooki - Thursday, April 30, 2015 - link

plus that company tried to sue just about every other competitor in the DDR market in order to have a monopoly with their already out of this world pricing.

They were the "GMO Monsanto" of their day

Dribble - Tuesday, March 3, 2015 - link

They never forced the issue, DX12 development is ahead of mantle - it's actually going to get released and work properly. AMD just saw what was coming and released an AMD only alpha of DX12 called Mantle, paid off some games companies to use it, hinted that AMD's console win would make Mantle much better then anything the opposition would do. Talked a lot of rubbish about openness they never had any plans to follow through with.

It ends up being empty marketing - if you bought a card for Mantle support then you were conned. Not quite sure why Ryan is being so nice to AMD here - they shouldn't be praised for empty marketing, they should be castigated for it. Praise them for real solutions (e.g. AMD eyeinfinity), but burn them for empty marketing campaigns.

HalloweenJack - Tuesday, March 3, 2015 - link

BF4 with mantle is over a year old , DA:I makes the game playable on higher settings with mantle on lower end machines - NFS rivals is another to benefit... as do cryengine games.

DX12 games wont be about till 2016 at the earliest. Please stop making up rubbish `Dribble`

CiccioB - Tuesday, March 3, 2015 - link

Releasing alpha versions of a library doesn't make them better than not available libraries still in development.

When DX12 will be released it will be finished and with driver capable of making it run well in a couple of months. And DX12 engine could be in creation today. You don't know.

Mantle is still not fully useable after a year. And it will soon be forgotten by AMD as soon as a new GCN architecture revision comes into play. It is a dead library. In fact it was dead at its launch.

Samus - Tuesday, March 3, 2015 - link

I think what he meant to say is "AMD: mission accomplished, time to move on"

AMD never wanted to support their own API. I remember in 2003 getting two huge programming books from AMD regarding AMD64 design (one was for the hardware level, one was for the programming level) and just thinking "man this must be costing them a fortune to support."

AMD isn't in a financial situation to push Mantle and they knew it from day 1. BUT they know that optimizing rendering closer to the hardware level will benefit them more than competing architectures because GCN on average still has 1/3 more physical cores than Maxwell for comparable performance.

It isn't relevant how powerful the cores are (Maxwell's are obviously more powerful) but how busy the cores are and how efficient they are running. Maxwell's are already pretty efficient, AMD's are not. A better API can even this out in AMD's favor and it's less expensive to push an API then a whole new architecture, especially when GCN isn't that bad. Nothing AMD has is bad, per se, it just isn't optimized for (especially CPU's.)

The real boggle is why AMD didn't go after the CUDA market, a market that is actually making nVidia decent money. The premium of Quadro parts and their performance over FireGL because of application optimizations, in addition to the sheer number of sales in the high performance computing space, are just icing on the cake :\