Microsoft Announces DirectX 12: Low Level Graphics Programming Comes To DirectX

by Ryan Smith on March 24, 2014 8:00 AM EST

With GDC 2014 having drawn to a close, we have finally seen what is easily the most exciting piece of news for PC gamers. As previously teased, Microsoft took to the stage last week to announce the next iteration of DirectX: DirectX 12. And as hinted at by the session description, Microsoft’s session was all about bringing low level graphics programming to Direct3D.

As is often the case for these early announcements, Microsoft has been careful not to release too many technical details at once. But from their presentation and the smaller press releases put together by their GPU partners, we’ve been given our first glimpse at Microsoft’s plans for low level programming in Direct3D.

Preface: Why Low Level Programming?

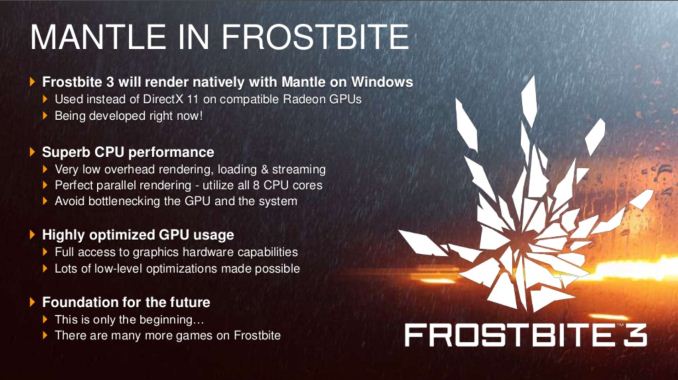

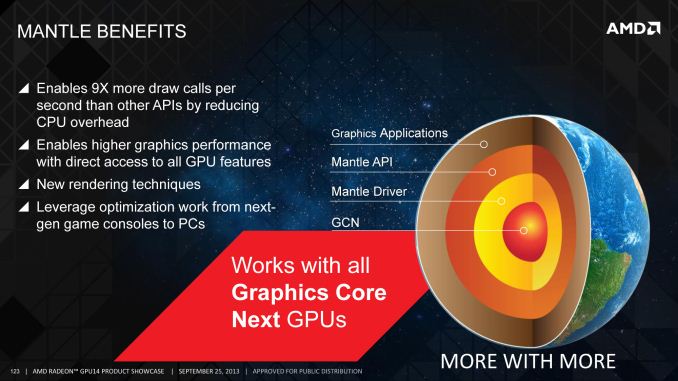

The subject of low level graphics programming has become a very hot topic very quickly in the PC graphics industry. In the last six months we’ve gone from low level programming being a backburner subject, to being a major public initiative for AMD, to now being a major initiative for the PC gaming industry as a whole through Direct3D 12. The sudden surge in interest and development isn’t an accident – this is a subject that has been brewing for years – but it’s within the last couple of years that all of the pieces have finally come together.

But why are we seeing so much interest in low level graphics programming on the PC? The short answer is performance, and more specifically the performance that can be gained by returning to low level programming.

Something worth pointing out right away is that low level programming is not new or even all that uncommon. Most high performance console games are written in such a manner, thanks to the fact that consoles are fixed platforms and therefore readily allow this style of programming. By working with hardware at such a low level, programmers are able to tease a great deal of performance out of that hardware, which is why console games look and perform as well as they do given the consoles’ underpowered specifications relative to the PC hardware from which they’re derived.

The same cannot be said for PCs, however. PCs, being a flexible platform, have long worked off of high level APIs such as Direct3D and OpenGL. Through the powerful abstraction provided by these high level APIs, PCs have been able to support a wide variety of hardware, and to do so over a much longer span of time. With low level PC graphics programming having essentially died along with DOS and vendor specific APIs, PCs have traded some performance for the convenience and flexibility that abstraction offers.

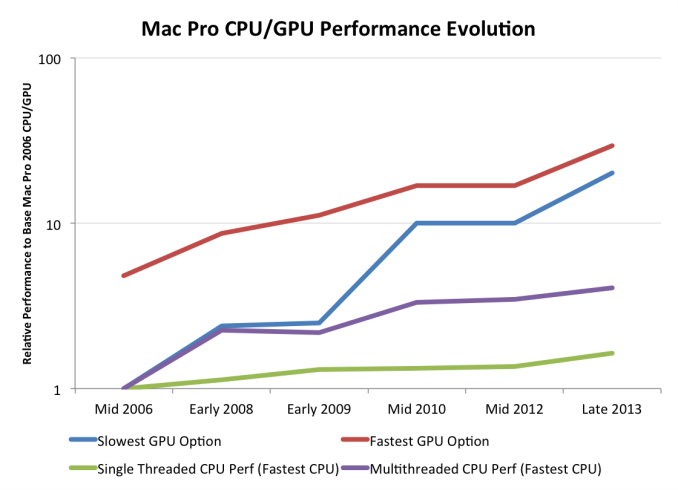

The nature of that performance tradeoff has shifted over the years though, requiring that it be reevaluated. As we’ve covered in great detail in our look at AMD’s Mantle, these tradeoffs were established at a time when CPUs and GPUs were growing in performance by leaps and bounds year after year. But in the last decade or so that has changed – CPUs are no longer rapidly increasing in performance, especially in the case of single-threaded performance. CPU clockspeeds have reached a point where higher clockspeeds are increasingly power-expensive, and the “low hanging fruit” for improving CPU IPC has long been exhausted. Meanwhile GPUs have roughly continued their incredible pace of growth, owing to the embarrassingly parallel nature of graphics rendering.

The result is that GPU performance growth has greatly outstripped CPU performance growth, particularly single threaded CPU performance. This in and of itself isn’t necessarily a problem, but it does become one when coupled with the high level APIs used for PC graphics. The bulk of the work these APIs do in preparing data for GPUs is single threaded by its very nature, so the slowdown in CPU performance gains creates a bottleneck. And because this gap keeps widening, the potential for bottlenecking keeps growing with it; the price of abstraction is the CPU performance required to provide it.
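As a rough illustration of why this gap matters, consider a simplified model in the spirit of Amdahl's law (our own framing, not something from Microsoft's presentation). Split the CPU time needed to prepare a frame into a serial part $T_s$ that must run on one thread and a parallelizable part $T_p$ that can be spread over $N$ cores:

$$T_{\text{CPU}}(N) = T_s + \frac{T_p}{N}, \qquad \lim_{N \to \infty} T_{\text{CPU}}(N) = T_s$$

No matter how many cores are added, CPU frame time can't drop below $T_s$, so if $T_s$ alone exceeds the GPU's frame time, the game is CPU-bound and a faster GPU doesn't help. With purely hypothetical numbers, a frame with $T_s = 12$ ms of single threaded API work and $T_p = 8$ ms of parallel work needs $12 + 8/4 = 14$ ms on four cores, while moving most of that API work off the master thread ($T_s = 2$ ms, $T_p = 18$ ms) brings the same 20 ms of total work down to $2 + 18/4 = 6.5$ ms.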

Low level programming, in contrast, is more resistant to this type of bottlenecking. There is still the need for a “master” thread and hence the possibility of bottlenecking on that master, but low level programming styles have no need for a CPU-intensive API and runtime to prepare data for GPUs. This makes it much easier to farm out work to multiple CPU cores, protecting against this bottlenecking. To use consoles as an example once again, this is why they are capable of so much with such (relatively) weak CPUs, as they’re better able to utilize their multiple CPU cores than a PC running through a high level API can.
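The split described above, with many threads preparing work and a single master thread submitting it, can be sketched in a few lines of Python. This is a hypothetical illustration, not Direct3D 12 code: `CommandList`, `record_chunk` and `build_frame` are made-up names, and "commands" are just strings. Each worker records its own slice of the scene with no shared state, and the master thread then submits the finished lists in a fixed order, which is the one serial step left. Note that CPython's global interpreter lock keeps these threads from actually executing the Python code in parallel, so the sketch shows the structure rather than the speedup.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field


@dataclass
class CommandList:
    """Hypothetical stand-in for a batch of recorded GPU commands (not a D3D12 type)."""
    chunk_id: int
    commands: list = field(default_factory=list)


def record_chunk(chunk_id, objects):
    """Worker: translate one slice of the scene into commands.

    Touches no shared state, so no locking is needed while recording.
    """
    cl = CommandList(chunk_id)
    for obj in objects:
        cl.commands.append(f"draw {obj}")
    return cl


def build_frame(scene, workers=4):
    """Record the scene on several threads, then submit from the master thread.

    Assumes workers >= 1.
    """
    if not scene:
        return []
    size = -(-len(scene) // workers)  # ceiling division: objects per worker
    chunks = [scene[i:i + size] for i in range(0, len(scene), size)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map yields results in input order, whatever order threads finish in
        lists = list(pool.map(record_chunk, range(len(chunks)), chunks))
    # Master thread: the only serial step, appending each list in a fixed order
    submitted = []
    for cl in lists:
        submitted.extend(cl.commands)
    return submitted


if __name__ == "__main__":
    frame = build_frame([f"mesh{i}" for i in range(10)], workers=3)
    print(len(frame), frame[0], frame[-1])  # prints: 10 draw mesh0 draw mesh9
```

Submission order is decided by the master thread rather than by whichever worker finishes first, which keeps the final command stream deterministic even though recording is spread across threads.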

The end result is that it is time to seriously reevaluate the place of low level graphics programming in the PC space. Game developers and GPU vendors alike want better performance. Meanwhile, though it’s a bit cynical, there’s a very real threat posed by the latest crop of consoles, putting PC gaming in a tight spot where it needs to adapt to keep pace with them. PCs still hold a massive lead in single-threaded CPU performance, but given the limits discussed earlier, too much bottlenecking can leave the PC as the slower platform despite its significant hardware advantage. A PC platform that can process fewer draw calls than a $400 game console is a poor outcome for the industry as a whole.

105 Comments

Lerianis - Monday, March 31, 2014

Ah, but the PC with similar hardware will usually run it at a higher resolution and with more graphical candy turned on than the console does. That is the thing a lot of people forget to mention: the settings for the game in question are not always the same. I.e., the PC has AA and other settings turned on by default that make the game run 'slower'. Once you hash out what settings are not used on a console and turn those off? The numbers get a hell of a lot closer together.

inighthawki - Monday, March 24, 2014

"Unfortunately, off-the-shelf game engines - particularly graphics - have remained steadfastly single-threaded, and that's not something DirectX or Mantle will be able to change."

I guess you missed the giant part of the DX12 talk where they focused heavily on ease and performance of multithreading for graphics and actually came up with a nearly linearly scaling solution.

tipoo - Monday, March 24, 2014

Really wondering about Mantle's fate after this hits. They have a time advantage, but DirectX/Direct3D being the Windows standard that it is will be hard to compete with, particularly if the performance improvements are similar (let alone if DX12 is better).

Perhaps AMD should consider bringing Mantle to Linux.

I wonder if the consoles being AMD based will be an advantage to them too, though Microsoft has the XBO also using DX12...PS4 porting may be easier to Mantle, while XBO porting is easier to DX12 perhaps?

ninjaquick - Monday, March 24, 2014

Mantle is a much broader low-level access API. D3D12 is limited by the scope of support, which is basically all driver level. They are putting in as much as they can, while having intel/amd/nvidia/imagination/ti/arm/samsung/etc. all onboard for rapid implementation. Building new drivers for this, based on the MS spec, means they are less flexible than AMD with their own spec built around their hardware and driver platform.

D3D12 will possibly be widely implemented, but that won't stop excitable rendering engineers and architects from trying out Mantle anyway. I would be very surprised if any of the 'big rendering' players decide to completely forgo D3D12 or Mantle. The chance to really push the boundaries as far as possible is far too tempting. Heck, low level programmability might even result in hybridization between D3D12 and Mantle, where possible.

Dribble - Tuesday, March 25, 2014

The time advantage isn't that great - Mantle is released beta software, and by the time they actually finish it DX12 will be just around the corner, and that will come out fully productized and ready to go. Given that only a few % of users can use Mantle, unlike DX where in the end everyone will be able to use it, no dev is going to bother investing time in it. Also, they have no guarantees AMD will ever finish it off properly or continue to support it for future generations of cards - AMD have never been great at software, unlike MS.

jabber - Monday, March 24, 2014

I wonder also if a lot of this is coming out of all the furious work that I bet is going on to try to bridge the performance gap between the Xbox One and the PS4.

ninjaquick - Monday, March 24, 2014

Actually, it is because developers have been complaining about the massive step backwards Xbone programmability took in comparison to the X360.

Like, not only is there a raw performance gap between the PS4 and X1, but there is a software-API performance black hole on the X1 called the WindowsRT/x64 hybrid, with straight PC DX11.1.

ninjaquick - Monday, March 24, 2014

D3D11.1**

Scali - Tuesday, March 25, 2014

The Xbox One has its own D3D11.x API, which is D3D11-ish with special low-level features.

The games I've seen on Xbox One and PS4 so far only seem to suffer from fillrate problems on the Xbox, which means slightly lower resolutions and upscaling. In terms of detail, AI, physics and other CPU-related things, games appear to be identical on both platforms.

et20 - Monday, March 24, 2014

Don't forget Mantle is also cross-platform; it just crosses different platforms than Direct3D does.