The Intel Lakefield Deep Dive: Everything To Know About the First x86 Hybrid CPU

by Dr. Ian Cutress on July 2, 2020 9:00 AM EST

For the past eighteen months, Intel has paraded its new ‘Lakefield’ processor design around the press and the public as a paragon of new processor innovation. Inside, Intel pairs one of its fast peak performance cores with four of its lower power efficient cores, and uses novel technology in order to build the processor in the smallest footprint it can. The new Lakefield design is a sign that Intel is looking into new processor paradigms, such as hybrid processors with different types of cores, but also different stacking and packaging technologies to help drive the next wave of computing. With this article, we will tell you all you need to know about Lakefield.

Part Smartphone, Part PC

When designing a processor, there are over a thousand design choices to be made. The processor can be built to tackle everything, or it can be aimed at a niche. For high performance computing, there might be a need for a high power, high performance design where cooling is of no consideration – compare that to a processor aimed at a portable device, which needs to be energy efficient and offer considerable battery life for a fixed battery size. There is also the cost of designing the product, how much to invest into research and development, how many units are expected to sell, and thus how many should be produced and what size the product should be. The price range of the target market can also be a huge factor, even before putting pen to paper.

This is all why we have big multi-core processors with lots of compute acceleration in servers, more moderate power and core counts in home machines that focus on single core performance and user experience, and why smartphone processors have to physically fit into a small design and offer exceptional battery life.

Laptop processors have always sort of fit into the middle of the PC and smartphone markets. Laptop users, especially professionals and gamers, need the high performance that a desktop platform can provide, but road warriors need something that is superbly efficient in power consumption, especially at idle, to provide all-day battery life as if they were on a good smartphone. Not only this, but the more energy efficient and the smaller the footprint of the processor and its features, the thinner and lighter the laptop can be, offering a premium design experience.

As a result, we have seen the ultra-premium notebook market converge from two directions.

From the top, we have AMD and Intel, using their laptop processor designs in smaller and smaller power envelopes to offer thin and light devices with exceptional performance and yet retain the energy efficiency required for battery life. For the most premium designs, we see 12-15+ hours of laptop battery life, as well as very capable gaming.

From the bottom, we have Qualcomm, building out its high-performance smartphone processor line into larger power envelopes, in order to offer desktop-class performance with smartphone-class connectivity and battery life. With the designs using Qualcomm’s processors, a user can very easily expect 24+ hours of battery life, and with regular office use, only charge the system once every couple of days. Qualcomm still has an additional barrier in software, which it is working towards.

Both of these directions converge on something in the middle – something that can offer desktop-class performance, 24hr+ battery life, and capable gaming, but that also has a full range of software support. Rather than continue trying to bring its processors down to the level it requires, Intel has decided to flip its traditional processor paradigm upside down, and build a smartphone-class processor for this market, matching Qualcomm in its bottom-up approach while also looking into novel manufacturing techniques in order to do so.

This processor design is called ‘Lakefield’.

Lakefield at the Core, and the Atom

For the past two decades, Intel has had two different types of x86 CPU design.

The Big ‘Core’ CPU

Intel calls its high power/high performance x86 design the ‘Core’ family. This can make it very confusing to differentiate between the general concept of a processor core and a ‘Core’-based processor core.

Over the years, Core-based processor cores have been designed for power envelopes from low-power laptops all the way up to the beefiest of servers. The Core line of processor cores implements more complex logic in order to provide additional acceleration, at the expense of physical size and power.

The Small ‘Atom’ CPU

The second type of x86 design from Intel is its more energy efficient implementation, called ‘Atom’. With the Atom cores, Intel simplifies the design in order to maximise efficiency for a given power or a given performance. This makes the design smaller and cheaper to manufacture, but it has a lower peak performance than the Core design. We typically see Atom designs in power restricted scenarios where performance is not critical, such as IoT, or low cost laptop designs.

Where Core Meets Atom

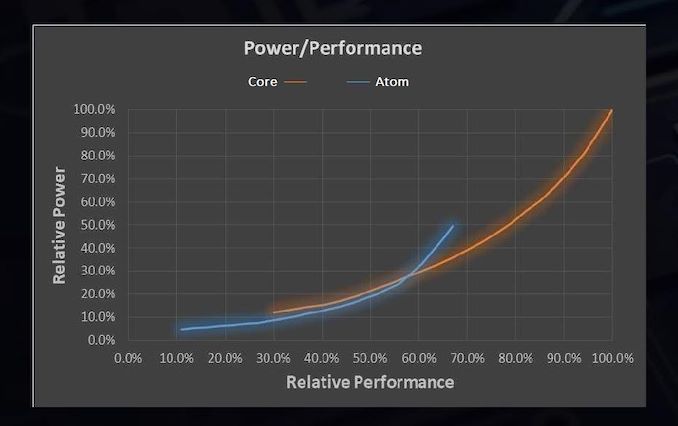

Normally we characterise a processor core design in terms of its power and performance. Because the designs differ, each works best at different points for a given power or a given performance. In the case of Intel’s latest generation of Core and Atom hardware, it looks something like the graph above, if we compare one thread against one thread.

From this graph, which measures performance on the bottom axis and power on the side axis, there is a crossover point that shows where each design makes the most sense. When the demand for performance is below 58%, the Atom design is the most power efficient, but above 58% a Core design is preferred.
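To make that crossover concrete, here is a minimal Python sketch of the decision the graph implies. The 58% figure comes from the graph; the function name `preferred_core`, the 0-to-1 demand scale, and the choice to hand an exact tie to the Core design are our own assumptions for illustration, not anything Intel has disclosed.

```python
# Crossover point read from the graph: below it Atom is more power
# efficient, above it Core is preferred.
CROSSOVER = 0.58


def preferred_core(demand: float) -> str:
    """Return which design is more power efficient for a performance demand.

    `demand` is a fraction of peak single-thread performance (0.0 to 1.0).
    Handing an exact tie at the crossover to Core is an assumption.
    """
    if not 0.0 <= demand <= 1.0:
        raise ValueError("demand must be between 0.0 and 1.0")
    return "Atom" if demand < CROSSOVER else "Core"


light_load = preferred_core(0.30)   # a background-style demand
heavy_load = preferred_core(0.90)   # a demand near peak performance
```

In this sketch a light 30% demand lands on the Atom design, while a 90% demand lands on the Core design.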

Homogeneous CPUs (all the same) vs Heterogeneous CPUs (mix of different)

Now in modern processors, especially in laptops, desktops, and servers, we only experience one type of core design. We either have all Core or all Atom, and the performance is designed to scale within those homogeneous designs. It becomes a simple curve to navigate, and when more parallel performance is required, more of those types of cores are fired up to serve the needs of the end user. This has been the case for these markets for the last 30-50 years.

The smartphone space, for the last decade, has been taking a different approach. Within the smartphone world, there are core designs listed as ‘big’ and core designs listed as ‘little’, in the same way that Intel has Core and Atom designs.

These smartphone processors combine a number of big cores with a number of small cores, such that there is an intrinsic benefit to running background tasks on the little cores, where efficiency is important, and user experience related elements on the big cores, where latency and performance are important.

The complexity of such a heterogeneous smartphone-like design has many layers. By default most items will start on the little cores, and it is up to either the processor or the operating system to identify when a user experience moment needs the higher performance mode. This can be tricky to identify.

Then there is the matter of when a workload actually has to move from one type of core to the other, typically in response to a request for a specific level of performance – if the cores are designed significantly differently, then the demands on memory are likely to increase, and it is up to the operating system to ensure everything works as it should. There is also an additional element of security, which is a larger topic outside of the scope of this article.

Ultimately building a design with both big cores and little cores comes down a lot to what we call the scheduler. This is a program inside the operating system that manages where different background processes, user experience events, or things like video editing and games, get arranged. The smartphone market has been working on different types of schedulers, and optimizing the designs, for over a decade as mentioned. For the land of Intel and AMD, the push for heterogeneous schedulers has been a slow process by comparison, and it becomes very much a chicken and egg problem – there is no need for an optimized heterogeneous scheduler if there is never a heterogeneous processor in the market.
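As a rough illustration of what such a scheduler has to decide, the toy Python sketch below places tasks on a chip with one big core and four small cores: work starts on the least-loaded small core unless it is flagged as a user experience event, in which case it goes to the big core. The `Task` and `HybridCpu` types, the `interactive` flag, and the least-loaded rule are all hypothetical simplifications of ours; real schedulers in any operating system are far more involved.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Task:
    name: str
    interactive: bool = False  # hypothetical flag for user experience events


@dataclass
class HybridCpu:
    # One run queue for the single big core, four for the small cores.
    big_queue: List[str] = field(default_factory=list)
    small_queues: List[List[str]] = field(
        default_factory=lambda: [[] for _ in range(4)]
    )


def place(cpu: HybridCpu, task: Task) -> str:
    """Pick a core for the task and return a label for where it landed."""
    if task.interactive:
        cpu.big_queue.append(task.name)
        return "big"
    # Background work goes to the small core with the shortest queue
    # (ties resolve to the lowest-numbered core).
    index = min(range(len(cpu.small_queues)),
                key=lambda i: len(cpu.small_queues[i]))
    cpu.small_queues[index].append(task.name)
    return f"small{index}"


cpu = HybridCpu()
tasks = [Task("mail sync"), Task("indexer"), Task("scroll", interactive=True)]
placements = [place(cpu, task) for task in tasks]
```

Here the two background tasks spread across the first two small cores, and the scroll event goes to the big core. The hard part that this sketch skips entirely is deciding, at runtime, which work deserves that `interactive` label.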

So why bring all this up?

Lakefield is the first x86 heterogeneous processor.

In its marketing, Intel calls this a ‘hybrid’ CPU, and we will start to see logos identifying this as such. At the heart of its design, Lakefield combines one of the big Core designs with a cluster of four smaller Atom designs, all into one single piece of silicon. In normal x86 processor talk, this is essentially a ‘penta-core’ design, which will commonly be referred to as a 1+4 implementation (for one big core and four small cores).

Intel’s goal with Lakefield is to combine the benefits of the power efficient Atom core with the better user-experience elements provided by the more power hungry but better peak performing big Core. As a result, it sits in the middle of Intel’s traditional homogeneous designs which only contain one type of x86 design – somewhere above the ‘all Atom’ 0+4 design and somewhere below the ‘all Core’ 4+0 design (in actual fact, it’s closer to 0+4).

Based on our conversations with Intel, and the small demonstrations we have seen so far, the best way to consider the new Lakefield processor is to consider it similar to one of the older quad-core Atom processors, with the benefits of the single core performance of a big Core. The cluster of four smaller Atom CPUs will take care of the heavy lifting and parallel performance requests, because there are four of them, while the big Core will respond when the user loads an application, or touches the screen, or scrolls a web browser.

Being a new form of x86 hybrid CPU is not the only thing that Lakefield brings to the table.

Now, just for some form of clarification, we have already had some experience with these sorts of hybrid CPU designs on operating systems like Windows. Qualcomm’s Windows on Snapdragon laptops, like the Lenovo Yoga, use a 4+4 design with the Snapdragon smartphone chips, and Qualcomm has had to work extensively with Microsoft to develop an appropriate scheduler that can manage workloads between the different CPU designs.

The main difference between what Qualcomm has done and what Intel is doing with Lakefield is in software support – Qualcomm processors run ‘Arm’ instructions, while Intel processors run ‘x86’ instructions. Most Windows software is built for x86 instructions, which has limited Qualcomm’s effectiveness in penetrating the traditional laptop market. Qualcomm's design actually allows for ‘x86 translation’; however, its scope is limited and there is a performance penalty, though it is a work in progress. The point is that while we have not had a hybrid CPU scheduler for Windows on an x86 system previously, there has been a lot of work put in by Microsoft to date while working with Qualcomm.

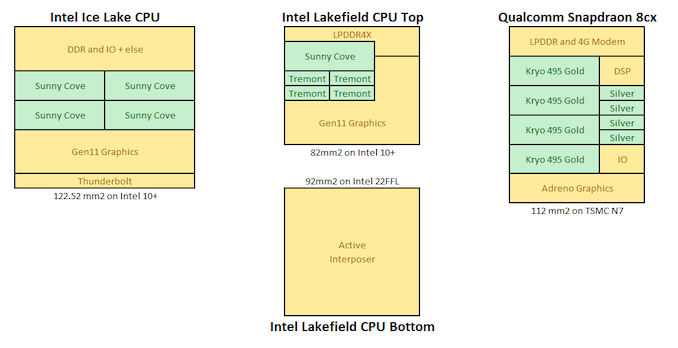

Visualising Heterogeneous CPU Designs

Here are some examples of mobile processors, from Intel and Qualcomm, with the cores in green. On the left is Intel's own Ice Lake processor, with four big cores. In the middle is Intel's Lakefield, which has two stacked silicon dies, but it's the top one that has one big core and four small ones. On the right is Qualcomm's Snapdragon 8cx, currently used in Windows on Snapdragon devices, which uses four performance cores and four efficiency cores, but also integrates a smartphone modem onboard.

In this article, over the following pages, we'll be looking at Intel's new Lakefield processor in detail, covering the new multi-core design, discussing chiplets and Intel's new die-to-die bonding technology called Foveros, the implications of such a design on laptop size (as well as looking at the publicly disclosed Lakefield laptops coming to market), die shots, supposed performance numbers, thermal innovations, and the future for Lakefield. Data for this article has come from our research as well as interviews with Intel's technical personnel and Intel's own presentations on Lakefield at events such as HotChips, Architecture Day, CES, IEDM, and ISSCC. Some information is dissected with helpful input from David Schor of Wikichip. We also cover some of Intel’s innovations in the context of other semiconductor companies, some of which may be competitors.

221 Comments


EthiaW - Tuesday, July 7, 2020 - link

How can we expect something that stingy on silicon area (don't have place for a single more large core) to compete with a snapdragon 9cx (likely with two Cortex-X1) or apple a14? Actually it has no edge over apple a12 from 2018 even the latter faces some 40% performance lost in x86 simulation.

Wilco1 - Wednesday, July 8, 2020 - link

It doesn't even compete with the 18 month old 8cx... It will be interesting to see a side by side Book S review with benchmarks and battery life.

serendip - Tuesday, July 14, 2020 - link

https://www.notebookcheck.net/Samsung-Galaxy-Book-...

Here it is. It barely competes against the 8cx but gets almost half the battery life running at 5W TDP. Samsung is supposed to release an update to allow running at 7W but that would kill battery life even more.

Wilco1 - Wednesday, July 15, 2020 - link

Ouch... Thanks for that link!

reggjoo1 - Tuesday, July 7, 2020 - link

Just manipulating the scheduler, won't be enough. They're gonna have to work on the governor more.

808Hilo - Sunday, July 12, 2020 - link

Headline: Intel expanded its turd business!

We successfully, and at great cost, replicated the Atom processor and are only 10 years late with our consumer grade chip. The improvements are amazing: 1 slow processor supported by 3 superslow processors in a revolutionary new 4 processor die. The chip, designed for warheads, is exclusively down binned and handselected for exacting consumer standards. Support our military. Designing low performance is not cheap. Getting effed - Intel inside!

throAU - Monday, July 13, 2020 - link

So, unless this can compete with the iPad Pro processor of the day, I just don't see the market. Windows 10 on ultra portable tablet type devices already sucks. So your realistic choices are android and iOS. Android has a suite of decently performing, already existent SOCs on the market, likely at far less cost than intel will no doubt try to charge for this. And no AVX-512? Only a single performance core? I just don't see it working out.

I would have thought they'd be far better off not neutering the Sunny Cove core, and working with Microsoft/others on an API for workload queuing to the relevant core for a relevant code fragment. Treat the performance core as you would any other co-processor. Use thread affinity to bind specific UI threads to it. I'm sure there are methods that could be used but no - in order to run on unmodified platforms (that suck for the market segment they are aiming at anyway) - they crippled it.

serendip - Tuesday, July 14, 2020 - link

Notebookcheck has a review comparing the Intel Lakefield and ARM models of the Galaxy Book S:

https://www.notebookcheck.net/Samsung-Galaxy-Book-...

The results aren't pretty. For the same price of around $1000, the Lakefield version loses LTE in some markets, has equal or slightly less performance for CPU and GPU, but it has <10 hour battery life compared to the 8cx model's 16 hours. Despite all the fancy packaging, Lakefield is still half as efficient as Qualcomm's best, which makes it outclassed by Apple's silicon.

The worst part about Lakefield on Windows is how it essentially performs as a quad core Atom chip most of the time. Ian's fears were realized.

throAU - Tuesday, July 14, 2020 - link

This is pretty much exactly what I expected. Except the modern ARM processors have a better feature set than a crippled Lakefield chip. And there's less fragmentation in what they will/will not support vs. other ARM processors of the day.

I expected Qualcomm to outclass it. It won't even be anywhere near close to an A12Z and that's a processor from 12-18 months ago, which will no doubt be outclassed itself by whatever apple release late this year.

ballsystemlord - Wednesday, July 22, 2020 - link

Spelling and grammar errors:

"For those that are interested, Lakefield's PMICs are under the codenames Warren Cove and Castro Cover, and were developed in 2017-2018."

I think you misspelled "cove":

"For those that are interested, Lakefield's PMICs are under the codenames Warren Cove and Castro Cove, and were developed in 2017-2018."

"Even those these CPUs are a 1+4 configuration,..."

"though" not "those":

"Even though these CPUs are a 1+4 configuration,..."

"Another thing to note, which Intel glossed over, that most people are going to be really concerned about."

Missing "is" and concerned about what?

"Another thing to note, which Intel glossed over, is that most people are going to be really concerned about."