HighPoint Releases the SSD7102: A Bootable Quad M.2 PCIe x16 NVMe SSD RAID Card

by Ian Cutress on October 24, 2018 8:00 AM EST

In the upper echelons of commercial workhouses, having access to copious amounts of local NVMe storage is more of a requirement than ‘something nice to have’. We’ve seen solutions in this space range from custom FPGAs to software breakout boxes, and more recently a number of the motherboard vendors have provided PCIe x16 Quad M.2 cards for the market. The only downside is that they rely on processor bifurcation, i.e. the ability for the processor to drive multiple devices from a single PCIe x16 slot. HighPoint has got around that limitation.

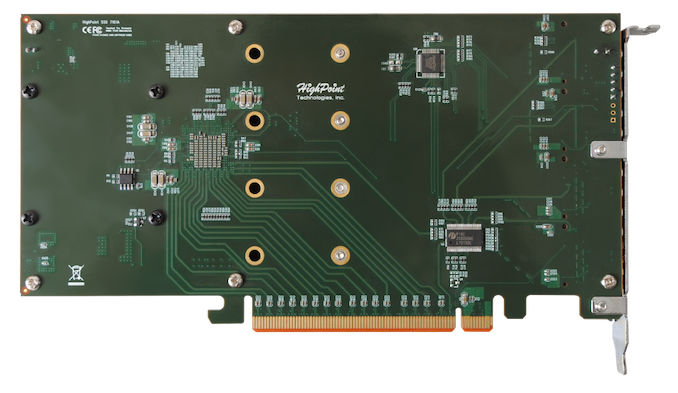

The current way of getting four NVMe M.2 drives in a single PCIe x16 slot sounds fairly easy. There are 16 lanes in the slot, and each drive can take up to four lanes, so what is all the fuss? The problem arises from the CPU side of the equation: that PCIe slot connects directly to one PCIe x16 root complex on the chip, and depending on the configuration it may only be expecting one device to be connected to it. The minute you put four devices in, it might not know what to do. In order to get this to work, you need a single device to act as a communication facilitator between the drives and the CPU. This is where PCIe switches come in. Some motherboards already use these to split a PCIe x16 complex into x8/x8 when different cards are inserted. For a larger job, like a bootable array of four NVMe drives, HighPoint uses a bigger (and more expensive) switch.
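As a rough illustration of the difference between relying on bifurcation and using a switch, the toy model below counts how many drives the host would see in each case. It is a simplified sketch, not a description of any real firmware: the `Slot` class, the `visible_drives` function and the rule that a non-bifurcated slot exposes only one device are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Slot:
    """Hypothetical model of a physical PCIe slot."""
    lanes: int                # electrical lanes wired to the slot
    bifurcation: tuple = ()   # lane split offered by the platform, e.g. (4, 4, 4, 4)


def visible_drives(slot: Slot, drives: int, has_switch: bool = False) -> int:
    """Return how many M.2 drives the host can enumerate (toy model)."""
    if has_switch:
        # The switch is the single device the root port talks to;
        # it fans out to the drives on its own downstream ports.
        return drives
    if not slot.bifurcation:
        # Assumption: without bifurcation the root port trains one link,
        # so only one drive on a passive card is reachable.
        return min(drives, 1)
    # With bifurcation, each sub-link can host one drive.
    return min(drives, len(slot.bifurcation))


if __name__ == "__main__":
    print(visible_drives(Slot(16), 4))                          # passive card, no bifurcation
    print(visible_drives(Slot(16, (4, 4, 4, 4)), 4))            # passive card, x4/x4/x4/x4
    print(visible_drives(Slot(16), 4, has_switch=True))         # card with a PCIe switch
```

Under these assumptions the three calls print 1, 4 and 4: the switch-based card reaches all four drives regardless of what the platform offers.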

In this case HighPoint is using a PLX8747 switch chip with custom firmware for booting. This switch is not cheap (not now that Avago is at the helm of the company and has increased the pricing by several multiples), but it does allow for a configurable interface between the CPU and the drives that works in all scenarios. Up until today, HighPoint already had a device on the market for this, the SSD7101-A, which enabled four M.2 NVMe drives to connect to the machine. What makes the SSD7102 different is that the firmware inside the PLX chip has been changed, and it now allows for booting from a RAID of NVMe drives.

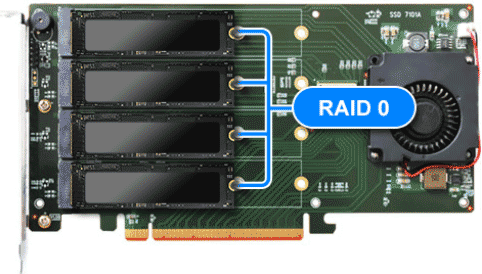

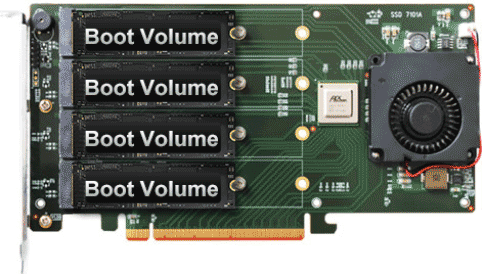

The SSD7102 supports booting with RAID 0 across all four drives, with RAID 1 across pairs of drives, or booting from a single drive in a JBOD configuration. Each drive in the JBOD can be configured to be a boot drive, allowing for multiple OS installs across the different drives. The SSD7102 supports any M.2 NVMe drives from any vendor, although for RAID setups it is advised that identical drives are used.
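To make the trade-offs between these modes concrete, here is a minimal sketch of the usual capacity arithmetic and of how a RAID 0 array maps a logical block to a drive. It assumes the conventional behaviour that striped and mirrored arrays are limited by their smallest member, which is one reason identical drives are advised. The function names and the stripe size are hypothetical and are not taken from HighPoint's firmware or API.

```python
def usable_capacity(mode: str, drive_sizes_gb: list) -> int:
    """Usable capacity in GB for a set of drives (conventional RAID arithmetic)."""
    if mode == "raid0":
        # Striping uses the same amount of space on every member.
        return min(drive_sizes_gb) * len(drive_sizes_gb)
    if mode == "raid1":
        if len(drive_sizes_gb) != 2:
            raise ValueError("this sketch models RAID 1 as a mirrored pair")
        # A mirror holds one copy of the data, limited by the smaller drive.
        return min(drive_sizes_gb)
    if mode == "jbod":
        # Each drive is exposed on its own, so nothing is lost.
        return sum(drive_sizes_gb)
    raise ValueError(f"unknown mode: {mode}")


def raid0_locate(lba: int, stripe_blocks: int, n_drives: int) -> tuple:
    """Map a logical block address to (drive index, block offset on that drive)."""
    stripe = lba // stripe_blocks              # which stripe the block falls in
    drive = stripe % n_drives                  # stripes rotate across the drives
    offset = (stripe // n_drives) * stripe_blocks + lba % stripe_blocks
    return drive, offset


if __name__ == "__main__":
    mixed = [250, 512, 250, 250]               # GB, deliberately mismatched
    print(usable_capacity("raid0", mixed))     # 1000
    print(usable_capacity("jbod", mixed))      # 1262
    print(raid0_locate(130, 128, 4))           # (1, 2)
```

With mismatched drives, the RAID 0 array in this model gives up 262 GB compared with JBOD, because the 512 GB drive can only contribute as much as its smallest partner.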

The card is a single-slot device with a heatsink and a 50mm blower fan, to keep every drive cool. Drives up to the full 22110 standard are supported, and HighPoint says that the card is supported under Windows 10, Server 2012 R2 (or later), Linux Kernel 3.3 (or later) and macOS 10.13 (or later). Management of the drives after installation occurs through a browser-based tool or a custom API for deployments that want to do their own management, and rebuilding arrays is automatic with auto-resume features. MTBF is set at just under 1M hours, with a typical power draw (minus drives) at 8W. HighPoint states that both AMD and Intel are supported, and given the presence of the PCIe switch, I suspect the card would also ‘work’ in PCIe x8 or x4 modes.
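If the card does run in a narrower slot, the four drives would share a smaller uplink. The sketch below estimates what that would mean, assuming PCIe 3.0 signalling (8 GT/s per lane with 128b/130b encoding) and four x4 drives behind the switch. These are raw link rates per direction before protocol overhead, and the function names are made up for the example.

```python
GT_PER_S = 8.0            # PCIe 3.0 transfer rate per lane (assumed generation)
ENCODING = 128 / 130      # 128b/130b line encoding used by PCIe 3.0


def link_gb_per_s(lanes: int) -> float:
    """Raw one-direction bandwidth of a PCIe 3.0 link in GB/s."""
    return lanes * GT_PER_S * ENCODING / 8     # divide by 8 bits per byte


def oversubscription(uplink_lanes: int, drives: int = 4, lanes_per_drive: int = 4) -> float:
    """Ratio of downstream drive lanes to uplink lanes on the switch."""
    return drives * lanes_per_drive / uplink_lanes


if __name__ == "__main__":
    for width in (16, 8, 4):
        print(f"x{width}: {link_gb_per_s(width):.2f} GB/s uplink, "
              f"{oversubscription(width):.0f}:1 oversubscribed")
```

Under these assumptions the uplink provides roughly 15.75 GB/s at x16, 7.88 GB/s at x8 and 3.94 GB/s at x4, so a four-drive RAID 0 would be limited by the slot rather than by the drives in the narrower modes.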

The PCIe card is due out in November, either direct from HighPoint or through reseller/distribution partners. It is expected to have an MSRP of $399, the same as the current SSD7101-A, which does not have the RAID bootable option.

23 Comments


Billy Tallis - Wednesday, October 24, 2018 - link

Microsemi also makes PCIe switches, in competition with PLX/Avago/Broadcom. But they're both targeting the datacenter market heavily and don't want to reduce their margins enough to cater to consumers. Until most server platforms have as many PCIe lanes as EPYC, these switches will be very lucrative in the datacenter and thus too expensive for consumers.

The_Assimilator - Wednesday, October 24, 2018 - link

Very good question. IMO the cost of PLX switches has been holding back interesting board features like this for a long time. We really need a competitor in this arena, and I feel that anyone who chose to compete would be able to make a lot of money.

Kjella - Wednesday, October 24, 2018 - link

Mainly the SLI market died. The single cards became too powerful, the problems too many because it was niche and poorly supported. That only left professional users, which led to lower volume and higher prices. But the problem now was you started to reach a price range where you could just buy enthusiast/server platforms with more lanes. So PLX chips became even more niche, for special enterprise-level needs. To top that off, AMD's Threadripper/Epyc line went PCIe lane crazy with 64/128 native lanes and bifurcation for those who need it, which leaves them with hardly any market at all. You'd have to be crazy to invest in re-inventing this technology; this is just PLX trying to squeeze the last few dollars out of a dying technology.

Vatharian - Wednesday, October 24, 2018 - link

I'm sitting near nForce 780SLI (ASUS P5NT Deluxe) and Intel Skulltrail, both have PCIe switches. After that most of my multi-PCIe boards were dual CPU, thanks to Intel being a male reproductive organ to consumers, and AMD spectacularly failing to deliver any system capable of anything more than an increased power bill.

On the fun side, mining haze brought a lot of small players to the yard that learned how to work PCI-Express magic. Some of them may try tackling the switch challenge at some point, which I very much wish for.

Things might have been more lively if PCI SIG hadn't stopped to smell the flowers too. Excuse me, how many years have we been stuck at PCI-Express 2.5?

Bytales - Wednesday, October 24, 2018 - link

People don't know, but this is not something new. Amfeltec did a similar board, perhaps with a similar splitter, and it works with 4 NVMe SSDs. I had to order from them directly from Canada and paid 400 or 500 dollars, I don't know. But it was at a time when people kept telling me 4 NVMes from a single PCI Express 3.0 16x slot is impossible.

http://amfeltec.com/products/pci-express-gen-3-car...

I have 3 SSDs, a 250 960 evo, a 512 950pro and a 250 WD black 2018 model. They are all recognized without any hiccups whatsoever, and all are individually bootable.

Dragonstongue - Wednesday, October 24, 2018 - link

They could/should have used a larger, likely MUCH quieter fan, as them "tiny fans" tend to be overly loud for nothing. High pressure is not what these things need, they need high flow. Likely they could have used say a 90 or 110mm fan size set at an incline to vent "out the back" to promote cooling efficacy.

All depends on how it works of course, but it does open the doors of slotting 4 lower capacity m.2 drives to create a single larger volume ^.^

Diji1 - Friday, November 2, 2018 - link

>likely they could have used say a 90 or 110mm fan size set at an incline to vent "out the back"

Sure they could have done that but then it takes up more than 1 PCI slot. Well actually it takes up more since radial fans do not handle tight fitting spaces very well unlike the blower style fan that it comes with.

'nar - Wednesday, October 24, 2018 - link

I've had more RAID controllers fail on me than SSDs, so I only use JBOD now.

Dug - Wednesday, October 31, 2018 - link

I wonder if 4 nvme ssd's would saturate even a 12Gb/s hba though.

Dug - Wednesday, October 31, 2018 - link

I forgot to add, I agree. I haven't checked all cards of course, but we've had RAID controllers with backup batteries fail. Why did they fail? Because when the RAID card detects the backup battery is bad or can't hold a charge, it won't continue running even if your system is powered on! So with all this redundancy, the single point of failure is a tiny battery that corrupts your entire array.