Assessing IBM's POWER8, Part 1: A Low Level Look at Little Endian

by Johan De Gelas on July 21, 2016 8:45 AM ESTInside the Beast(s)

When the POWER8 was first launched, the specs were mind boggling. The processor could decode up to 8 instructions, issue 8 instructions, and execute up to 10 and all this at clockspeed up to 4.5 GHz. The POWER8 is thus an 8-way superscalar out of order processor. Now consider that

- The complexity of an architecture generally scales quadratically with the number of "ways" (hardware parallelism)

- Intel's most advanced architecture today - Skylake - is 5-way

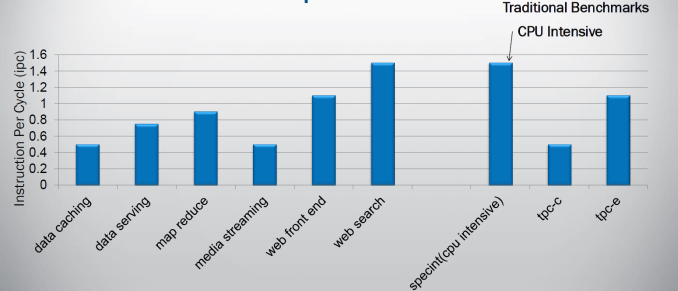

and you know this is a bold move. If you superficially look at what kind of parallelism can be found in software, it starts to look like a suicidal move. Indeed on average, most modern CPU compute on average 2 instructions per clockcycle when running spam filtering (perlbench), video encoding (h264.ref) and protein sequence analyses (hmmer). Those are the SPEC CPU2006 integer benchmarks with the highest Instruction Per Clockcycle (IPC) rate. Server workloads are much worse: IPC of 0.8 and less are not an exception.

It is clear that simply widening a design will not bring good results, so IBM chose to run up to 8 threads simultaneously on their core. But running lots of threads is not without risk: you can end up with a throughput processor which delivers very poor performance in a wide range of applications that need that single threaded speed from time to time.

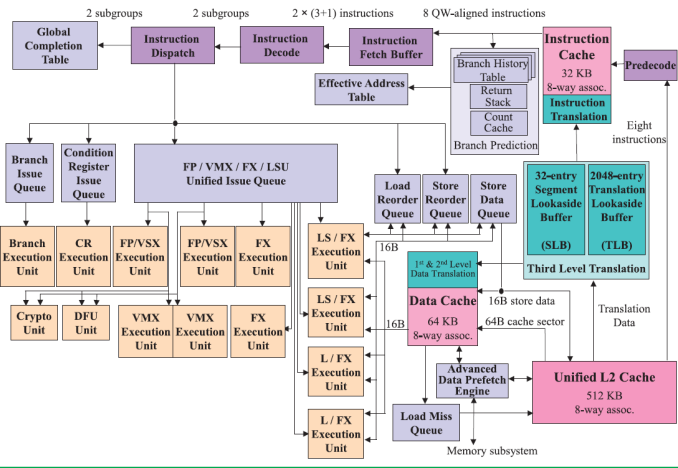

The picture below shows the wide superscalar architecture of the IBM POWER8. The image is taken from the white paper "IBM POWER8 processor core architecture", written by B. Shinharoy and many others.

The POWER8+ will have very similar microarchitecture. Since it might have to face a Skylake based Xeon, we thought it would be interesting to compare the POWER8 with both Haswell/Broadwell as Skylake.

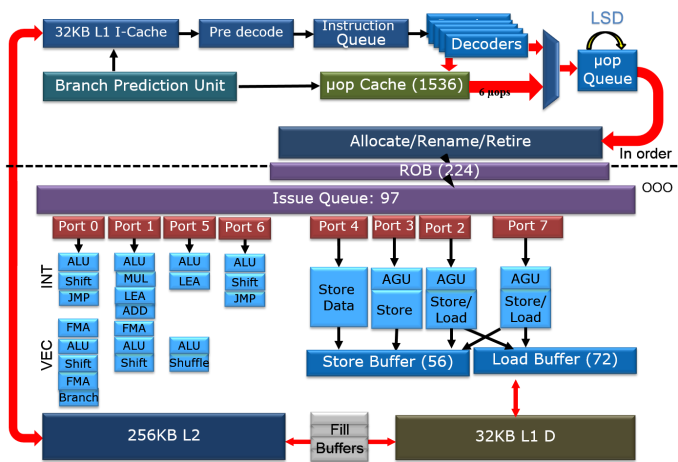

The second picture is a very simplified architecture plan that we adapted from an older Intel Powerpoint presentation about the Haswell architecture, to show the current Skylake architecture. The adaptations were based on the latest Intel optimization manuals. The Intel diagram is much simpler than the POWER8's but that is simply because I was not as diligent as the people at IBM.

It is above our heads to compare the different branch prediction systems, but both Intel and IBM combine several different branch predictors to choose a branch. Both make use of a very large (16 K entries) global branch history table. Both processors scan 32 bytes in advance for branches. In case of IBM this is exactly 8 instructions. In case of Intel this is twice as much as it can fetch in one cycle (16 Bytes).

On the POWER8, data is fetched from the L2-cache and then predecoded into the L1-cache. Predecoding includes adding branch, exception, and grouping. This makes sure that predecoding is out the way before the actual computing ("Von Neuman Cycle") starts.

In Intel Haswell/Skylake, instructions are only predecoded after they are fetched. Predecoding performs macro-op fusion: fusing two x86 instructions together to save decode bandwidth. Intel's Skylake has 5 decoders and up to 5 µop instructions are sent down the pipelines. The current Xeon based upon Broadwell has 4 decoders and is limited to 4 instructions per clock. Those decoded instructions are sent into a µ-op cache, which can contain up to 1536 instructions (8-way), about 100 bits wide. The hitrate of the µop cache is estimated at 80-90% and up to 6 µops can be dispatched in that case. So in some situations, Skylake can run 6 instructions in parallel but as far as we understand it cannot sustain it all the time. Haswell/Broadwell are limited to 4. The µop cache can - most of the time - reduce the branch misprediction penalty from 19 to 14.

Back to the POWER8. Eight instructions are sent to the IBM POWER8 fetch buffer, where up 128 instructions can be held for two thread(s). A single thread can only use half of that buffer (64 instructions). This method of allocation gives each of two threads as much resources as one (i.e. no sharing), which is one of the key design philosophies for the POWER8 architecture.

Just like in the x86 world, the decoding unit breaks down the more complex RISC instructions into simpler internal instructions. Just like any modern Intel CPU, the opposite is also possible: the POWER8 is capable of fusing some combinations of 2 adjacent instructions into one instruction. Saving internal bandwidth and eliminating branches is one of the way this kind of fusion increases performances.

Contrary to the Intel's unified queue, the IBM POWER has 3 different issue queues: branch, condition register, and the "Load/Store/FP/Integer" queue. The first two can issue one instruction per clock, the latter can send off 8 instructions, for a combined total of 10 instructions per cycle. Intel's Haswell-Skylake cores can issue 8 µops per cycle. So both the POWER8 and Intel CPU have more than ample issue and execution resources for single threaded code. More than one thread is needed to really make use of all those resources.

Notice the difference in focus though. The Intel CPU has half of the load units (2), but each unit has twice the bandwidth (256 bit/cycle). The POWER8 has twice the amount of load units (4), but less bandwidth per unit (128 bit per cycle). Intel went for high AVX (HPC) performance, IBM's focus was on feeding 2 to 8 server threads. Just like the Intel units, the LSUs have Address Generation Units (AGUs). But contrary to Intel, the LSUs are also capable of doing simple integer calculations. That kind of massive integer crunching power would be a total waste on the Intel chip, but it is necessary if you want to run 8 threads on one core.

124 Comments

View All Comments

HellStew - Wednesday, July 27, 2016 - link

It depends what kind of software you are running. If you are running giant backend workloads on x86, you can seamlessly migrate that data to PPC while keeping custom front ends running on x86.aryonoco - Saturday, July 23, 2016 - link

Johan, maybe the little endian-ness makes a difference in porting proprietary software, but pretty much all open source software on Linux has supported BE POWER for a long time.If you get the time and the inclination Johan, it would be great if you could say do some benchmarks on BE RHEL 7 vs LE RHEL 7 on the same POWER 8 system. I think it would make for fascinating reading in itself, and would show if there are any differences when POWER operates in BE mode vs LE mode.

aryonoco - Saturday, July 23, 2016 - link

Actually scrap that, seems like IBM is fully focusing on LE for Linux on POWER in future. I'm not sure there will be many BE Linux distributions officially supporting POWER9 anyway. So your choice of focusing on LE Linux on POWER is fully justified.HellStew - Wednesday, July 27, 2016 - link

Side note: Once you are running KVM, you can run any mix of BE and LE linux varieties side by side. I'm running FedoraBE, SuSE BE, Ubuntu LE, CentOS LE, and (yes a very slow copy of windows) on one of these chipsrootbeerrail - Saturday, July 23, 2016 - link

If a machine is completely isolated, it doesn't matter much to the machine. I personally find BE easier to read in hex dumps because it follows the left-to-right nature of English numbers, but there are reasons to use LE for human understanding as well.The problem shows up the instance one tries to interchange binary data. If the endian order does not match, the data is going to get scrambled. Careful programming can work around this issue, but not everyone is a careful programmer - there's a lot of 'get something out the door' from inexperienced or lazy people. If everything is using the same conventions (not only endian, but size of the binary data types (less of a problem now that most everything has converged to 64-bit)), it's not an issue. Thus having LE on Power makes the interchange of binary data easier with the X86 world.

errorr - Friday, July 22, 2016 - link

Great Article! Just an FYI, the term "just" as in "just out" on the first page has different meanings on opposite sides of the Atlantic and is usually avoided in writing for international audiences. I'm not quite sure which one is used her. The NaE would mean 'just out' in that it had come out right before while the BrE would mean it came out right after the time period referenced in the sentence.xCalvinx - Friday, July 22, 2016 - link

awesome!!..keepup the good work..looking forward to Part2!! ... actualy cant wait.. hurryup lol.. :)double thumbsup

Mpat - Friday, July 22, 2016 - link

Skylake does not have 5 decoders, it is still 4. I know that that segment of the optimization manual is written in a cryptic way, but this's what actually happened: up until Broadwell there are 4 decoders and a max bandwidth from the decoder segment of 4 uops. If the first decoder (the complex one) produces 4 uops from one x86 op, the other decoders can't work. If the first produces 3, then the second can produce 1, etc. this means that the decoders can produce one of these combinations of uops from an x86 op, depending on how complex a task the first decoder has: 1/1/1/1, 2/1/1, 3/1, or 4. Skylake changes this so the max bandwidth from that segment is now 5, and the legal combinations become 1/1/1/1, 2/1/1/1, 3/1/1, and 4/1. You still can't do 1/1/1/1/1, so there is still only 4 decoders. Make sense?ReaperUnreal - Friday, July 22, 2016 - link

Why do the tests with GCC? Why not give each platform their full advantage and go with ICC on Intel and xLC on Power? The compiler can make a HUGE difference with benchmarks.Michael Bay - Saturday, July 23, 2016 - link

It`s right in the text why.